1. Welcome

1.1. Product overview

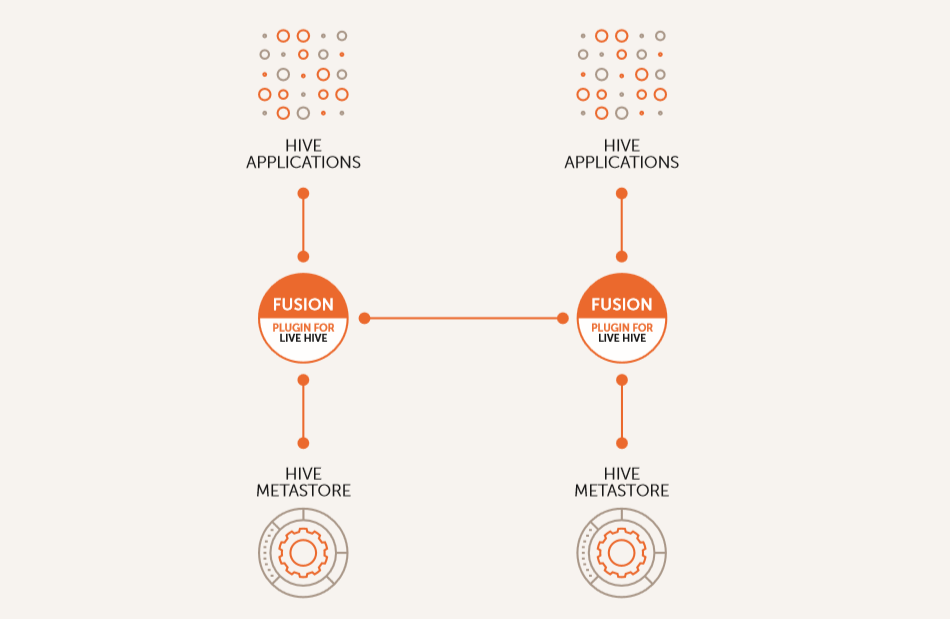

The Fusion Plugin for Live Hive enables WANdisco Fusion to replicate Hive’s metastore, allowing WANdisco Fusion to maintain a replicated instance of Hive’s metadata and, in future, support Hive deployments that are distributed between data centers.

1.2. Documentation guide

This guide contains the following:

- Welcome: this chapter introduces this user guide and provides help with how to use it.

- Release Notes: details the latest software release, covering new features, fixes and known issues to be aware of.

- Concepts: explains how the Fusion Plugin for Live Hive, through WANdisco Fusion, uses WANdisco’s Live Data platform.

- Installation: covers the steps required to install and set up Fusion Plugin for Live Hive into a WANdisco Fusion deployment.

- Operation: the steps required to run, reconfigure and troubleshoot Fusion Plugin for Live Hive.

- Reference: additional Fusion Plugin for Live Hive documentation, including documentation for the available REST API.

1.2.1. Admonitions

In this guide we highlight types of information using the following callouts:

| The alert symbol highlights important information. |

| The STOP symbol cautions you against doing something. |

| Tips are principles or practices that you’ll benefit from knowing or using. |

| The KB symbol shows where you can find more information, such as in our online Knowledgebase. |

1.3. Contact support

See our online Knowledgebase, which contains updates and more information.

If you need more help, raise a case on our support website.

1.4. Give feedback

If you find an error or if you think some information needs improving, raise a case on our support website or email docs@wandisco.com.

2. Release Notes

2.1. Live Hive Plugin 1.2.1 build 33

15 August 2018

The Fusion Plugin for Live Hive extends WANdisco Fusion by replicating Apache Hive metadata. With it, WANdisco Fusion maintains a Live Data environment including Hive content, so that applications can access, use and modify a consistent view of data everywhere, spanning platforms and locations, even at petabyte scale. WANdisco Fusion ensures the availability and accessibility of critical data everywhere.

The 1.2.1 release of the Fusion Plugin for Live Hive is a minor update, which builds on the improvements of the 1.2 release and focusses on resolving known issues and improving resilience both during installation and operation. It does not introduce new features.

2.1.1. Available Packages

This release of the Fusion Plugin for Live Hive supports deployment into WANdisco Fusion 2.11.1 or greater for HDP and CDH Hadoop clusters:

- CDH 5.12.0 - CDH 5.14.0

- HDP 2.6.0 - HDP 2.6.4

2.1.2. Installation

The Fusion Plugin for Live Hive supports an integrated installation process that allows it to be added to an existing WANdisco Fusion deployment. Consult the Installation Guide for details.

2.1.3. Resolved Issues

- WD-LHV-681 - Restarting services when required was marked as successful for Cloudera Manager with an Enterprise license, but did not complete. All necessary steps now complete as expected.

- WD-LHV-684 - After deleting a replication rule from Fusion Plugin for Live Hive, replication continued indefinitely. Replication now stops within one minute of the rule being deleted.

- WD-LHV-729 - The Fusion Plugin for Live Hive proxy stack failed to deploy on Ambari when certain directories were missing. The stack now creates these automatically when required. Related improvements also increase the reliability of starting the proxy.

- WD-LHV-863 - When an inconsistency between partitions was resolved, it did not always correct the table metadata to bring it in line. This has been addressed and tables now correctly become consistent after partitions are repaired, when the partition was the only reason the table was inconsistent.

- WD-LHV-881 - It was not possible to install Live Hive on Ambari without Kerberos being enabled. This has now been resolved.

2.1.4. Known Issues

- WD-LHV-878 - Table repairs currently also apply to the containing database, which introduces a risk of data loss. See Risk of data loss in Hive repair.

- WD-LHV-654 - Consistency checks currently include non-replicated paths which should be excluded.

- WD-LHV-238 - The Live Hive Plugin requires common Hadoop distributions and versions to be in place for all replicated zones.

- WD-LHV-341 - The proxy for the Hive Metastore must be deployed on the same host as the Fusion server.

2.1.5. Other Improvements

- FIX - LHV installer doesn’t work on CDH5.8 - WD-LHV-708

- FIX - Unexpected response from manager when trying to check if LIVE_HIVE_PROXY installed on manager - WD-LHV-751

- FIX - LIVE_HIVE_PROXY failing to start during Live Hive installation - WD-LHV-842

- FIX - Live Hive Proxy Master Installation failed - WD-LHV-848

- FIX - Using manual kerberos parameters doesn’t persist past installer (CDH) - WD-LHV-695

- FIX - Unable to delete LIVE_HIVE* services if installation broken - WD-LHV-697

- FIX - Proxy stack does not define correct dependencies for stale config restart - WD-LHV-73

- FIX - Install fails in case of manual kerberos setup - WD-LHV-791

- FIX - Repair doesn’t work in case Hive Table Conflicting inconsistency - WD-LHV-796

- FIX - Live Hive 1.2.1 doesn’t work with default keytabs - WD-LHV-862

- FIX - Hive Consistency checks are not fired when pressing check - WD-LHV-867

- FIX - Keytab missing from High Availability node - WD-LHV-688

- FIX - In some cases Live Hive doesn’t replicate databases that match the rule patterns - WD-LHV-716

- FIX - Hive rule Consistency Check status page missing pagination; limited to 10 DBs - WD-LHV-734

- FIX - Hiveserver2 template won’t start because revert property name is incorrect - WD-LHV-750

- FIX - Replication doesn’t work if Hive rule was created after database - WD-LHV-759

- FIX - live.hive.proxy.keytab Overwritten by Live Hive - WD-LHV-768

- FIX - Error message not correct when Hive is not installed on the cluster - WD-LHV-769

2.2. Live Hive Plugin 1.2 build 27

11 July 2018

The Fusion Plugin for Live Hive extends WANdisco Fusion by replicating Apache Hive metadata. With it, WANdisco Fusion maintains a Live Data environment including Hive content, so that applications can access, use and modify a consistent view of data everywhere, spanning platforms and locations, even at petabyte scale. WANdisco Fusion ensures the availability and accessibility of critical data everywhere.

The 1.2 release of the Fusion Plugin for Live Hive is a minor update, adding functionality and resolving some of the known issues with prior releases, ensuring that it covers the features available with the prior Fusion Hive Metastore Plugin. With this release, implementations of WANdisco Fusion that include Hive replication requirements should take advantage of the Fusion Plugin for Live Hive.

2.2.1. New Platform Support

The Fusion Plugin for Live Hive has added support for the following new platforms since version 1.0:

- CDH 5.14.0

2.2.2. Available Packages

This release of the Fusion Plugin for Live Hive supports deployment into WANdisco Fusion 2.11.1 or greater for HDP and CDH Hadoop clusters:

- CDH 5.12.0 - CDH 5.14.0

- HDP 2.6.0 - HDP 2.6.4

2.2.3. Installation

The Fusion Plugin for Live Hive supports an integrated installation process that allows it to be added to an existing WANdisco Fusion deployment. Consult the Installation Guide for details.

2.2.4. What’s New

This minor update to Fusion Plugin for Live Hive adds some new key features and addresses limitations of the 1.0 release. Notable enhancements are:

- Replication Rules: Hive Replication Rules no longer generate HCFS replication rules in response to operations on tables that may create new data locations that require replication. Instead, this release requires that Hive data are replicated with an existing HCFS replication rule. This allows for a greater scale of operation, because Hive operations such as CREATE TABLE can now re-use existing replication rules, and you have greater control over which content is replicated by combining Hive replication rules and HCFS replication rules.

- Pattern Syntax: Replication rules can be created to match Hive tables based on the same simple syntax used by Hive for pattern matching, rather than more complex regular expressions. Wildcards in replication rules can only be * for any character(s) or | for a choice. Examples are employees, emp*, emp*|*ees, all of which will match a table named employees.

- Database-level replication: Hive replication rules now apply to Hive databases as well as tables. Where previous versions of Live Hive Plugin replicated all Hive databases, in this release data replication may be applied on a per-database basis.

- Initial transfers and consistency checks: Initial transfers and Consistency Checks can be performed for a Hive replication rule, covering all elements that match the rule.

- Rule management: Hive replication rules can be removed.

- In-place upgrades: Future upgrades of the Fusion Plugin for Live Hive will be able to offer a streamlined upgrade path.

- Database-level checks and initial transfer: You can perform a consistency check for an entire database, and get results that indicate whether elements within the database are inconsistent. Similarly, the Fusion Plugin for Live Hive allows you to perform an initial transfer of data for a database as a whole, rather than requiring you to perform that transfer for each table.
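The Pattern Syntax entry above can be illustrated with a small matcher. This is only a sketch of the rule as described in the text (* for any characters, | for a choice), not the plugin's own implementation; the function name hive_pattern_matches is hypothetical, and it assumes that names and patterns contain only letters, digits, underscores, * and |.

```shell
#!/usr/bin/env bash
# Return 0 if NAME matches PATTERN, where PATTERN may use '*' for any run of
# characters and '|' to separate alternative patterns.
hive_pattern_matches() {
  local pattern="$1" name="$2" alt
  local -a alternatives
  # Split the pattern on '|' into its alternatives.
  IFS='|' read -r -a alternatives <<< "$pattern"
  for alt in "${alternatives[@]}"; do
    # Leaving $alt unquoted makes [[ ]] treat '*' as a wildcard.
    # Assumption: no other glob characters appear in the pattern.
    if [[ "$name" == $alt ]]; then
      return 0
    fi
  done
  return 1
}
```

For example, `hive_pattern_matches 'emp*|*ees' employees && echo match` reports a match, because both alternatives cover the table name employees.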

2.2.5. Resolved Issues

The following known issues have been resolved with this release.

- WD-LHV-219 - Consistency checks and initial transfers could not be performed at the level of a Hive Regex rule, but had to be performed per-table. Consistency checks and initial transfers can now be performed for databases or tables.

- WD-LHV-342 - The Fusion Plugin for Live Hive did not provide for the removal of a Hive Regex rule. Hive replication rules can now be removed.

- WD-LHV-343 - Databases were replicated to all zones on creation regardless of Hive Regex rules. Databases are now replicated in accordance with established Hive replication rules.

- WD-LHV-344 - Replication rules for Hive data locations generated by the Fusion Plugin for Live Hive could not be edited. Replication rules for locations associated with Hive data are no longer controlled by the Fusion Plugin for Live Hive, and can be edited as regular HCFS rules.

- WD-LHV-480 - It wasn’t possible to replicate metadata for tables created using the CTAS "create table as select" method. Table metadata is now governed by Hive replication rules and associated table data by regular HCFS replication rules.

- WD-LHV-486 - It was previously not possible to trigger initial transfers for metadata on nodes where the data was undergoing initial transfer. Initial transfers can now be triggered from any node.

2.2.6. Known Issues

- WD-LHV-654 - Consistency checks currently include non-replicated paths which should be excluded.

- WD-LHV-238 - The Live Hive Plugin requires common Hadoop distributions and versions to be in place for all replicated zones.

- WD-LHV-341 - The proxy for the Hive Metastore must be deployed on the same host as the Fusion server.

2.2.7. Other Improvements

- Support regex rule removal - WD-LHV-342

- FIX - Hive commands through beeline using anonymous user without Kerberos fail - WD-LHV-407

- FIX - Unable to remove Hive Regex - WD-LHV-481

- FIX - Unable to trigger repair of a table on a fusion node where the database does not exist - WD-LHV-486

- FIX - metastore.service.host is no longer used - WD-LHV-488

- Allow multiple LHV proxies in a single zone for proxy HA - WD-LHV-490

- FIX - Hive Metastore Canary cannot pass through proxy - WD-LHV-500

- Document deployment models - WD-LHV-523

- Handle pre-existing kerberos principals on CDH - WD-LHV-552, WD-LHV-485

- FIX - Creating a table with a location that matches existing replicated table broken - WD-LHV-554

- CDH 5.13 Sentry / Metastore HA testing - WD-LHV-557

- Remove auto-creation of a DSM on table creation - WD-LHV-561

- Test if ConsistencyCheck and Initial Transfer work over DBs through REST API - WD-LHV-567

- Trigger repair by regex for all databases, ignoring location - WD-LHV-571

- Regex rule status tab - WD-LHV-572

- FIX - Hive Table rules disappearing - WD-LHV-573

- Hive Server 2 unable to connect to Hive Metastore through Hive Thrift Proxy with Kerberos - WD-LHV-574

- Add ability to trigger CC via regex rule in the UI - WD-LHV-582

- Use patterns as Hive uses them instead of general Regexes - WD-LHV-583

- Support display of metadata replication status in FUI - WD-LHV-584

- FIX - Installer fails on non-Kerberized cluster due to auto.kerberos.enabled option - WD-LHV-591

- FIX - Consistency check and initial transfer open new connection for every thrift call - WD-LHV-599

- FIX - Installer fails to install the Live Hive Plugin service in Cloudera Manager - WD-LHV-600

- FIX - Consistency Check All option is incorrectly reporting databases as consistent - WD-LHV-606

- FIX - Add if not exists partition fails with NPE if partition exists - WD-LHV-607

- FIX - "Error connecting to Hive Metastore" within Cloudera Navigator Metadata Server log - WD-LHV-608

- FIX - Adding LHV HA proxy to a node inducted to a zone post an existing activated LHV install breaks new LHV installation - WD-LHV-611

- Regex rules can now be removed - WD-LHV-614

- Provide the CC status in the WD-LHV-569 data - WD-LHV-616

- Document how to manually deploy a proxy if only gateway nodes have been installed - WD-LHV-618

- FIX - The stack upgrade process is incorrectly upgrading on every proxy restart - WD-LHV-620

- Proxy not starting until manually start Live Hive Slave - WD-LHV-622

- Need single database/table variants of the new get databases/tables by ruleid - WD-LHV-623

- Remove hardcoded user:group from postinstall scripts and tidy up folder permissions - WD-LHV-625

- Fix silent installer for LHV - WD-LHV-627

- Document LHV-628 known issue - WD-LHV-629

- Document requirements for user running live hive proxy - WD-LHV-630

- FIX - Regex rule status consistency check fails shortly after issuing a repair - WD-LHV-634

- Rename CDH service from "live_hive_proxy" - WD-LHV-351

- Typo fixes - WD-LHV-551

- FIX - Create Rule screen doc link "Hive Pattern" anchor refers to "regex" - WD-LHV-633

- Link to Fusion UI from live_hive_proxy configuration in CDH - WD-LHV-372

- FIX - Kerberos ticket expiration through long run of the testing batch - WD-LHV-528

- FIX - Service.args leaks - WD-LHV-540

- FIX - Dependency on fusion-server for non-repl operations, even with repl_exchange_dir - WD-LHV-289

- Standardise logging to use SLF4J - WD-LHV-387

- FIX - Wrong plugin status after installation - WD-LHV-497

- Publish type script definitions - WD-LHV-507

- FIX - NoClassDefFoundError after LiveHive successful installation - WD-LHV-521

- Complete solution to Metastore port config - WD-LHV-539

- Retry thrift client - WD-LHV-241

- FIX - [CDH-5.13] LiveHive plugin status unknown in UI - WD-LHV-392

- FIX - Silent installer will attempt to install live-hive erroneously - WD-LHV-412

- Investigate Hiveserver2 token issue described in WD-LHV-386 - WD-LHV-414

- FIX - Unexpected error occurred message won’t vanish - WD-LHV-483

- Unify live hive service name on HDP and CDH - WD-LHV-495

- Final step - warn of outage - WD-LHV-503

- FIX - Installation failed: Hive Install step Restart Hive Service failed - WD-LHV-510

- Include live-hive logs in talkbacks - WD-LHV-530

- Document validation section - WD-LHV-534

- Rewrite the package removal instructions - WD-LHV-538

- Document the properties added as part of the fix for LHV-414 - WD-LHV-541

- Add support for CDH 5.14 - WD-LHV-548

- New User Guide Section: Deployment Planning - WD-LHV-550

- Better error message and retry button on parcel/stack download page - WD-LHV-445

- FIX - Hiveserver2 couldn’t connect to LiveHive Proxy - WD-LHV-542

3. Concepts

3.1. Product concepts

Familiarity with product and environment concepts will help you understand how to use the Fusion Plugin for Live Hive. Learn the following concepts to become proficient with replication.

- Apache Hive: Hive is a data warehousing technology for Apache Hadoop. It is designed to offer an abstraction that supports applications that want to use data residing in a Hadoop cluster in a structured manner, allowing ad-hoc querying, summarization and other data analysis tasks to be performed using high-level constructs, including Apache Hive SQL queries.

- Hive Metadata: The operation of Hive depends on the definition of metadata that describes the structure of data residing in a Hadoop cluster. Hive also organizes its metadata in a structure, including definitions of Databases, Tables, Partitions, and Buckets.

- Apache Hive Type System: Hive defines primitive and complex data types that can be assigned to data as part of the Hive metadata definitions. These are primitive types such as TINYINT, BIGINT, BOOLEAN, STRING, VARCHAR, TIMESTAMP, etc., and complex types like Structs, Maps, and Arrays.

- Apache Hive Metastore: The Apache Hive Metastore is a stateless service in a Hadoop cluster that presents an interface for applications to access Hive metadata. Because it is stateless, the metastore can be deployed in a variety of configurations to suit different requirements. In every case, it provides a common interface for applications to use Hive metadata.

  The Hive Metastore is usually deployed as a standalone service, exposing an Apache Thrift interface by which client applications interact with it to create, modify, use and delete Hive Metadata in the form of databases, tables, etc. It can also be run in embedded mode, where the metastore implementation is co-located with the application making use of it.

- WANdisco Fusion Live Hive Proxy: The Live Hive Proxy is a WANdisco service that is deployed with Live Hive, acting as a proxy for applications that use a standalone Hive Metastore. The service coordinates actions performed against the metastore with actions within clusters in which associated Hive metadata are replicated.

- Hive Client Applications: Client applications that use Apache Hive interact with the Hive Metastore, either directly (using its Thrift interface), or indirectly via another client application such as Beeline or Hiveserver2.

- Hiveserver2: Hiveserver2 is a service that exposes a JDBC interface for applications that want to use it for accessing Hive. This could include standard analytic tools and visualization technologies, or the Hive-specific CLI called Beeline.

  Hive applications determine how to contact the Hive Metastore using the Hadoop configuration property hive.metastore.uris.

- Hiveserver2 Template: A template service that amends the hiveserver2 config so that it no longer uses the embedded metastore, and instead correctly references the hive.metastore.uris parameter that points to our "external" Hive Metastore server.

- Hive pattern rules: A simple syntax used by Hive for matching database objects. This pattern system replaced the more complex regular expressions that were used prior to Live Hive Plugin 1.2.

- WANdisco Fusion plugin: The Fusion Plugin for Live Hive is a plugin for WANdisco Fusion. Before you can install it you must first complete the installation of the core WANdisco Fusion product. See WANdisco Fusion user guide.

| Get additional terms from the Big Data Glossary. |

3.2. Product architecture

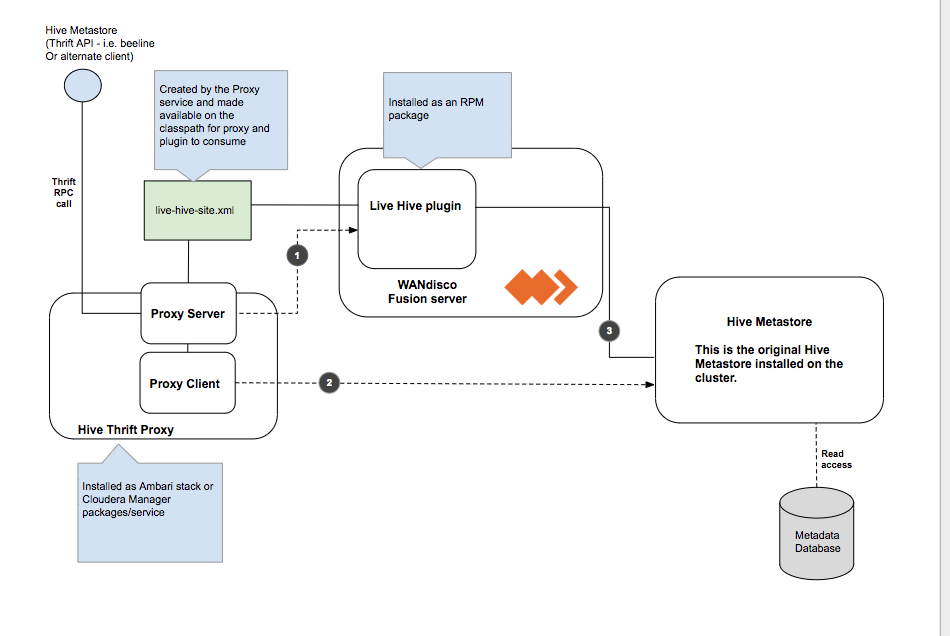

The native Hive Metastore is not replaced. Instead, the Live Hive Plugin runs as a proxy server that issues commands via the connected client (e.g. Beeline) to the original metastore, which is on the cluster.

The Fusion Plugin for Live Hive proxy passes read commands directly to the local Hive Metastore, while the WANdisco Fusion Live Hive Plugin co-ordinates any write commands, so that all metastores on all clusters perform the write operations, such as table creation. Live Hive also automatically starts to replicate Hive tables when their names match a user-defined rule.

| 1 | Write access needs to be co-ordinated by Fusion before executing the command on the metastore. |

| 2 | Read Commands are 'passed-through' straight to the metastore as we do not need to co-ordinate via Fusion. |

| 3 | Makes connection to the metastore on the cluster. |
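The read/write split described above can be sketched as a simple classifier. The call names used here (get_table, create_table and so on) follow the naming style of the Hive Metastore Thrift interface, but the prefix lists and the function name classify_metastore_call are illustrative assumptions only; they are not the plugin's actual routing rules.

```shell
#!/usr/bin/env bash
# Classify a metastore call name as "read" (passed straight through to the
# local metastore) or "write" (co-ordinated through Fusion first).
# The prefix lists are an assumption made for illustration.
classify_metastore_call() {
  case "$1" in
    get_*|list_*)
      echo "read" ;;
    create_*|drop_*|alter_*|add_*|append_*|rename_*)
      echo "write" ;;
    *)
      echo "unknown" ;;
  esac
}
```

With this sketch, `classify_metastore_call get_table` prints read, while `classify_metastore_call create_table` prints write.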

3.2.1. Limitations

Membership changes

There is currently no support for dynamic membership changes. Once installed on all Fusion nodes, the Live Hive Plugin is activated. See Activate Live Hive Plugin. During activation, the membership for replication is set and cannot be modified later. For this reason, it’s not possible to add new Live Hive Plugin nodes at a later time, including a High Availability node running an existing Live Hive proxy that wasn’t part of your original membership.

Any change to membership in terms of adding, removing or changing existing nodes will require a complete reinstallation of Live Hive.

Hive must be running at all zones

All participating zones must be running Hive in order to support replication. We’re aware that this currently prevents the popular use case of replicating between on-premises clusters and S3/cloud storage, where Hive is not running. We intend to remove the limitation in a future release.

3.3. Deployment models

The following deployment models illustrate some of the common use cases for running Live Hive.

3.4. Analytic off-loading

In a typical on-premises Hadoop cluster, data ingest and analytic jobs all run through the same infrastructure, where some activities impose a load on the cluster that can impact other activities. Fusion Plugin for Live Hive allows you to divide up the workflow across separate environments, which lets you isolate the overheads associated with some events. You can ingest in one environment while using a different environment where capacity is provided to run the analytic jobs. You get more control over each environment’s performance.

- You can ingest data from anywhere and query it at scale within the environment.

- You can ingest data on premises (or wherever the data is generated) and query it at scale in another optimized environment, such as a cloud environment with elastic scaling that can be spun up only when query jobs are queued. In this model, you may ingest data continuously but you don’t need to run a large cluster 24 hours per day for query jobs.

3.5. Multi-stage jobs across multiple environments

A typical Hadoop workflow might involve a series of activities: ingesting data, cleaning data and then analyzing the data in a short series of steps. You may be generating intermediate output to be run against end-stage reporting jobs that perform analytical work. Running all these work streams on a single cluster could require a lot of careful coordination between the different types of workloads that make up multi-stage jobs. This is a common chain of query activities for Hive, where you might ingest raw data, refine and augment it with other information, then eventually run analytic jobs against your output on a periodic basis, for reporting purposes, or in real time.

In a replicated environment, however, you can control where those job stages are run. You can split this activity across multiple clusters to ensure that the query jobs needed for reporting purposes have access to the capacity necessary to run within SLAs. You can also run different types of clusters to make more efficient use of the overall chain of work that occurs in a multi-stage job environment. You could have one cluster that is tuned for the most efficient ingest, while running a completely different kind of environment that is tuned for another task, such as the end-stage reporting jobs that run against processed and augmented data. Running with Live Data across multiple environments allows you to run each type of activity in the most efficient way.

3.6. Migration

Live Hive allows you to move both the Hive data, stored in HCFS, and the associated Hive metadata from an on-premises cluster over to cloud-based infrastructure. There’s no need to stop your cluster activity; the migration can happen without impact to your Hadoop operations.

4. Installation

4.1. Pre-requisites

An installation should only proceed if the following prerequisites are met on each Live Hive Plugin node:

- Hadoop Cluster (CDH or HDP, meeting WANdisco Fusion requirements, see Available Packages)

- Hive installed, configured and running on the cluster

- WANdisco Fusion 2.11.1 or later

It’s extremely useful to complete some work before you begin a Live Hive Plugin deployment. The following tasks and checks will make installation easier and reduce the chance of an unexpected roadblock causing a deployment to stall or fail.

It’s important to make sure that the following elements meet the requirements that are set in the Pre-requisites.

4.1.1. Server OS

One common requirement that runs through much of the deployment is the need for strict consistency between Live Hive Plugin nodes. Your nodes should, as a minimum, be running with the same versions of:

- Hadoop/Manager software.

- Linux.

  - Is the version running a variant, e.g. Oracle Linux is actually RHEL?

- Java.

  - Which version, any other versions installed but not in PATH?
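The version questions above can be answered on each node with a couple of small helpers, so that the output can be compared between nodes. This is a sketch only: the function names os_summary and java_summary are hypothetical, it assumes the distribution provides an /etc/os-release file, and /usr/lib/jvm is a common but not universal location for additional Java installations.

```shell
#!/usr/bin/env bash
# Print "ID|ID_LIKE|VERSION_ID" from an os-release file (default /etc/os-release).
# ID_LIKE shows which distribution family a variant is derived from.
os_summary() {
  local file="${1:-/etc/os-release}"
  (
    # Work in a subshell so the sourced variables do not leak into the caller.
    unset ID ID_LIKE VERSION_ID
    . "$file"
    printf '%s|%s|%s\n' "${ID:-unknown}" "${ID_LIKE:-none}" "${VERSION_ID:-unknown}"
  )
}

# Report the java found in PATH, plus any JVMs under /usr/lib/jvm (assumed path).
java_summary() {
  if command -v java >/dev/null 2>&1; then
    echo "java in PATH: $(command -v java)"
    java -version 2>&1 | head -n 1
  else
    echo "java in PATH: none"
  fi
  ls -d /usr/lib/jvm/* 2>/dev/null || true
}
```

Running `os_summary; java_summary` on every Live Hive Plugin node and comparing the results is one way to spot version drift before installation.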

4.1.2. Hadoop Environment

- Confirm that your Hadoop clusters are working.

- Ensure that, as part of your base Fusion installation, every Fusion node has a "fusion" system user account and group for Fusion services to run under.

| Folder Permissions: When installing the Live Hive proxy or plugin, the permissions of /etc/wandisco/fusion/plugins/hive/ are set to match the Fusion user (FUSION_SERVER_USER) and group (FUSION_SERVER_GROUP), which are set in the Fusion node installation procedure. Permissions on the folder are also set such that processes can write new files to that location as long as the user associated with the process is the FUSION_SERVER_USER or is a member of the FUSION_SERVER_GROUP. No automatic fix for permissioning: Changes to the fusion user/group are not automatically updated in their directories. You need to manually fix these issues, following the above guidelines. |

- Confirm no errors in Hadoop daemon log files.

Live Hive Plugin dependencies

Generally, Live Hive Plugin is reliant on distribution artefacts being available for it to load. Proxy init scripts, plugin cp-extra scripts, etc, load in relevant items from the cluster it is installed on.

For Cloudera deployments, these functions will work as expected because all managed nodes have the CDH Parcel, regardless of the role of the node, thus libs are available. However, on Ambari deployments there is a requirement to load in the needed items.

Hive Metastore Libraries (Ambari)

Check that the Hive Metastore libraries are available on the Live Hive Plugin node. These instructions are dependent on availability of the binaries and any local policies for access and use of the native system packages or access to repositories. The following commands check if the required packages / libraries are already installed:

rpm -qa "hive*-metastore"

dpkg -l "hive*-metastore"

ls /usr/*/current/hive-metastore/lib/

If running any of these commands shows that hive-metastore is installed, no further action should be required. If the packages are not in place, you should run the appropriate packager:

yum install "hive*-metastore"    # can be made non-interactive with -y flag

zypper install "hive*-metastore"    # can be made non-interactive with --non-interactive flag

apt-get install "hive*-metastore"    # can be made non-interactive with -y flag
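The three checks listed earlier in this section can be combined into one script. This is a sketch under stated assumptions: the function names are hypothetical, the directory layout is taken from the ls command above, and the dpkg test assumes that installed packages are listed with an "ii" status prefix.

```shell
#!/usr/bin/env bash
# Print the first hive-metastore lib directory found under ROOT (default /usr)
# and return 0; return 1 if none exists.
# Mirrors: ls /usr/*/current/hive-metastore/lib/
find_metastore_lib_dir() {
  local root="${1:-/usr}" dir
  for dir in "$root"/*/current/hive-metastore/lib; do
    if [ -d "$dir" ]; then
      printf '%s\n' "$dir"
      return 0
    fi
  done
  return 1
}

# Return 0 if a hive-metastore package is known to rpm or dpkg.
metastore_package_installed() {
  if command -v rpm >/dev/null 2>&1 &&
     [ -n "$(rpm -qa 'hive*-metastore' 2>/dev/null)" ]; then
    return 0
  fi
  if command -v dpkg >/dev/null 2>&1 &&
     dpkg -l 'hive*-metastore' 2>/dev/null | grep -q '^ii'; then
    return 0
  fi
  return 1
}
```

If either `metastore_package_installed` or `find_metastore_lib_dir` succeeds, no further action should be needed; otherwise run the install command that matches your package manager.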

4.1.3. Firewalls and Networking

- If iptables or SELinux are running, you must confirm that any rules that are in place will not block Live Hive Plugin communication.

- If any nodes are multi-homed, ensure that you account for this when setting which interfaces will be used during installation.

- Ensure that you have hostname resolution between clusters. If not, add suitable entries to your hosts files.

- Check your network performance to make sure there are no unexpected latency issues or packet loss.

Kerberos Configuration (CDH)

Prepare a Kerberos principal for each Fusion node and place this in a keytab with read/write permissions for user “fusion” on the relevant node.

If a non-superuser principal is used, it also needs sufficient permission to impersonate all users. Setting the permissions is done by adding the following parameters to the Cluster-wide Advanced Configuration Snippet (Safety Valve) for core-site.xml on all clusters:

hadoop.proxyuser.fusion.groups=*

and

hadoop.proxyuser.fusion.hosts=<FQDN of local Fusion nodes>

Kerberos Configuration (HDP)

Under HDP, these properties are added to: HDFS Config --> Advanced Core-site

| For more information, see Secure Impersonation. |
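As a sketch of the impersonation settings described above, the two properties can be generated for a given set of Fusion nodes. Hadoop accepts a comma-separated list for the hosts value; the function name proxyuser_properties and the host names in the example are hypothetical placeholders, not values from this guide.

```shell
#!/usr/bin/env bash
# Print the two impersonation properties for USER, joining the given
# Fusion node FQDNs into a comma-separated hosts value.
proxyuser_properties() {
  local user="$1"
  shift
  local hosts
  # "$*" joins the remaining arguments with the first character of IFS.
  hosts="$(IFS=,; echo "$*")"
  printf 'hadoop.proxyuser.%s.groups=*\n' "$user"
  printf 'hadoop.proxyuser.%s.hosts=%s\n' "$user" "$hosts"
}
```

For example, `proxyuser_properties fusion node1.example.com node2.example.com` prints the groups and hosts properties ready to be entered in the core-site configuration for your platform.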

- (HDP) Set up the KDC server and create admin user and database.

- (HDP) Create principals for Hadoop in Kerberos database.

- Edit Hadoop config files to reference Keytabs and principals.

- Restart Cluster.

SSL

To enable SSL encrypted communication between Fusion nodes (optional), Java KeyStore and TrustStore files must be generated or available for all Live Hive Plugin nodes.

| We don’t recommend using self-signed certificates, except for proof-of-concept/testing. |

Confirm which components will need SSL encryption, e.g.

- Live Hive Plugin server ←→ Live Hive Plugin server

- server, IHC ←→ Live Hive Plugin server

- client ←→ Live Hive Plugin server

- browser ←→ UI server

Server utilisation

- Will Live Hive Plugin be running on a dedicated server or sharing resources with other applications?

- Check that you will be running with sufficient disk space. Will you be installing to non-default paths?

- Use "ulimit -a" to check that the limits on open files and processes are sufficient.

- Use netstat to review the connections being made to the server. Verify that any ports required by Live Hive Plugin are not in use.

- Consider using SCP to push large files across the WAN to ensure that no data transfer problems occur.
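The ulimit and netstat checks above can be scripted as follows. This is a sketch only: the function names are hypothetical, the minimum limit and the port number are values you supply for your own deployment, and the netstat parsing assumes the usual Linux layout in which the fourth column holds the local address.

```shell
#!/usr/bin/env bash
# Return 0 if the soft open-file limit is at least MIN (or is unlimited).
open_files_limit_ok() {
  local min="$1" current
  current="$(ulimit -n)"
  [ "$current" = "unlimited" ] || [ "$current" -ge "$min" ]
}

# Return 0 if something is already listening on TCP PORT according to netstat.
port_in_use() {
  local port="$1"
  netstat -tln 2>/dev/null |
    awk -v p=":$port" '$4 ~ (p "$") { found = 1 } END { exit !found }'
}
```

A typical use would be `open_files_limit_ok <minimum> || echo "raise the open files limit"` and `port_in_use <port> && echo "port already taken"`, substituting the values required by your installation.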

4.2. Installation

4.2.1. Installer Options

The following section provides additional information about running the Live Hive installer.

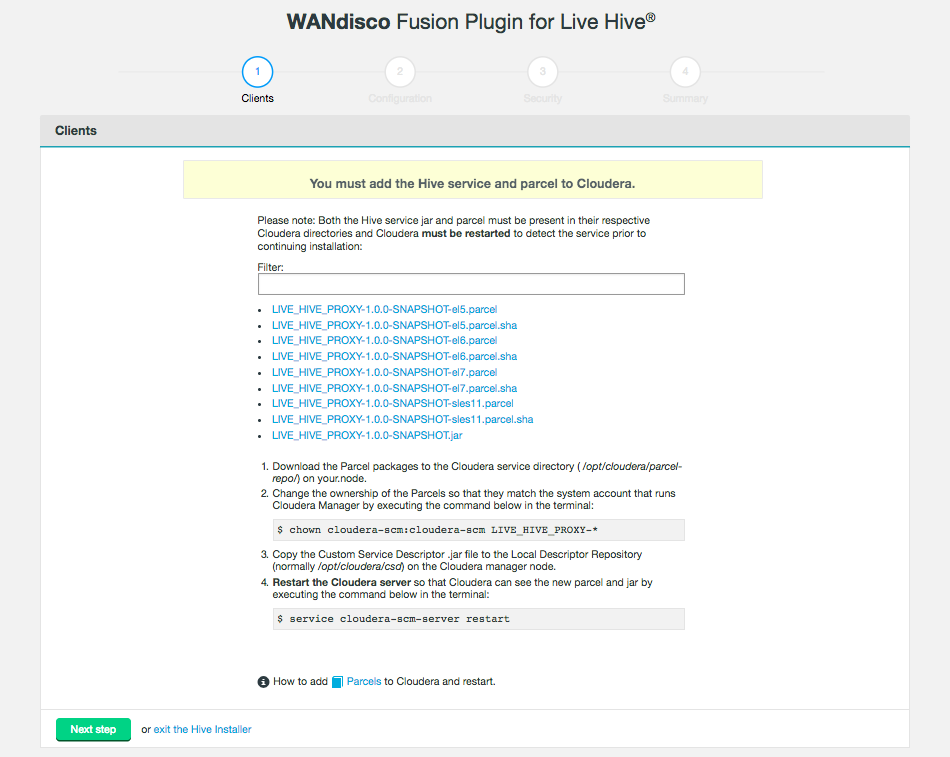

Installation files

The Client step of the installer provides a list of available parcel/jar files for you to choose from. You need to select the files that correspond with your platform.

- LIVE_HIVE_PROXY-2.11.0-el6.parcel

- LIVE_HIVE_PROXY-2.11.0-el6.parcel.sha

- LIVE_HIVE_PROXY-2.11.0.jar

Obtain the files so that you can distribute them to the appropriate hosts in your deployment for WANdisco Fusion. The parcel and .sha files need to be saved to your /cloudera/parcels directory, while the .jar file must be copied to the Local Descriptor Repository; the default path is /opt/cloudera/csd.

Installer Help

The bundled installer provides some additional functionality that lets you install selected components, which may be useful if you need to restore or replace a specific file. To review the options, run the installer with the --help option, i.e.

[user@gmart01-vm1 ~]# ./live-hive-installer.sh --help

Verifying archive integrity... All good.

Uncompressing WANdisco Hive Live.......................

This usage information describes the options of the embedded installer script. Further help, if running directly from the installer is available using '--help'. The following options should be specified without a leading '-' or '--'. Also note that the component installation control option effects are applied in the order provided.

Installation options

General options:

help Print this message and exit

Component installation control:

only-fusion-ui-client-plugin Only install the plugin's fusion-ui-client component

only-fusion-ui-server-plugin Only install the plugin's fusion-ui-server component

only-fusion-server-plugin Only install the plugin's fusion-server component

only-user-installable-resources Only install the plugin's additional resources

skip-fusion-ui-client-plugin Do not install the plugin's fusion-ui-client component

skip-fusion-ui-server-plugin Do not install the plugin's fusion-ui-server component

skip-fusion-server-plugin Do not install the plugin's fusion-server component

skip-user-installable-resources Do not install the plugin's additional resources

[user@docs01-vm1 tmp]#

Standard help parameters

[user@docs01-vm1 tmp]# ./live-hive-installer.sh --help

Makeself version 2.1.5

1) Getting help or info about ./live-hive-installer.sh :

./live-hive-installer.sh --help Print this message

./live-hive-installer.sh --info Print embedded info : title, default target directory, embedded script ...

./live-hive-installer.sh --lsm Print embedded lsm entry (or no LSM)

./live-hive-installer.sh --list Print the list of files in the archive

./live-hive-installer.sh --check Checks integrity of the archive

2) Running ./live-hive-installer.sh :

./live-hive-installer.sh [options] [--] [additional arguments to embedded script]

with following options (in that order)

--confirm Ask before running embedded script

--noexec Do not run embedded script

--keep Do not erase target directory after running the embedded script

--nox11 Do not spawn an xterm

--nochown Do not give the extracted files to the current user

--target NewDirectory Extract in NewDirectory

--tar arg1 [arg2 ...] Access the contents of the archive through the tar command

-- Following arguments will be passed to the embedded script

3) Environment:

LOG_FILE Installer messages will be logged to the specified file

Silent installation

Instead of installing through the UI, you can install using the silent (scripted) installer. These steps need to be repeated on each node you want the Live Hive plugin installed on.

-

Obtain the Live Hive Plugin installer from WANdisco and open a terminal session on your WANdisco Fusion node.

-

Ensure the downloaded file is executable e.g.

# chmod +x live-hive-installer.sh

-

Run the Live Hive Plugin installer e.g.

# sudo ./live-hive-installer.sh

-

Now place the parcels or stacks in the relevant directory. They can be found in the directory /opt/wandisco/fusion-ui-server/ui-client-platform/downloads/core_plugins/live-hive-<version>. The steps are the same as in the UI installer. For more information see Parcels if you are using Cloudera, or Stacks if using Ambari. Ensure that you restart your Cloudera or Ambari server.

-

Now edit the live_hive_silent_installer.properties file, located in /opt/wandisco/fusion-ui-server/plugins/live-hive-ui-server-<your version>/properties.

The following fields are required:

-

live.hive.proxy.thrift.host - the hostname the live hive proxy server will connect to.

-

live.hive.proxy.keytab - the keytab the live hive proxy will use.

-

live.hive.proxy.principal - the principal the live hive proxy will use. This must be in the form user/HOST@REALM.

-

metastore.service.principal - the user portion of the vanilla metastore’s service principal e.g. user. Note this may not be the same as the user/HOST@REALM entered above.

-

metastore.service.host - the host portion of the vanilla metastore’s service principal e.g. HOST@REALM. Note this may not be the same as the user/HOST@REALM entered above.

-

remote.thrift.host - the original Hive Metastore thrift host and port. This must be in the form host:port.

If vanilla metastore HA is configured, this should be a comma separated list of all existing metastore host:ports.

Optional fields:

-

live.hive.proxy.thrift.port - the port the live hive proxy server will connect to. Default=9090.

-

plugin.hive.metastore.heap.size - the maximum Java heap size of the metastore. Default = 1GB.

-

To start the silent installation, go to /opt/wandisco/fusion-ui-server/plugins/live-hive-ui-server-<version> and run:

# ./scripts/silent_installer_live_hive.sh ./properties/live-hive-proxy-silent-installer.properties

-

Repeat these steps on each node.

-

Once the plugin is installed on all relevant nodes, activate the plugin.
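Before starting a silent installation, it can help to confirm that every required field listed above has a value. The following is a minimal sketch, not part of the installer: the function name check_required_properties is hypothetical, and it assumes plain "key=value" entries, one per line.

```shell
#!/usr/bin/env bash
# Prints each required key that has no non-empty "key=value" entry in the
# given properties file. Succeeds only if nothing is missing.
# Assumption: entries use the plain "key=value" form, one per line.
check_required_properties() {
  local file="$1" key missing=0
  shift
  for key in "$@"; do
    if ! awk -v k="$key" '
      index($0, "=") > 0 {
        name = substr($0, 1, index($0, "=") - 1)
        value = substr($0, index($0, "=") + 1)
        gsub(/^[ \t]+|[ \t]+$/, "", name)
        gsub(/^[ \t]+|[ \t]+$/, "", value)
        if (name == k && value != "") found = 1
      }
      END { exit !found }' "$file"; then
      echo "missing or empty: $key"
      missing=1
    fi
  done
  return "$missing"
}

# Example usage with the required fields from this section:
#   check_required_properties live_hive_silent_installer.properties \
#     live.hive.proxy.thrift.host live.hive.proxy.keytab \
#     live.hive.proxy.principal metastore.service.principal \
#     metastore.service.host remote.thrift.host
```

Commented-out lines are treated as missing, because the text before the "=" then no longer equals the key name.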

4.2.2. Cloudera-based steps

Run the installer

Obtain the Live Hive Plugin installer from WANdisco. Open a terminal session on your WANdisco Fusion node and run the installer as follows:

-

Run the Live Hive Plugin installer on each host required:

# sudo ./live-hive-installer.sh

-

The installer will check for and install the components necessary for completing the installation:

[user@docs-vm tmp]# sudo ./live-hive-installer.sh

Verifying archive integrity... All good.

Uncompressing WANdisco Live Hive.......................

You are about to install WANdisco Live Hive version 1.2.0

Do you want to continue with the installation? (Y/n) y

wd-live-hive-plugin-1.2.0.tar.gz ... Done

live-hive-fusion-core-plugin-1.2.0-1079.noarch.rpm ... Done

storing user packages in '/opt/wandisco/fusion-ui-server/ui-client-platform/downloads/core_plugins/live-hive' ... Done

live-hive-ui-server-1.0.0-dist.tar.gz ... Done

All requested components installed.

Go to your WANDisco Fusion UI Server to complete configuration.

| Installer options: View the Installer Options section for details on additional installer functions, including the ability to install selected components. |

| IMPORTANT: Once you run this installer script, do not restart the Fusion node until you have fully completed the installation steps (up to activation) for this node. |

Configure the Live Hive Plugin

-

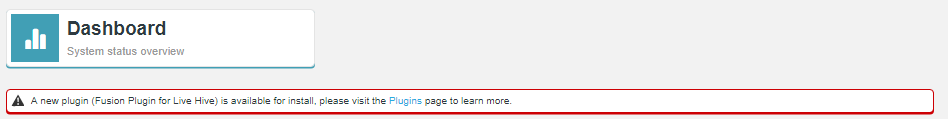

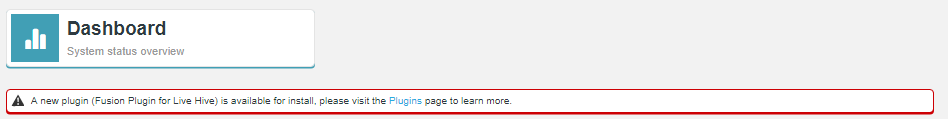

Open a session to your WANdisco Fusion UI. You will see a message that confirms that the Live Hive Plugin has been detected. Click on the Plugins link to review the Plugins page.

Figure 3. Live Hive Plugin Architecture

-

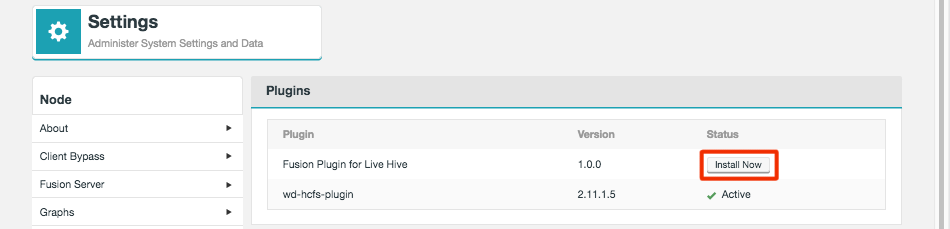

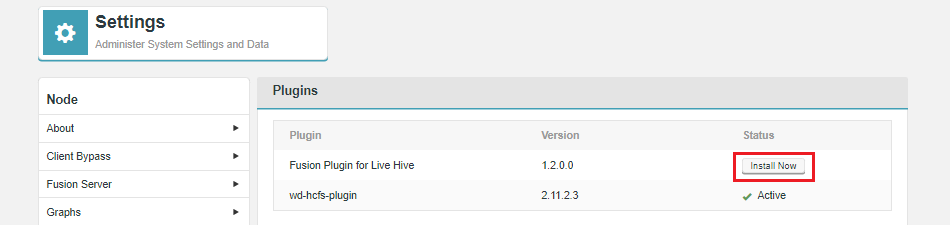

The plugin Fusion Plugin for Live Hive now appears on the list. Click the button labelled Install Now.

Figure 4. Live Hive Plugin Architecture

-

The installation process runs through four steps that handle the placement of parcel files onto your Cloudera Manager server.

Figure 5. Live Hive Plugin Architecture

-

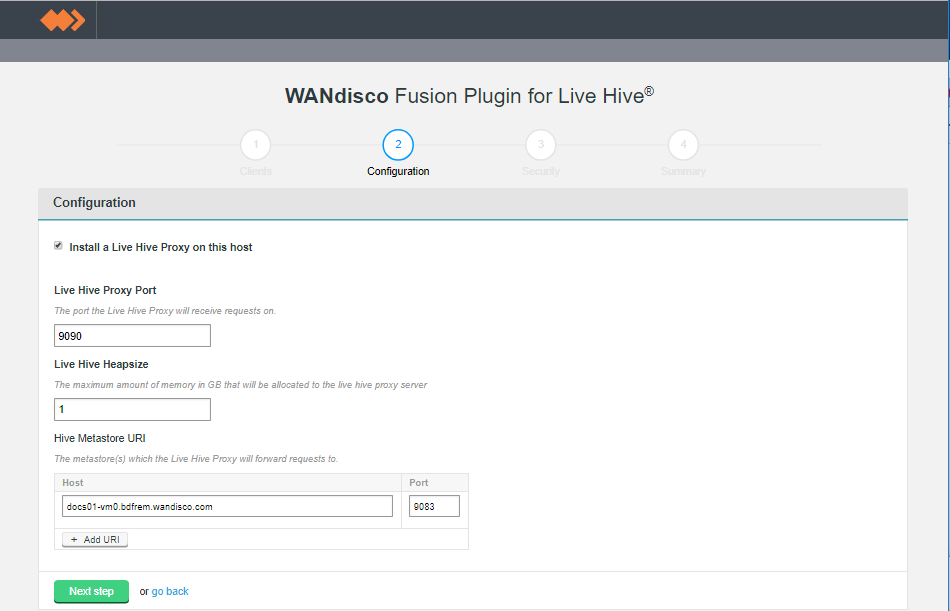

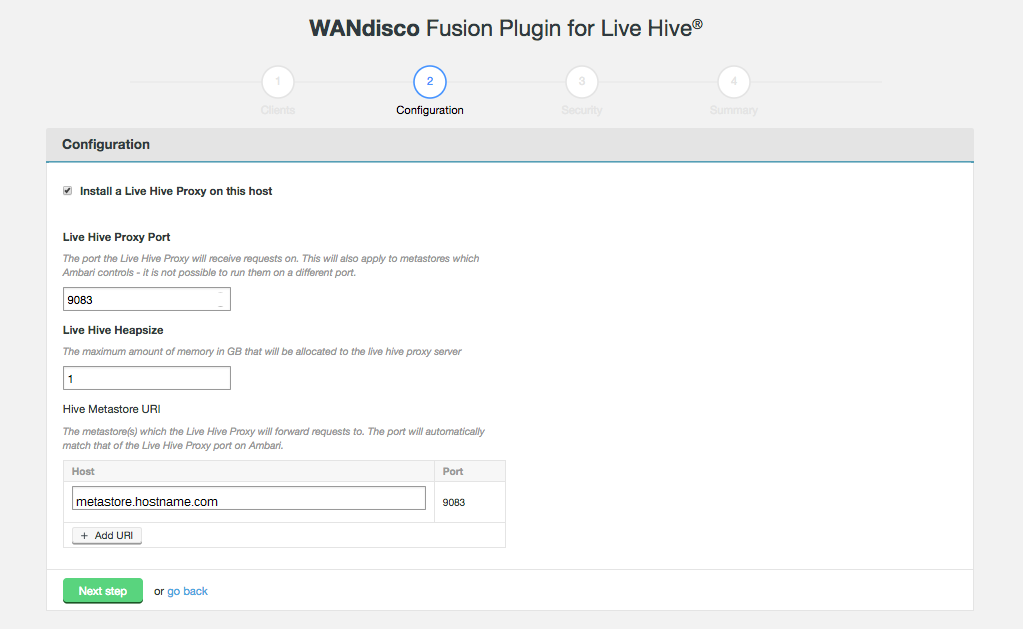

The second installer screen handles Configuration. The first section validates existing configuration to ensure that Hive is set up correctly. Click the Validate button.

Figure 7. Live Hive Plugin installation - validation (Screen 2)

- Install a Live Hive Proxy on this host

-

The installer lets you choose not to install the Live Hive proxy onto this node. While you must install Live Hive on all nodes, if you don’t wish to use a node to store hive metadata, you can choose to exclude the Live Hive proxy from the installation. If you do this, the node still plays its part in transaction coordination, without keeping a local copy of the replicated data.

If you deselect Live Hive proxy on ALL nodes, then replication will not work. You must install at least 1 proxy in each zone. Should you have a cluster that doesn’t have a single Live Hive proxy, you will need to perform the following procedure to enable Hive metadata replication.

- Live Hive Proxy Port

-

The port used by the Live Hive proxy. Default: 9090

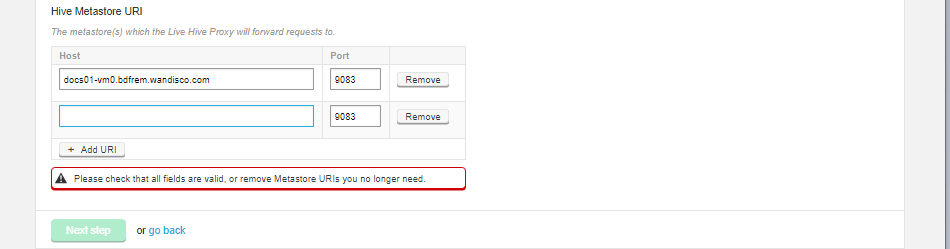

- Hive Metastore URI

-

The metastore(s) which the Live Hive proxy will send requests to.

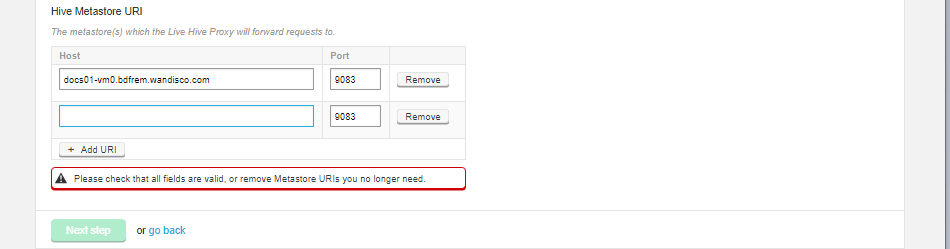

Add additional URIs by clicking the + Add URI button and entering additional URI / port information. If you add additional URIs, you must complete the necessary information or remove them. You cannot have an incomplete line.

Figure 8. Live Hive Plugin installation - Additional URIs

Click on Next step to continue.

-

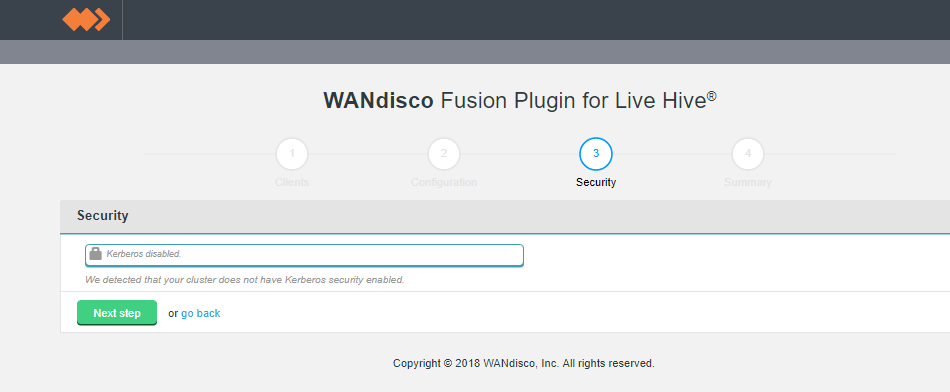

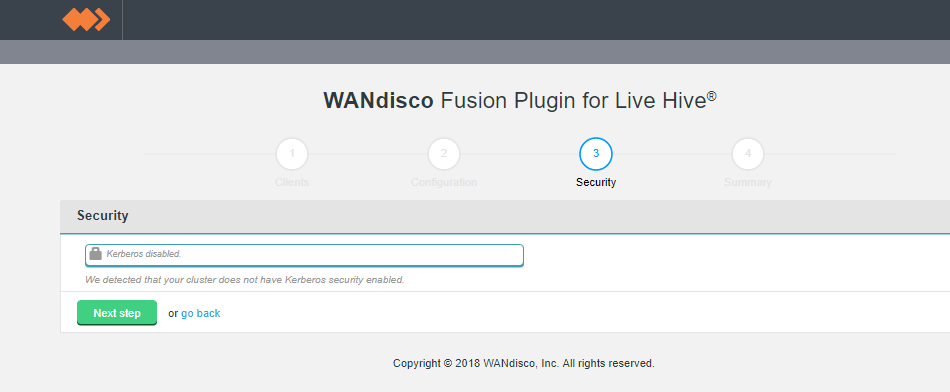

Step 3 of the installation covers security. If you have not enabled Kerberos on your cluster, you will pass through this step without adding any additional configuration.

Figure 9. Live Hive Plugin installation - security disabled (Screen 3)

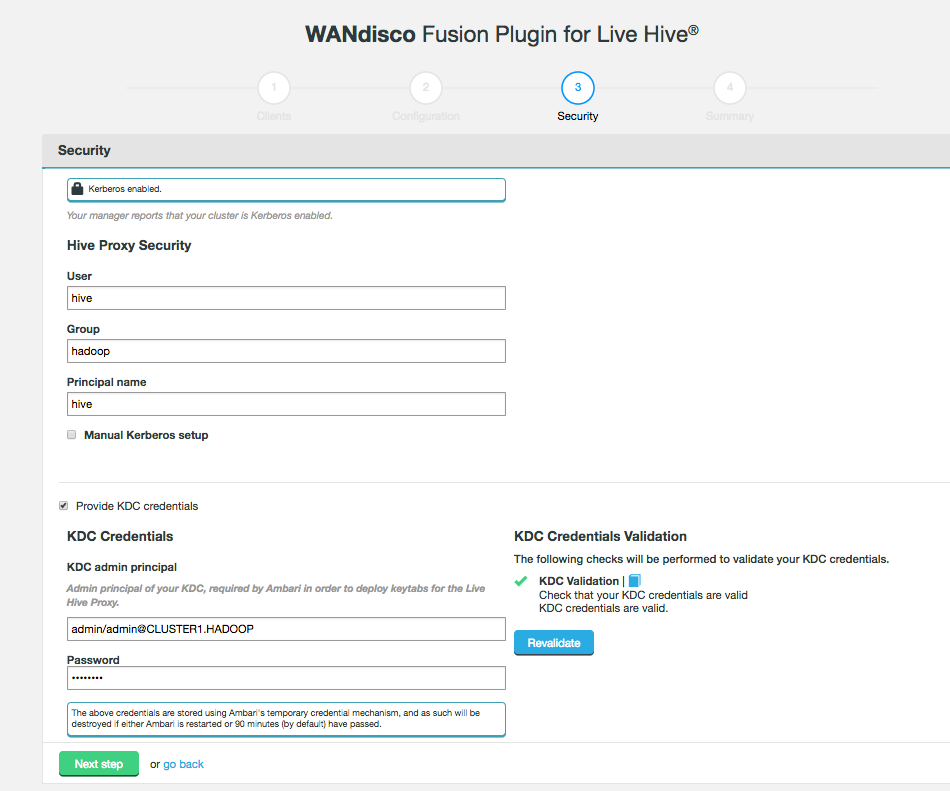

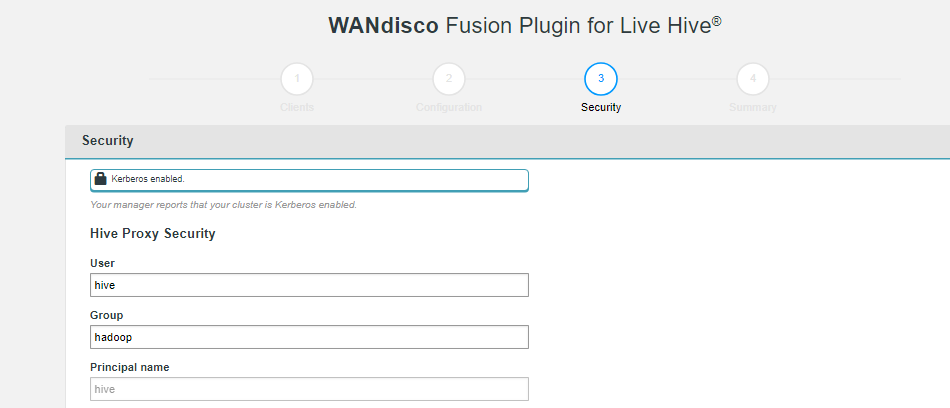

Figure 9. Live Hive Plugin installation - security disabled (Screen 3)If you enable Kerberos, you will need to supply your Kerberos credentials.

Figure 10. Live Hive Plugin installation - security enabled (Screen 3)

Figure 10. Live Hive Plugin installation - security enabled (Screen 3)Hive Proxy Security

- User

-

System user used for Hive Proxy

- Group

-

System group for secure access

- Principal name

-

The name of the Kerberos principal used for access.

Ensure that you use the same principal as is used for the Hive stack. If you use a different principal then Live Hive will not work due to basic security constraints.

- Manual Kerberos setup

-

Tick the manual Kerberos setup

- Provide KDC credentials

-

Tick the checkbox to configure KDC credentials

KDC Credentials

If Ambari is managing the cluster’s Kerberos implementation, you must provide the following KDC credentials or the plugin installation will fail.

- KDC admin principal

-

Admin principal of your KDC, required by the Hadoop manager in order to deploy keytabs for the Live Hive Proxy.

- Password

-

Password for the KDC admin principal.

The above credentials are stored using the Hadoop Manager’s temporary credential mechanism, and as such will be destroyed if either the Hadoop manager is restarted or 90 minutes (by default) have passed.

- Keytab file path

-

The installer now validates that there is read access to the keytab that you specify here.

- Metastore Service Principal Name

-

The installer validates that there are valid principals in the keytab.

- Metastore Service Hostname

-

Enter the hostname of your Hive Metastore service.

-

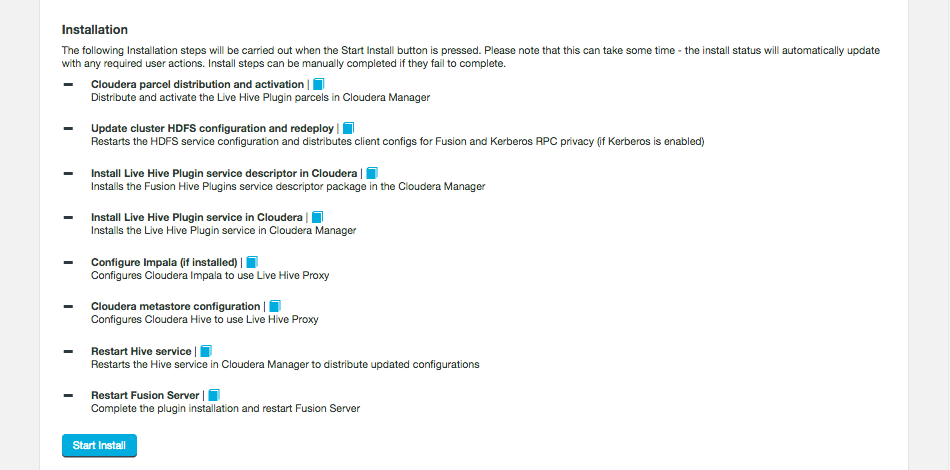

The final step is to complete the installation. Click Start Install.

Figure 11. Live Hive Plugin installation summary - screen 4

The following steps are carried out:

- Cloudera parcel distribution and activation

-

Distribute and activate the Fusion Hive Plugin parcels in Cloudera Manager

- Update cluster HDFS configuration and redeploy

-

Restarts the HDFS service configuration and distributes client configs for Fusion and Kerberos RPC privacy (if Kerberos is enabled)

- Install Fusion Hive Plugin service descriptor in Cloudera

-

Installs the Fusion Hive Plugin service in Cloudera Manager

- Configure Impala (if installed)

-

Configures Cloudera Impala to use Fusion Hive Plugin proxy

- Configure Hive

-

Configure Cloudera Hive to use Fusion Hive Plugin proxy

- Restart Hive service

-

Restarts the Hive service in Cloudera Manager to distribute updated configurations

- Restart Fusion Server

-

Complete the plugin installation and restart Fusion Server

-

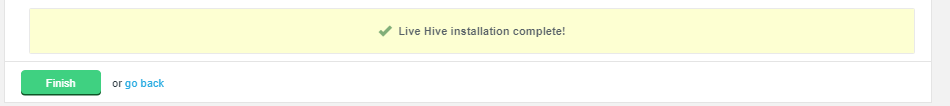

The installation will complete with a message "Live Hive installation complete!"

Figure 12. Live Hive Plugin installation - Completion

Click Finish to close the Plugin installer screens.

Now advance to the Activation steps.

4.2.3. Ambari-based steps

Run the installer

Obtain the Live Hive Plugin installer from WANdisco. Open a terminal session on your WANdisco Fusion node and run the installer as follows:

-

Run the Live Hive Plugin installer on each host required:

# sudo ./live-hive-installer.sh

-

The installer will check for and install the components necessary for completing the installation:

[user@docs-vm tmp]# sudo ./live-hive-installer.sh

Verifying archive integrity... All good.

Uncompressing WANdisco Live Hive.......................

You are about to install WANdisco Live Hive version 1.2.0

Do you want to continue with the installation? (Y/n) y

wd-live-hive-plugin-1.2.0.tar.gz ... Done

live-hive-fusion-core-plugin-1.2.0.noarch.rpm ... Done

storing user packages in '/opt/wandisco/fusion-ui-server/ui-client-platform/downloads/core_plugins/live-hive' ... Done

live-hive-ui-server-1.0.0-dist.tar.gz ... Done

All requested components installed.

Go to your WANDisco Fusion UI Server to complete configuration.

| IMPORTANT: Once you run this installer script, do not restart the Fusion node until you have fully completed the installation steps for this node. |

Configure the Live Hive Plugin

-

Open a session to your WANdisco Fusion UI. You will see a message that confirms that the Live Hive Plugin has been detected. Click on the Plugins link to review the Plugins page.

Figure 13. Live Hive Plugin Architecture

-

The plugin live-hive-plugin now appears on the list. Click the button labelled Install Now.

Figure 14. Live Hive Plugin Parcel installation

-

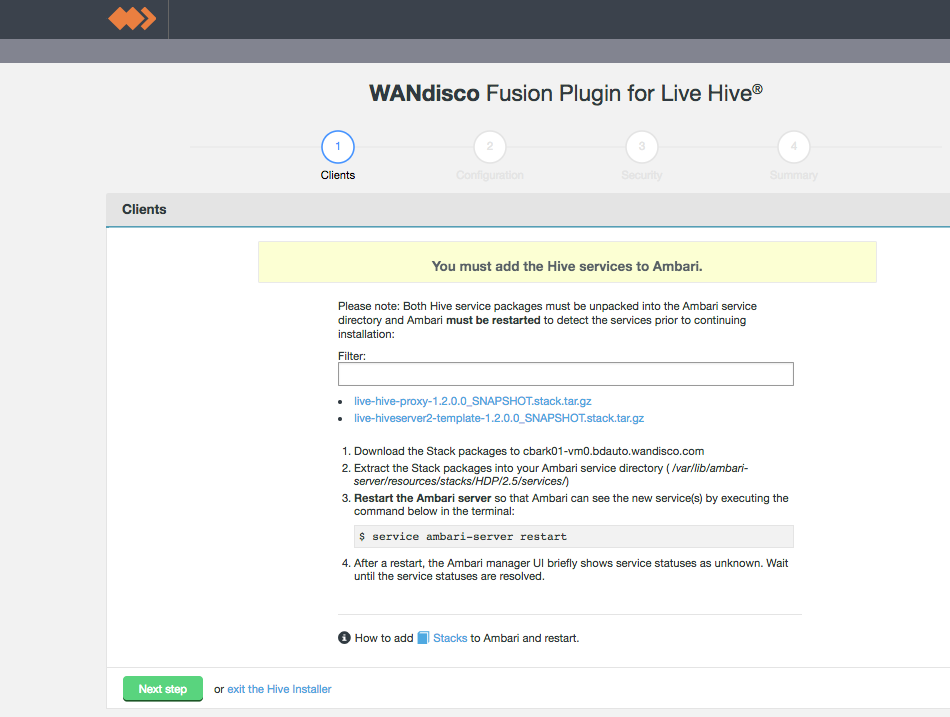

The installation process runs through steps that handle the placement of stack files onto your Ambari server.

Figure 15. Live Hive Plugin installation - Clients (Step 1)

Stacks

Stacks need to be placed in the correct directory to make them available to the manager. To do this:

-

Download the service from the installer client download panel

-

The services are gz files that will expand to the directories /LIVE_HIVE_PROXY and /LIVE_HIVESERVER2_TEMPLATE.

-

For HDP, place this directory in /var/lib/ambari-server/resources/stacks/HDP/<version>/services.

File rename needed

Before continuing, the file live-hive-proxy-env.xml needs to be renamed. This is an issue in 1.2.0 only.

In /var/lib/ambari-server/resources/stacks/HDP/<version>/services/LIVE_HIVE_PROXY/configuration/ rename the file live-hive-proxy-env.xml to live-hive-env.xml.

-

Restart the Ambari server.

Note: If using centos6/rhel6 we recommend using the following command to restart:

initctl restart ambari-server

-

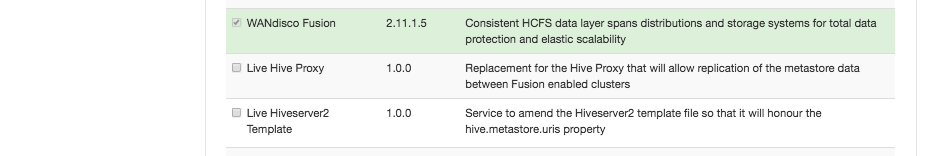

Check on your Ambari manager that the services are present, e.g.

Actions > Add Service > Check the list.

Figure 16. Stacks present
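The file rename described in the stack placement steps above can be scripted so that it is safe to repeat. This is a minimal sketch: the function name rename_proxy_env is hypothetical, and it assumes the LIVE_HIVE_PROXY service directory has already been placed under the stack's services directory.

```shell
#!/usr/bin/env bash
# Renames live-hive-proxy-env.xml to live-hive-env.xml inside the
# LIVE_HIVE_PROXY service under the given stack services directory.
# Does nothing if the file has already been renamed.
rename_proxy_env() {
  local conf_dir="$1/LIVE_HIVE_PROXY/configuration"
  if [ -f "$conf_dir/live-hive-proxy-env.xml" ]; then
    mv "$conf_dir/live-hive-proxy-env.xml" "$conf_dir/live-hive-env.xml"
  fi
  # Report success only if the renamed file is now in place.
  [ -f "$conf_dir/live-hive-env.xml" ]
}

# Example usage (substitute your HDP version):
#   rename_proxy_env /var/lib/ambari-server/resources/stacks/HDP/<version>/services
```

Restart the Ambari server afterwards, as described in the steps above.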

-

The second installer screen handles Configuration.

Figure 17. Live Hive Plugin installation - Configuration (Step 2)

- Install a Live Hive Proxy on this host

-

The installer lets you choose not to install the Live Hive proxy onto this node. While you must install Live Hive on all nodes, if you don’t wish to use a node to store hive metadata, you can choose to exclude the Live Hive proxy from the installation. If you do this, the node still plays its part in transaction coordination, without keeping a local copy of the replicated data.

If you deselect Live Hive proxy on ALL nodes, then replication will not work. You must install at least 1 proxy in each zone. Should you have a cluster that doesn’t have a single Live Hive proxy, you will need to perform the following procedure to enable Hive metadata replication.

- Live Hive Proxy Port

-

The port used by the Live Hive proxy. Default: 9090

- Hive Metastore URI

-

The metastore(s) which the Live Hive proxy will send requests to.

Add additional URIs by clicking the + Add URI button and entering additional URI / port information. If you add additional URIs, you must complete the necessary information or remove them. You cannot have an incomplete line.

Figure 18. Live Hive Plugin installation - Additional URIs

Click on Next step to continue.

-

Step 3 of the installation covers security. If you have not enabled Kerberos on your cluster, you will pass through this step without adding any additional configuration.

Figure 19. Live Hive Plugin installation - security disabled (Step 3)

If you enable Kerberos, you will need to supply your Kerberos credentials.

Figure 20. Live Hive Plugin installation - security enabled (Step 3)

- Hive Proxy Security

-

Kerberos settings for the Hive Proxy.

- User

-

The system user for Hive.

- Group

-

The system group for Hive.

- Principal name

-

The Principal name for the Hive user.

Ensure that you use the same principal as is used for the Hive stack. If you use a different principal then Live Hive will not work due to basic security constraints.

- Manual Kerberos setup (checkbox)

-

Tick this checkbox to provide the Kerberos details for Hive Proxy Kerberos.

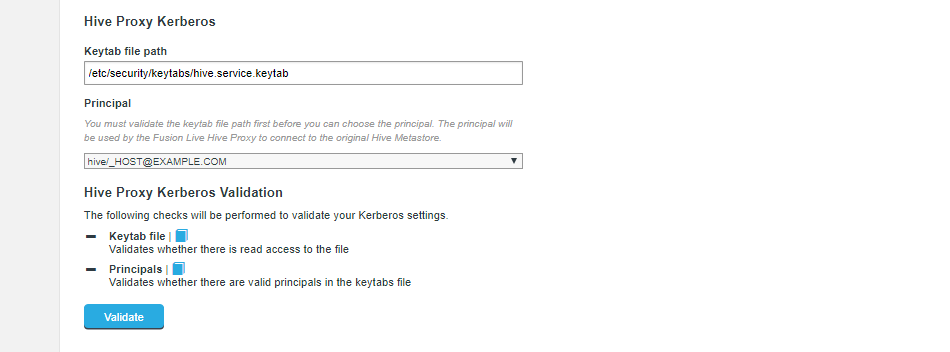

- Hive Proxy Kerberos

-

Figure 21. Live Hive Plugin installation - security enabled (Step 3)

- Keytab file path

-

The installer now validates that there is read access to the keytab that you specify here.

- Principal

-

Select from the available principals. This is the principal that will be used to connect to the original Hive metastore. Validation checks that the principal is valid.

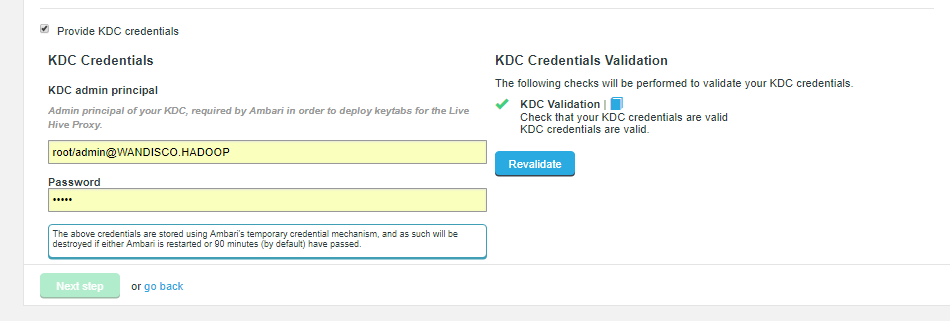

- Provide KDC credentials (Checkbox)

-

Tick the checkbox to provide details for a KDC’s admin principal and password.

Figure 22. Live Hive Plugin installation - security enabled (Step 3)

KDC Credentials

If Ambari is managing the cluster’s Kerberos implementation, you must provide the following KDC credentials or the plugin installation will fail.

- KDC admin principal

-

Admin principal of your KDC, required by the Hadoop manager in order to deploy keytabs for the Live Hive Plugin Proxy.

- Password

-

Password for the KDC admin principal.

The above credentials are stored using the Hadoop Manager’s temporary credential mechanism, and as such will be destroyed if either the Hadoop manager is restarted or 90 minutes (by default) have passed.

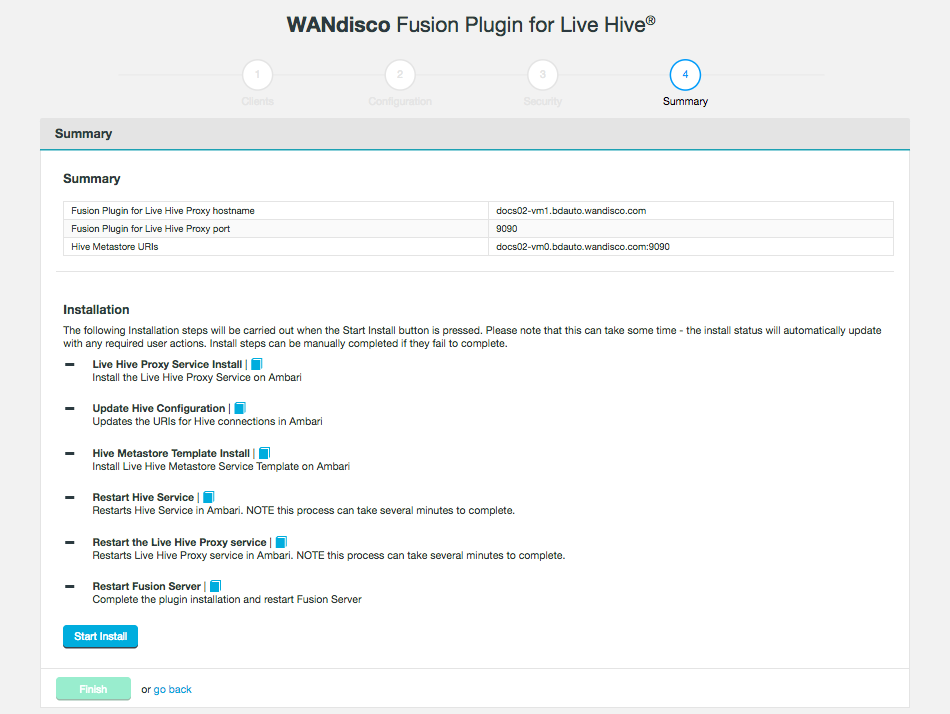

-

The final step is to complete the installation. Click Start Install.

Figure 23. Live Hive Plugin installation summary - (Step 4)

The following steps are carried out:

- Live Hive Proxy Service Install

-

Install the Live Hive Proxy Service on Ambari.

- Update Hive Configuration

-

Updates the URIs for Hive connections in Ambari.

- Hive Metastore Template Install

-

Install Live Hive Metastore Service Template on Ambari.

- Restart Hive Service

-

Restarts Hive Service in Ambari. Note: this process can take several minutes to complete.

- Restart Live Hive Proxy Service

-

Restarts Live Hive Proxy Service in Ambari. Note: this process can take several minutes to complete.

- Restart Fusion Server

-

Complete the plugin installation and restart Fusion Server.

-

The installation will complete with a message "Live Hive installation complete"

Figure 24. Live Hive Plugin installation - Completion

Click Finish to close the Plugin installer screens. You must now activate the plugin.

Silent installation

Instead of installing through the UI, you can install using the silent (scripted) installer. These steps need to be repeated on each node you want the Live Hive plugin installed on.

-

Obtain the Live Hive Plugin installer from WANdisco and open a terminal session on your WANdisco Fusion node.

-

Ensure the downloaded file is executable e.g.

# chmod +x live-hive-installer.sh

-

Run the Live Hive Plugin installer e.g.

# sudo ./live-hive-installer.sh

-

Now place the parcels or stacks in the relevant directory. They can be found in the directory /opt/wandisco/fusion-ui-server/ui-client-platform/downloads/core_plugins/live-hive-<version>. The steps are the same as in the UI installer. For more information see Parcels if you are using Cloudera, or Stacks if using Ambari. Ensure that you restart your Cloudera or Ambari server.

-

Now edit the live_hive_silent_installer.properties file, located in /opt/wandisco/fusion-ui-server/plugins/live-hive-ui-server-<your version>/properties.

Required fields:

-

live.hive.deploy.proxy - controls whether the installation includes the Live Hive proxy. Default is true. Only enter false if at least one other Live Hive Plugin node in the zone is going to run the proxy.

-

live.hive.proxy.thrift.host - the hostname the live hive proxy server will bind to.

-

live.hive.proxy.keytab - the keytab and subsequent principal that the live hive proxy will use.

-

live.hive.proxy.principal - the principal the live hive proxy will use. This must be in the form user/HOST@REALM.

-

metastore.service.principal - the user portion of the vanilla metastore’s service principal e.g. user. Note this may not be the same as the user/HOST@REALM entered above.

-

metastore.service.host - the host portion of the vanilla metastore’s service principal e.g. HOST@REALM. Note this may not be the same as the user/HOST@REALM entered above.

-

live.hive.proxy.remote.thrift.uris - the original Hive Metastore thrift host and port. This must be in the form host:port.

If vanilla metastore HA is configured, this should be a comma separated list of all existing metastore host:ports.

Optional fields:

-

live.hive.proxy.thrift.port - the port the live hive proxy server will connect to. Default=9090.

-

plugin.hive.metastore.heap.size - maximum Java heap size of the metastore (Gigabytes). Default = 1

Kerberized, using the cluster manager to generate keytab and principals:

-

live.hive.proxy.kerberos.user - default is hive

-

live.hive.proxy.kerberos.group - default is hadoop

-

live.hive.proxy.kerberos.principal.short.name - default is hive

-

live.hive.proxy.kadmin.principal - no default, KDC principal

-

live.hive.proxy.kadmin.password - no default, KDC password

Kerberized, and you don’t want Live Hive Plugin to use the cluster manager for keytab/principal generation:

-

live.hive.kerberos.manual.setup - Set to true

-

live.hive.proxy.keytab - The keytab and subsequent principal that the live hive proxy will use

-

live.hive.proxy.principal - Principal must take the form of user/HOST@REALM

-

To start the silent installation, go to /opt/wandisco/fusion-ui-server/plugins/live-hive-ui-server-<version> and run:

# ./scripts/silent_installer_live_hive.sh ./properties/live-hive-proxy-silent-installer.properties

Repeat these steps on each node.

-

Once the plugin is installed on all relevant nodes, activate the plugin.
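Two of the fields above have a fixed shape: the principal must take the form user/HOST@REALM, and the remote thrift URIs must be a comma-separated list of host:port entries. The sketch below checks those shapes before the silent installer is run. The function names are hypothetical, and the checks cover syntax only. They do not confirm that the principal or hosts exist.

```shell
#!/usr/bin/env bash
# Succeeds if the value has the form user/HOST@REALM.
valid_principal() {
  local re='^[^/@[:space:]]+/[^/@[:space:]]+@[^/@[:space:]]+$'
  [[ "$1" =~ $re ]]
}

# Succeeds if the value is a comma-separated list of host:port entries.
valid_thrift_uris() {
  local re='^[^:,[:space:]]+:[0-9]+(,[^:,[:space:]]+:[0-9]+)*$'
  [[ "$1" =~ $re ]]
}

# Example usage (example values only):
#   valid_principal "hive/host01.example.com@EXAMPLE.COM" || echo "bad principal"
#   valid_thrift_uris "ms1.example.com:9083,ms2.example.com:9083" || echo "bad URI list"
```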

4.3. Activate Live Hive Plugin

After completing the installation you will need to activate Live Hive Plugin before you can use it. Use the following steps to complete the plugin activation.

-

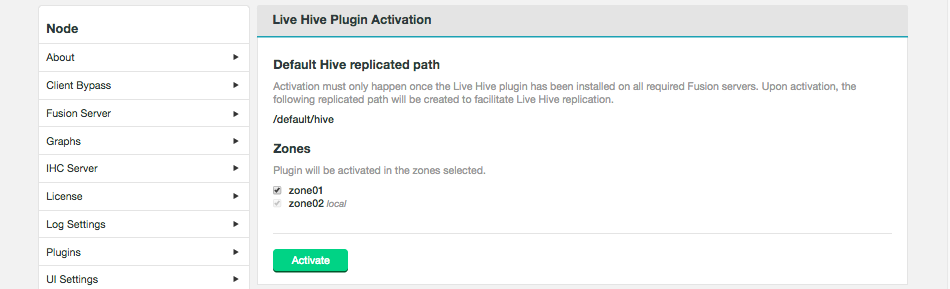

Log into the WANdisco Fusion UI. On the Settings tab, go to the Live Hive link on the side menu. The Live Hive Plugin Activation screen will appear.

Figure 25. Live Hive Plugin activation - Start

| Ensure that your clusters have been inducted before activating. The plugin will not work if you activate before completing the induction of all applicable zones. |

Tick the checkboxes that correspond with the zones that you want to replicate Hive metadata between, then click Activate.

-

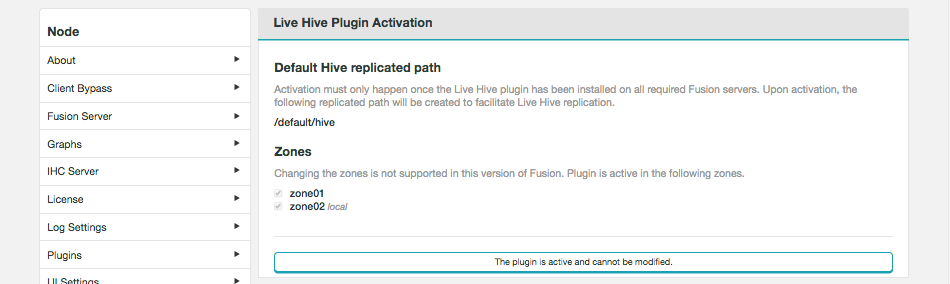

A message will appear at the bottom of the screen that confirms that the plugin is active.

Figure 26. Live Hive Plugin activation - Completion

The plugin is active and cannot be modified.

You can’t change membership once activated. See Membership changes.

4.4. Validation

Once an installation is completed you should verify that hive metadata replication is working as expected, before entering into a production phase.

4.4.1. Hive Rule Creation

-

Log in to the Live Hive Plugin UI in a browser.

-

Click the Replication tab, then click Create.

-

For the Hive option, enter:

Database name: test_01

Table name: test_table.*

| In Live Hive 1.2, regular expression rules were dropped in favor of native Hive pattern rules. See Hive LanguageManual. |

-

Click Create rule.

-

Create Hive data, e.g. a partitioned table. Log in to beeline, e.g.

CDH with Kerberos

kinit as your user (avoid hdfs and hive - they may be blacklisted), then

beeline -u 'jdbc:hive2://<Hive server>:10000/;transportMode=binary;principal=hive/_HOST@<KDC.DOMAIN>'

HDP with Kerberos

kinit as your user, then

beeline -u 'jdbc:hive2://<Hive server>:10000/;transportMode=binary;principal=hive/_HOST@<KDC.DOMAIN>' <user> <password>

CDH with Sentry

To grant the hive group the appropriate permissions, run the commands:

beeline>CREATE ROLE admin;

beeline>GRANT ALL ON SERVER server1 TO ROLE admin;

beeline>GRANT ROLE admin TO GROUP hive;

User impersonation should now work.

HDP with Ranger

beeline>GRANT ALL ON test_01.* TO hive/<client node>@<KDC.DOMAIN>

beeline>CREATE DATABASE test_01;

beeline>USE test_01;

beeline>SET hive.exec.dynamic.partition=true;

beeline>SET hive.exec.dynamic.partition.mode=nonstrict;

beeline>SET hive.exec.max.dynamic.partitions=10000;

beeline>SET hive.exec.max.dynamic.partitions.pernode=10000;

beeline>SET hive.metastore.partition.name.whitelist.pattern=.*;

beeline>CREATE TABLE temp_test_table (col_value STRING) tblproperties("skip.header.line.count"="1");

beeline>LOAD DATA LOCAL INPATH '/path/to/test-table-data.csv' OVERWRITE INTO TABLE temp_test_table;

beeline>CREATE TABLE test_table (player_id STRING,runs INT) PARTITIONED BY(year INT);

beeline>INSERT OVERWRITE TABLE test_table PARTITION(year) SELECT regexp_extract(col_value, '^(?:([^,]*),?){1}', 1) player_id, regexp_extract(col_value, '^(?:([^,]*),?){9}', 1) runs, regexp_extract(col_value, '^(?:([^,]*),?){2}', 1) year from temp_test_table;

-

Check the Replication tab. You should see a generated rule for the table test_table.
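The INSERT statement above reads fields 1, 2 and 9 of each comma-separated line as player_id, year and runs, and the temporary table skips the first line as a header. If you have no suitable file to hand, the following sketch writes a small one in that shape. The function name, the filler column names c3 to c8 and all of the values are invented for illustration.

```shell
#!/usr/bin/env bash
# Writes a small CSV with a header row and nine columns, where
# column 1 = player_id, column 2 = year and column 9 = runs.
# Columns 3-8 are filler (hypothetical names) so that runs lands in field 9.
make_test_csv() {
  local out="$1"
  {
    echo "player_id,year,c3,c4,c5,c6,c7,c8,runs"
    echo "player01,2004,0,0,0,0,0,0,12"
    echo "player02,2004,0,0,0,0,0,0,45"
    echo "player01,2005,0,0,0,0,0,0,30"
  } > "$out"
}

# Example usage:
#   make_test_csv /path/to/test-table-data.csv
```

Loading this file with the statements above should give two partitions, one for each distinct year value.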

4.4.2. Consistency Check

Select the generated table rule on the Replication tab and start a consistency check - this will check both HCFS table data and Hive metadata.

4.4.3. Repair

You can test the repair process by creating a metadata inconsistency that bypasses the Live Hive proxy. Setting the metastore URIs with beeline --hiveconf does not work, because the property is blacklisted from the command line. Use the Hive CLI instead:

hive -hiveconf hive.metastore.uris=thrift://<a metastore host>:<port>

hive>DROP TABLE test_01.table-test

Create a data inconsistency by modifying table data on HDFS, bypassing the Fusion client:

hdfs dfs -D "fs.hdfs.impl=org.apache.hadoop.hdfs.DistributedFileSystem" -rm -r /a/partition/folder

| Platform | Default Location |
| --- | --- |
| CDH | /user/hive/warehouse |
| HDP | /apps/hive/warehouse |
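To find the partition folder to remove, the default locations above can be combined with Hive's default directory layout, which is <warehouse>/<database>.db/<table>/<partition column>=<value>. The helper names in this sketch are hypothetical. Tables created with a custom LOCATION, or clusters with a non-default warehouse directory, will not follow this layout.

```shell
#!/usr/bin/env bash
# Prints the default warehouse directory for a platform (from the table above).
warehouse_dir() {
  case "$1" in
    CDH) echo "/user/hive/warehouse" ;;
    HDP) echo "/apps/hive/warehouse" ;;
    *)   echo "unknown platform: $1" >&2; return 1 ;;
  esac
}

# Prints the expected partition directory, assuming Hive's default layout
# <warehouse>/<database>.db/<table>/<partition column>=<value>.
partition_path() {
  local base
  base="$(warehouse_dir "$1")" || return 1
  echo "$base/$2.db/$3/$4"
}

# Example usage:
#   partition_path CDH test_01 test_table year=2004
```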

4.5. Upgrade

An upgrade of Fusion Plugin for Live Hive is completed by uninstalling the plugin on all nodes, followed by a re-installation using the standard installation steps for your platform type.

4.5.1. Upgrade from Hive Metastore Plugin

Use the following procedure to upgrade to Live Hive from Hive Metastore Plugin (the precursor to Live Hive).

Uninstall Hive Metastore Plugin (WDHive)

-

Decouple Fusion from the Hive service, reset hive.metastore.uris

-

Restart Hive service

-

Stop our service(s) in Manager

-

Remove our services from Manager

-

Remove our manager elements

-

Stop UI, Stop Fusion servers

-

Remove Fusion elements

-

Start UI, Fusion servers

On Hadoop Manager

-

Reset all mentions of the hive.metastore.uris parameter in the Hive service config to the default value.

-

Restart the Hive service to deploy the config change.

-

(Cloudera) Stop WD Hive Metastore.

-

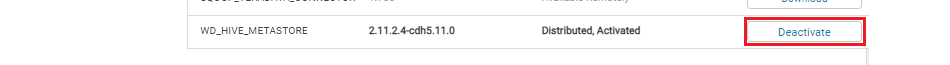

(Cloudera Manager) Deactivate the WD_Hive parcel.

Figure 27. Live Hive Plugin Upgrade

Deactivate the WD_HIVE_METASTORE parcel (Deactivate Only), then 'Remove from hosts'; you may then remove the WD Hive Metastore service from the Cloudera UI.

(Ambari) Stop WD HS2 Template, WD Hive Metastore and WD Hive Metastore Slave services.

Uninstall WDHiveServerTemplate

Uninstall WDHiveMetastoreService

On Fusion Node

-

On the manager node, remove service parcel/stack, e.g.

Ambari

find /var/lib/ambari-server/ ! -readable -prune -o -name 'LIVE_HIVE*' -print

Cloudera

find /opt/cloudera/ ! -readable -prune -o -name 'LIVE_HIVE*' -print | xargs -n1 rm -rf

Remove the files found, e.g. (for CDH)

rm -rf /opt/cloudera/csd/CLIENT-JAR.jar

rm -rf /opt/cloudera/parcels/.flood/PACKAGE-NAME.parcel.torrent

rm -rf /opt/cloudera/parcels/.flood/PACKAGE-NAME.parcel

rm -rf /opt/cloudera/parcels/.flood/PACKAGE-NAME.parcel/PACKAGE-NAME.parcel

-

Bring the Fusion server and UI server to a stop:

service fusion-server stop

service fusion-ui-server stop

-

Remove the WDHive package, e.g.

rpm -qa | grep live-hive

yum remove -y live-hive-fusion-core-plugin-1.2.1.2-1246.noarch

yum remove -y live-hive-fusion-core-proxy-1.2.1.2-1246.noarch

-

Remove package files from the node’s file system:

Removing the dcone/db deletes a Live Hive Plugin configuration, including inductions and all Replicated path settings. After this removal you will need to re-induct all nodes and restore all replication settings. Record all configuration settings before completing this step.

rm -rf /opt/wandisco/fusion-ui-server/ui-client-platform/plugins/wd-live-hive-plugin

rm -rf /opt/wandisco/fusion-ui-server/ui-client-platform/downloads/core_plugins/live-hive

rm -rf /opt/wandisco/fusion-ui-server/plugins/live-hive-ui-server-1.2.1.2

rm -rf /opt/wandisco/fusion/server/dcone/db

-

Start the Fusion server and UI server.

service fusion-server start

service fusion-ui-server start

Now that the Hive Metastore plugin has been removed, you can follow the instructions in the Installation section. See Installation.

4.6. Uninstallation

Use the following section to guide the removal.

| Ensure that you contact WANdisco support before running this procedure. The following procedures currently require a lot of manual editing and should not be used without calling upon WANdisco’s support team for assistance. |

4.6.1. Service removal

If removing Live Hive from a live cluster (rather than just removing Live Hive from a fusion server for re-installation / troubleshooting purposes) the following steps should be performed before removing the plugin:

-

Remove or reset to default the amended hive.metastore.uris parameter in the Hive service config (either in Ambari or Cloudera Manager) that is currently pointing at the Live Hive Proxy.

-

Restart the cluster to deploy the changed config. No Hive clients will now be replicating to the proxy.

-

Stop and delete the proxy service. On Cloudera, deactivate the LIVE_HIVE_PROXY parcel.
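After the restart, you may want to confirm that the deployed client configuration no longer points at the proxy. The sketch below reads hive.metastore.uris from a hive-site.xml style file. The function names are hypothetical, and the parsing assumes that the value element sits on the same line as, or a line after, the matching name element. It is not a full XML parser.

```shell
#!/usr/bin/env bash
# Prints the value of hive.metastore.uris from a hive-site.xml style file.
# Assumption: the <value> element is on the same line as, or a line after,
# the matching <name> element.
metastore_uris() {
  awk '
    /<name>hive\.metastore\.uris<\/name>/ { in_prop = 1 }
    in_prop && /<value>/ {
      sub(/.*<value>/, "")
      sub(/<\/value>.*/, "")
      print
      exit
    }' "$1"
}

# Succeeds if any configured URI still uses the given port.
uses_port() {
  metastore_uris "$1" | tr ',' '\n' | grep -Eq ":$2/?[[:space:]]*$"
}

# Example usage (the path is a typical client config location, adjust as needed):
#   uses_port /etc/hive/conf/hive-site.xml 9090 && echo "still pointing at port 9090"
```

Port 9090 is the default Live Hive proxy port given earlier in this guide. If your proxy uses another port, or a metastore happens to listen on 9090, compare host names instead.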

4.6.2. Package removal

Currently there is no programmatic method for removing components, although you can use the following commands to delete the plugin components, one at a time:

[user@node1 ~]# yum erase -y -q live-hive-fusion-core-plugin

Repository cloudera-manager-5.10.0 is listed more than once in the configuration

Warning: RPMDB altered outside of yum.

WANdisco Hive Metastore Plugin uninstalled successfully.

rm -rf /opt/wandisco/fusion-ui-server/plugins/live-hive-server-2.11-SNAPSHOT/

rm -rf /opt/wandisco/fusion-ui-server/ui-client-platform/plugins/wd-live-hive-plugin/

rm -rf /opt/wandisco/fusion-ui-server/ui-client-platform/downloads/core_plugins/live-hive/

Revert the HS2 template

-

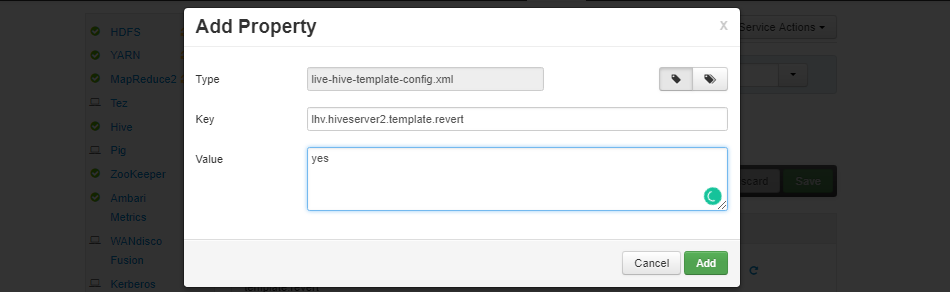

Log in to Ambari and go to the HS2 template component. Go to the Config tab.

-

Inside

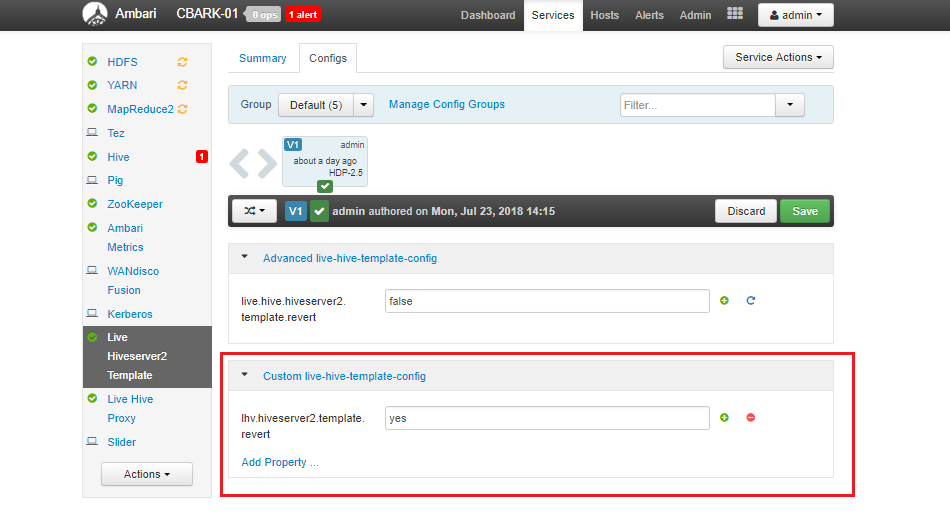

Custom live-hive-template-configadd propertylive.hive.hiveserver2.template.revert- Set any value (1oryesare perfectly fine, the value is not important, but must not be empty). Figure 28. reverting01

Figure 28. reverting01Click Add.

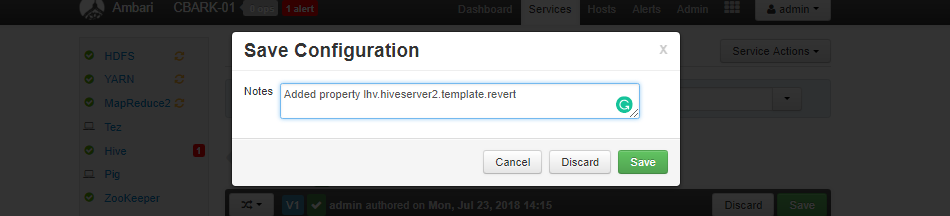

Figure 29. reverting02

Click Save.

-

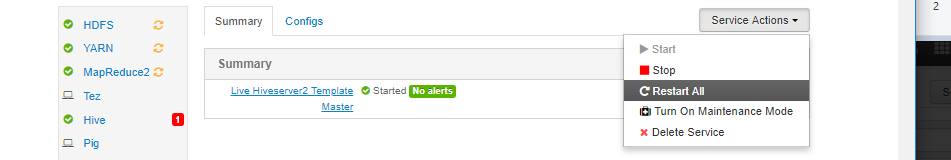

Save the config and Restart the HS2 template. This will undo the template changes.

Figure 30. reverting03

Click Save.

-

Restart any Hiveserver2 instances to allow them to revert to Embedded values. The HS2 template will now go into a stopped state on its own.

Figure 31. reverting04

-

In the Hive service in Ambari, update all instances of the property hive.metastore.uris to point to the existing Metastore instances and not the Live Hive Proxy. templeton.hive.properties is an often-missed property that needs updating.

-

Save the Hive config updates.

-

Restart all Hive services.

| The above steps are provided to ensure a complete and safe removal process. In most cases the steps may not be required as a replacement HS2 template is going to be required. |

Remove stacks (Ambari)

These commands are correct for HDP 2.5.3.0 with Ambari 2.4.1.0, and HDP 2.6.0.3 with Ambari 2.5.0.3. If you are using a different version, the commands may differ slightly.

In the commands below you will need to replace the following:

-

login:password - your details to log in to the Ambari UI

-

AMBARI_SERVER_HOST - the host url

-

<cluster-name> - cluster name e.g. HVLV-01

-

Run the following curl command to show existing services.

curl -v -u login:password -X GET http://AMBARI_SERVER_HOST:8080/api/v1/clusters/<cluster-name>/services

-

Stop the WD Hive Metastore.

curl -u login:password -H "X-Requested-By: ambari" -X PUT -d '{"RequestInfo":{"context":"Stop Service"},"Body":{"ServiceInfo":{"state":"INSTALLED"}}}' http://AMBARI_SERVER_HOST:8080/api/v1/clusters/<cluster-name>/services/LIVE-HIVE-PROXY

-

Stop the WD Hiveserver2 template.

curl -u login:password -H "X-Requested-By: ambari" -X PUT -d '{"RequestInfo":{"context":"Stop Service"},"Body":{"ServiceInfo":{"state":"INSTALLED"}}}' http://AMBARI_SERVER_HOST:8080/api/v1/clusters/<cluster-name>/services/LIVE-HIVESERVER2-TEMPLATE

-

Remove the Metastore - MUST BE REMOVED HERE - HIVESERVER2 Template depends on the metastore.

curl -v -u login:password -H "X-Requested-By: ambari" -X DELETE http://AMBARI_SERVER_HOST:8080/api/v1/clusters/<cluster-name>/services/LIVE-HIVE-PROXY

-

Remove the Hiveserver2 Template.

curl -u login:password -H "X-Requested-By: ambari" -X DELETE http://AMBARI_SERVER_HOST:8080/api/v1/clusters/<cluster-name>/services/LIVE-HIVESERVER2-TEMPLATE

-

Now delete the stacks from /var/lib/ambari-server/resources/stacks/HDP/x.y/services/ and restart Ambari Server.

-

There is also an entry in /opt/wandisco/fusion-ui-server/properties/ui.properties that needs removing to tell the UI the plugin is no longer installed.
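The Ambari REST calls in the steps above share the same host, credentials and URL layout, so they can be wrapped in small helper functions. This is a sketch under stated assumptions rather than a supported tool: the function names (service_url, stop_service, delete_service), the variable names and the example host ambari.example.com are hypothetical, while the URL path, header and request body are copied from the commands above. Nothing is sent to Ambari until you call a function yourself.

```shell
#!/bin/sh
# Sketch only: helper names and default values are illustrative assumptions.
AMBARI_SERVER_HOST="${AMBARI_SERVER_HOST:-ambari.example.com}"  # assumed host
CLUSTER_NAME="${CLUSTER_NAME:-HVLV-01}"                         # your cluster name
AMBARI_AUTH="${AMBARI_AUTH:-login:password}"                    # Ambari UI login

# Build the REST URL for one service, e.g. LIVE-HIVE-PROXY.
service_url() {
  printf 'http://%s:8080/api/v1/clusters/%s/services/%s\n' \
    "$AMBARI_SERVER_HOST" "$CLUSTER_NAME" "$1"
}

# Stop a service by setting its state to INSTALLED.
stop_service() {
  curl -u "$AMBARI_AUTH" -H "X-Requested-By: ambari" -X PUT \
    -d '{"RequestInfo":{"context":"Stop Service"},"Body":{"ServiceInfo":{"state":"INSTALLED"}}}' \
    "$(service_url "$1")"
}

# Delete a service definition from the cluster.
delete_service() {
  curl -u "$AMBARI_AUTH" -H "X-Requested-By: ambari" -X DELETE \
    "$(service_url "$1")"
}
```

For example, after loading the functions into a shell, stop_service LIVE-HIVESERVER2-TEMPLATE issues the same request as the corresponding stop step. Stop each service before deleting it, in the order given in the steps above.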

Put together, the following commands (run, for example, from a short bash script) will clear all Live Hive components from a Fusion server node.

| This will also remove all inductions, leaving all nodes un-inducted with no memberships or replicated folders. |

service fusion-ui-server stop

service fusion-server stop

rm -rf /opt/wandisco/fusion/server/dcone/db

yum remove -y live-hive-fusion-core-plugin.noarch

rm -rf /opt/wandisco/fusion-ui-server/ui-client-platform/downloads/core_plugins/live-hive/

rm -rf /opt/wandisco/fusion-ui-server/ui-client-platform/plugins/wd-live-hive-plugin/

rm -rf /opt/wandisco/fusion-ui-server/plugins/live-hive-ui-server-1.0.0-SNAPSHOT/

sed -i '/plugin.installed.LiveHiveFusionPlugin=true/d' /opt/wandisco/fusion-ui-server/properties/ui.properties

service fusion-server start

service fusion-ui-server start

-

Remove any replicated paths related to plugin (i.e. auto-generated paths for tables), and default/hive. You may need to use the REST API to complete this. See Remove a directory from replication.

-

Check for tasks, wait 2 hours, check again. If the /tasks directory is now empty on ALL nodes, proceed with the following:

-

Stop Fusion Plugin for Live Hive, e.g.

[user@node ~]# service fusion-ui-server stop

[user@node ~]# service fusion-server stop

-

Remove installation components with the following commands:

yum remove -y live-hive-fusion-core-plugin.noarch

rm -rf /opt/wandisco/fusion-ui-server/ui-client-platform/downloads/core_plugins/live-hive/

rm -rf /opt/wandisco/fusion-ui-server/ui-client-platform/plugins/wd-live-hive-plugin/

rm -rf /opt/wandisco/fusion-ui-server/plugins/live-hive-ui-server-1.0.0-SNAPSHOT/

sed -i '/LiveHive/d' /opt/wandisco/fusion-ui-server/properties/ui.properties

-

Now restart, e.g.

[user@docs01-vm1 ~]# service fusion-server start

[user@docs01-vm1 ~]# service fusion-ui-server start

The servers will come back, still inducted and with non-Hive replication folders still in place.
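After running the removal commands, it can be useful to confirm that nothing was missed. The following sketch lists any Live Hive remnants left under the Fusion UI server directory. The function name live_hive_remnants is hypothetical; the paths and the LiveHive pattern are those used in the commands above, with a wildcard in place of the UI server version because that version differs between releases.

```shell
#!/bin/sh
# Sketch only: live_hive_remnants is a hypothetical helper, not a product command.
# Prints one line per Live Hive remnant found; prints nothing when the node is clean.
# The optional argument overrides the UI server root (useful for a dry run elsewhere).
live_hive_remnants() {
  ui_root="${1:-/opt/wandisco/fusion-ui-server}"
  for path in \
    "$ui_root/ui-client-platform/downloads/core_plugins/live-hive" \
    "$ui_root/ui-client-platform/plugins/wd-live-hive-plugin" \
    "$ui_root"/plugins/live-hive-ui-server-*
  do
    # An unmatched wildcard stays literal and simply fails the existence test.
    [ -e "$path" ] && echo "$path"
  done
  if grep -q 'LiveHive' "$ui_root/properties/ui.properties" 2>/dev/null; then
    echo "$ui_root/properties/ui.properties: LiveHive entry still present"
  fi
  return 0
}
```

Calling live_hive_remnants with no argument checks the default installation path. Empty output suggests that the UI server side of the removal is complete; it does not check the yum package or the dcone/db directory.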

4.7. Installation Troubleshooting

This section covers any additional settings or steps that you may need to take in order to successfully complete an installation.

4.7.1. Ensure hadoop.proxyuser.hive.hosts is properly configured

The following Hadoop property needs to be checked when running with the Live Hive plugin. While the settings apply specifically to HDP/Ambari, it may also be necessary to check the property for Cloudera deployments.

Configuration placed in core-site.xml

<property>

<name>hadoop.proxyuser.hive.hosts</name>

<value>host1.domain.com,host-live-hive-proxy.organisation.com,host2.domain.com</value>

<description>

Hostname from where superuser hive can connect. This

is required only when installing hive on the cluster.

</description>

</property>

Proxyuser property

- name

-

Hive hostname from which the superuser "hive" can connect.

- value

-

Either a comma-separated list of your nodes or a wildcard. The hostnames should be included for Hiveserver2, Metastore hosts and LiveHive proxy.
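As an illustration of the rule described above, the following sketch checks whether a given hostname is covered by a hadoop.proxyuser.hive.hosts value. The function name host_allowed is hypothetical, and the check is deliberately simple: it recognises only the * wildcard and exact hostname matches, so any other form of entry that your Hadoop version accepts is not evaluated.

```shell
#!/bin/sh
# Sketch only: host_allowed is a hypothetical helper, not a Hadoop or Fusion command.
# Usage: host_allowed HOSTNAME "PROPERTY_VALUE"
# Returns 0 when the value is the wildcard or lists the hostname exactly.
host_allowed() {
  host="$1"
  # Remove whitespace so that "a, b" and a trailing space are handled.
  value="$(printf '%s' "$2" | tr -d '[:space:]')"
  [ "$value" = "*" ] && return 0
  case ",$value," in
    *",$host,"*) return 0 ;;
  esac
  return 1
}
```

For example, host_allowed host-live-hive-proxy.organisation.com "host1.domain.com,host2.domain.com" returns a non-zero status, which indicates that the Live Hive proxy host still needs to be added to the list.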

Some cluster changes can modify this property

There are a number of changes that can be made to a cluster that might impact configuration, e.g.

-

adding a new Ambari component

-

adding an additional instance of a component

-

adding a new service using the Add Service wizard

These additions can result in unexpected configuration changes, based on installed components, available resources or configuration changes. Common changes might include (but are not limited to) changes to heap settings or changes that impact security parameters, such as the proxyuser values.

| System changes to properties such as hadoop.proxyuser.hive.hosts should be made with great care. If the configuration is not present, impersonation will not be allowed and connections will fail. |

Handling configuration changes

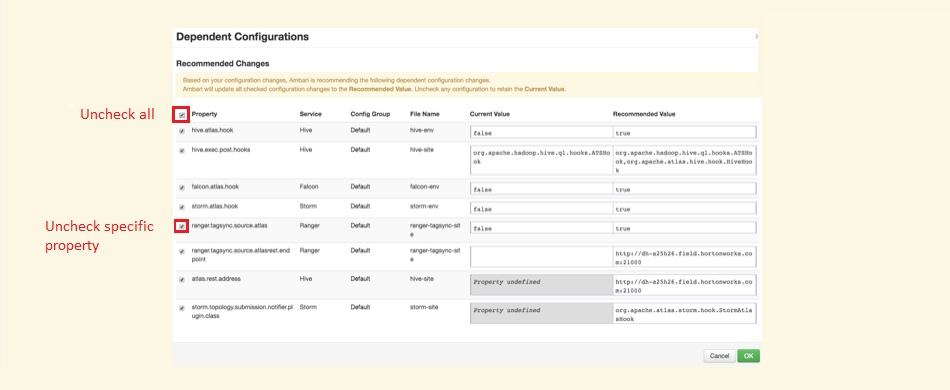

If any of the changes listed in the previous section triggers a system change recommendation, there are two options:

-

A checkbox (selected by default) tells Ambari to apply the recommendation. You can uncheck it (or use the bulk uncheck at the top) for any property that should keep its current value.

Figure 32. Stopping a system change from altering hadoop.proxyuser.hive.hosts

-

Manually adjust the recommended value yourself, as you can specify additional properties that Ambari may not be aware of.

The Proxyuser property values should include hostnames for Hiveserver2, Metastore hosts and LiveHive proxy. Accepting recommendations that do not contain these hostnames (or the alternative all-encompassing wildcard *) will more than likely result in service loss for Hive.

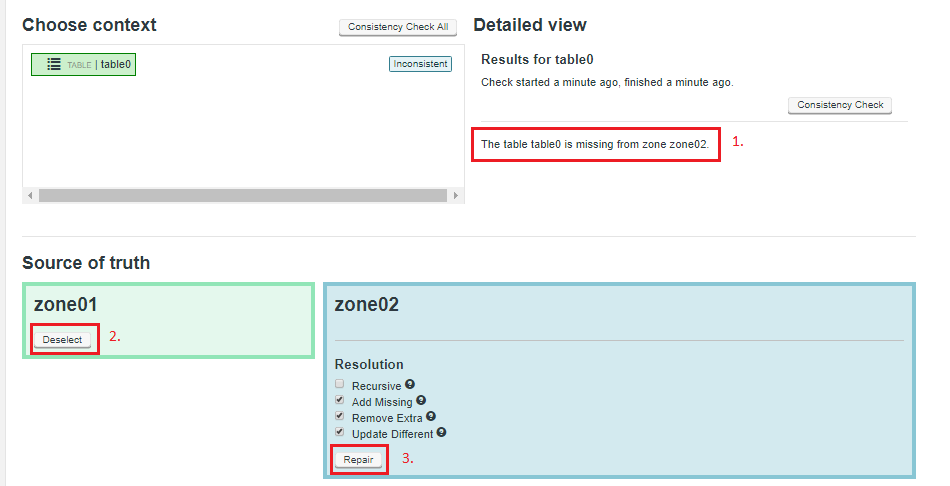

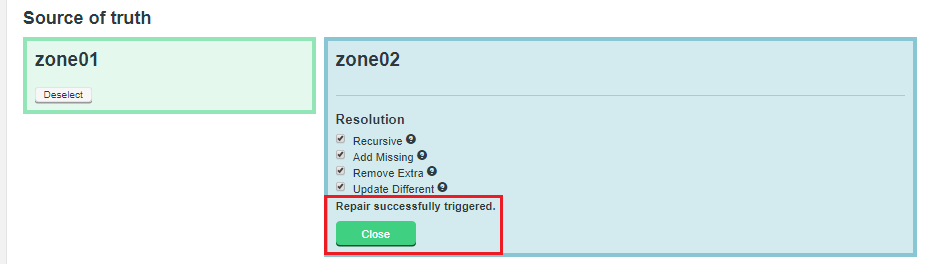

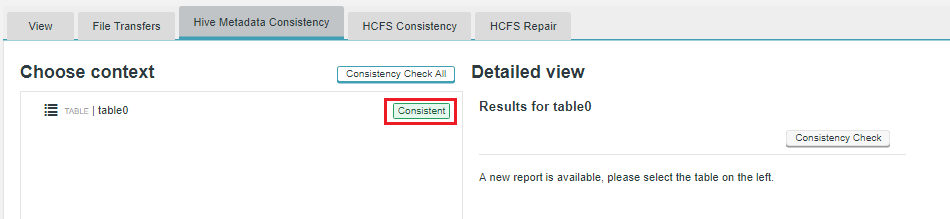

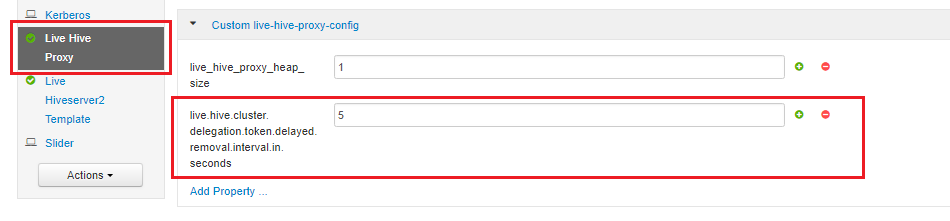

5. Operation

This section covers the steps required for setting up and configuring Live Hive Plugin after installation has been completed.

5.1. Setting up Hive Metadata Replication

This section covers the essential steps that you need to take to start replicating Hive metadata between zones.

| Live Hive Plugin can only replicate transactional data; it isn’t intended to sync large blocks of existing data. |

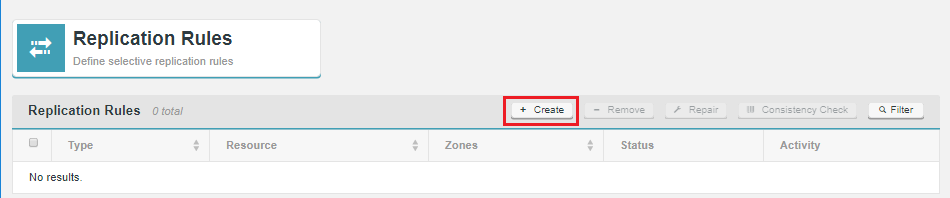

Live Hive Plugin requires that you create two kinds of rules in order to replicate Hive metadata.

- HCFS Rule

-

Create a rule that matches the location of your underlying Hive data on the HDFS file system. This rule handles the actual data replication; without it, a corresponding Hive rule will not work. See Create a HCFS rule.

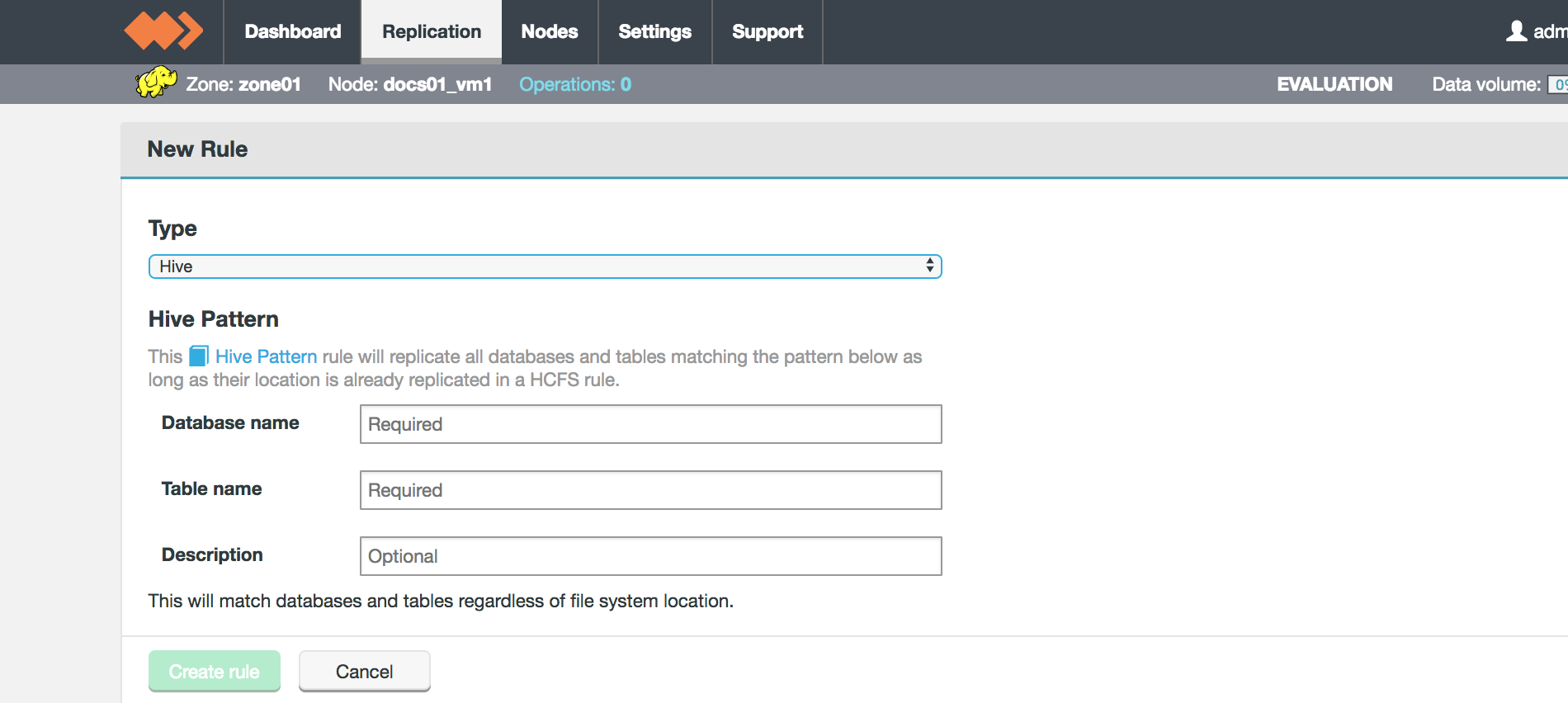

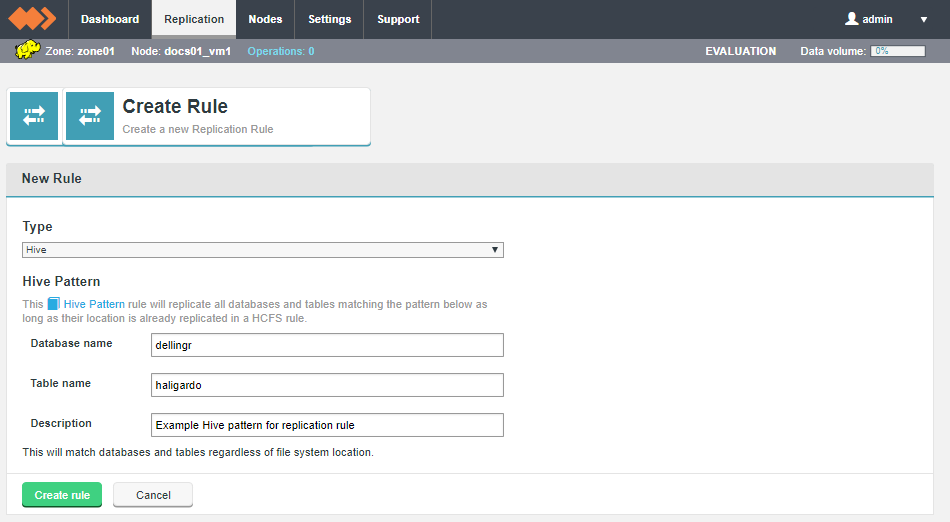

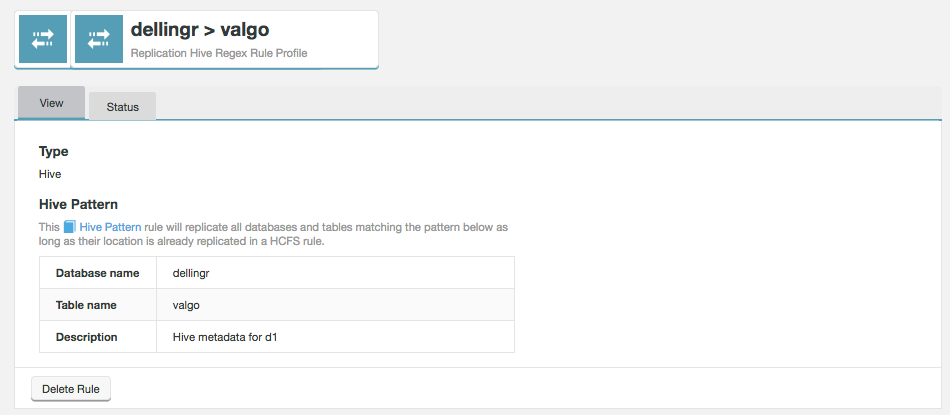

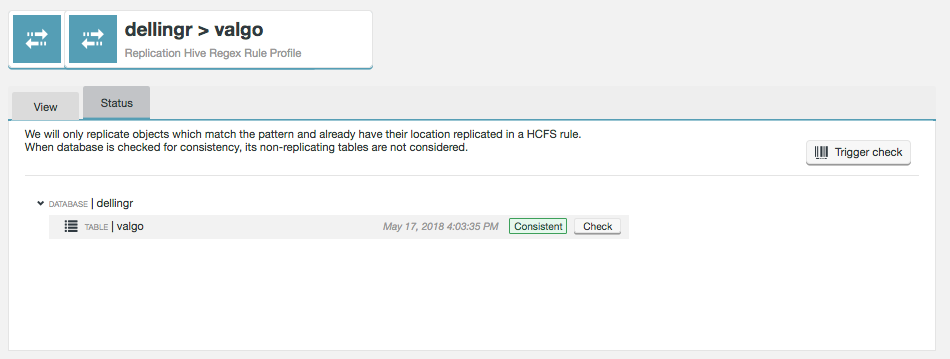

- Hive Rule

-

Create a rule that uses Hive’s pattern syntax to describe Hive databases and tables. This rule applies to any matching HCFS rule, contextualizing Hive metadata. See Create Hive rule.

5.1.1. Create a HCFS rule

Before you can replicate Hive metadata, you need to set up a replication rule for Hive’s resource location in the underlying file system.

-

Go to the Live Hive Plugin UI and click on the Replication tab.

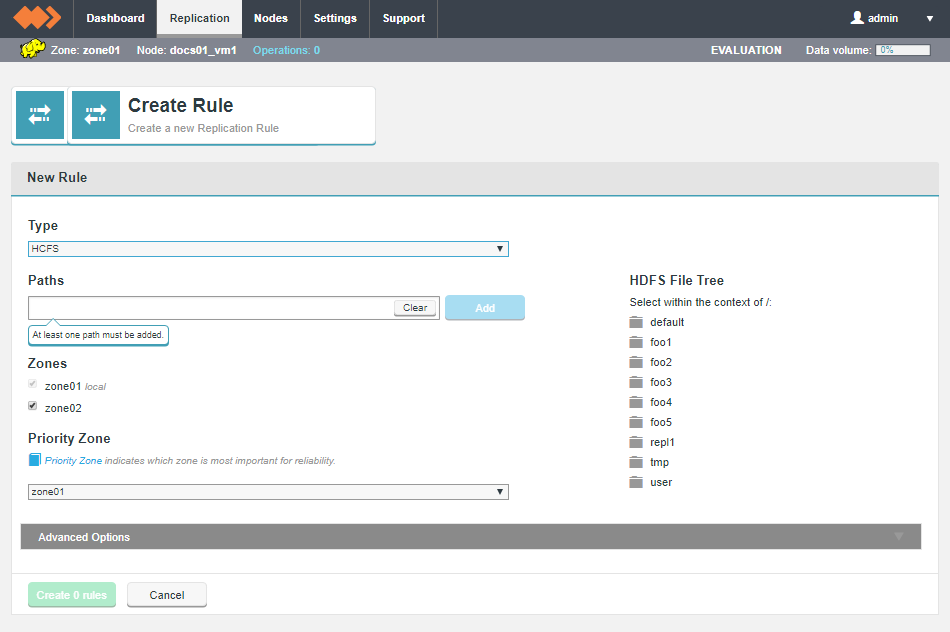

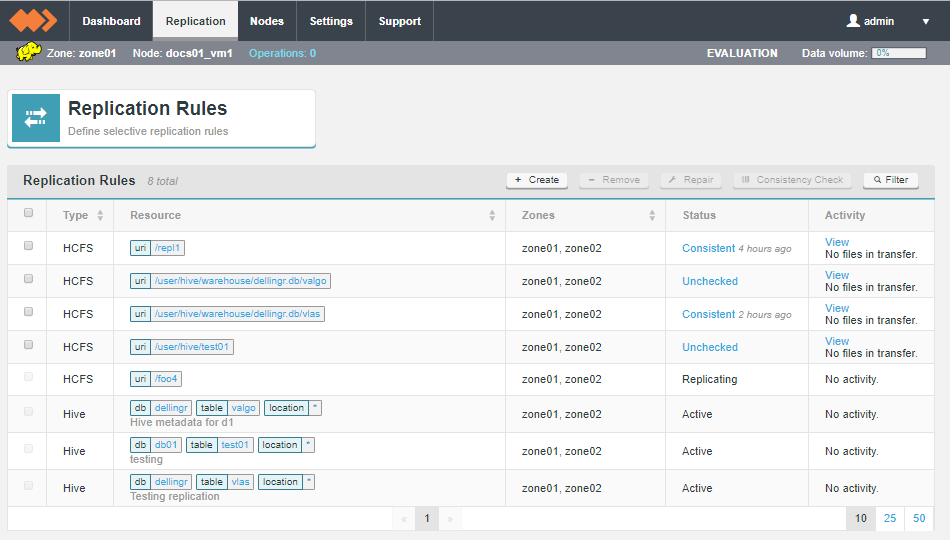

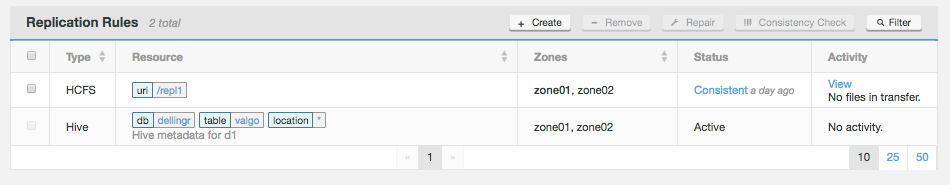

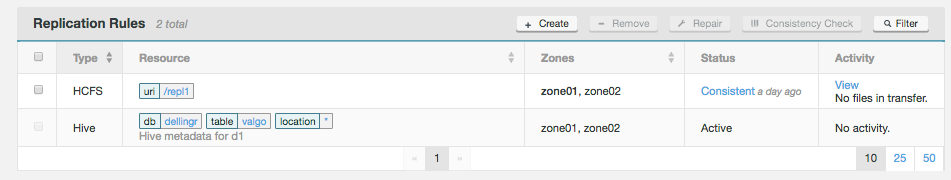

Figure 33. Live Hive Plugin - Replication

-

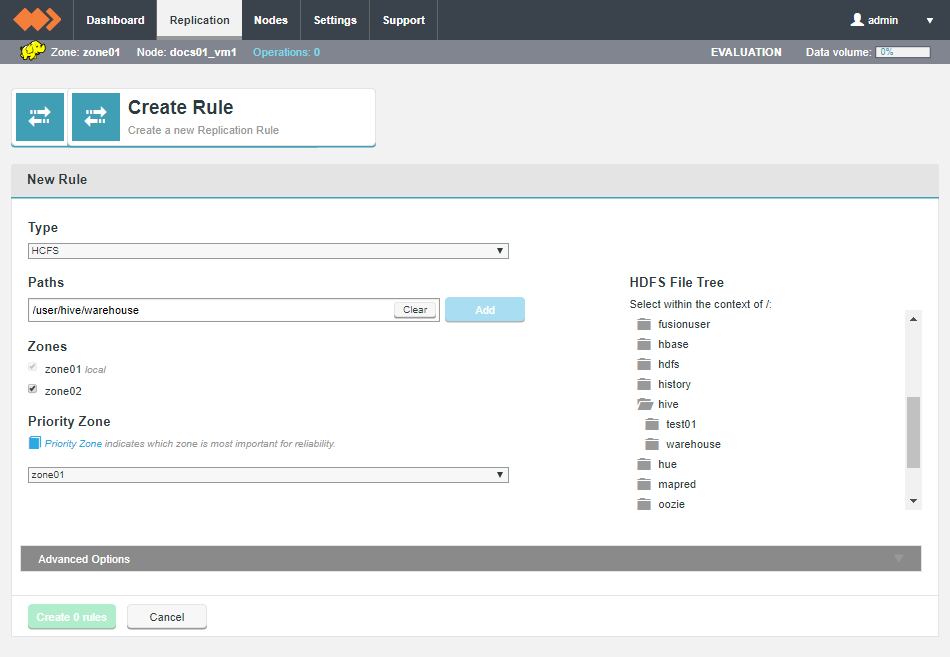

Select Type "HCFS". Use the HDFS File Tree to navigate and select a file system resource. For the purpose of Hive metadata replication, this resource should correspond with the location of the Hive data to be replicated.

Figure 34. Live Hive Plugin - Replication

-

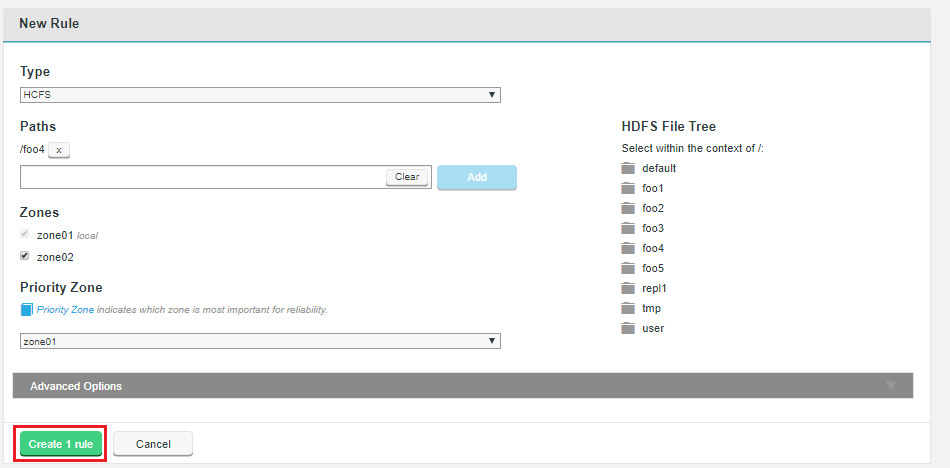

Selected resources will appear on the Paths line. Click Add to use the selected resource.

Figure 35. Live Hive Plugin - Replication

Default database locations

-

CDH: /user/hive/warehouse

-

HDP: /apps/hive/warehouse

-

-

Click Create 1 rule.

Figure 36. Live Hive Plugin - Replication

The rule will now appear on the Replication Rules table.

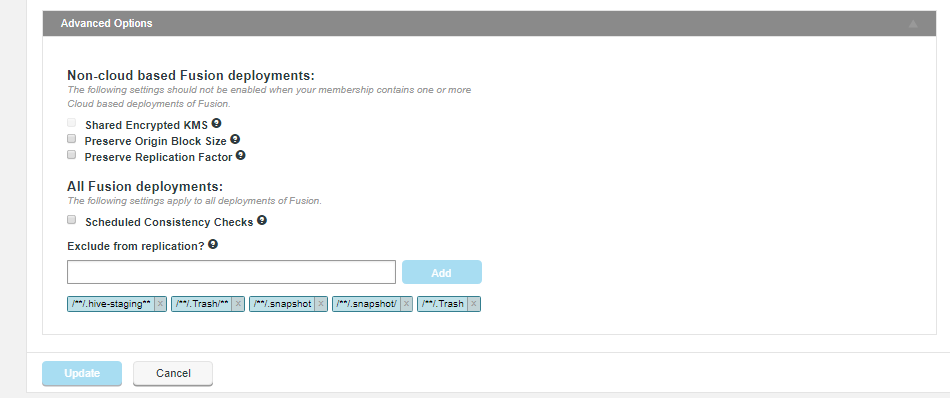

Click on the Advanced Options tab to see further options for setting up the rule:

For non-cloud based deployments the advanced options are:

- Shared Encrypted KMS

-

In deployments where multiple zones share a common KMS server, enable this parameter to specify a virtual prefix path.

- Preserve Origin Block Size

-

The option to preserve the block size from the originating file system is required when Hadoop has been set up to use a columnar storage solution such as Apache Parquet. If you are using a columnar storage format in any of your applications then you should enable this option to ensure that each file sits within the same HDFS block.

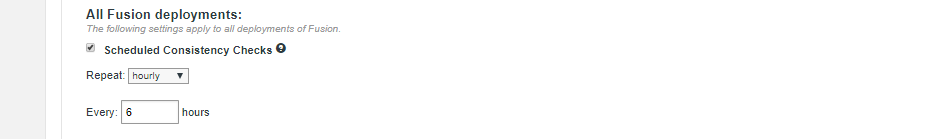

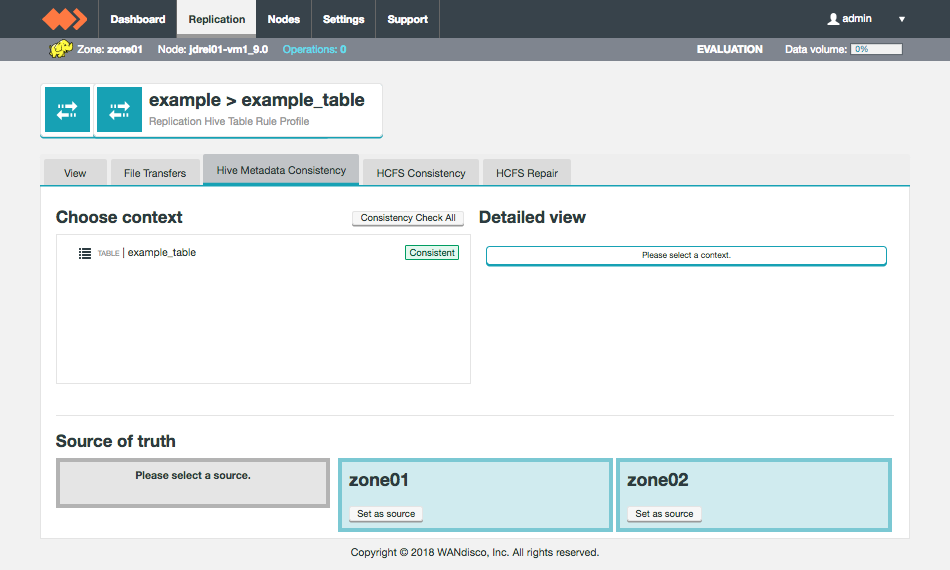

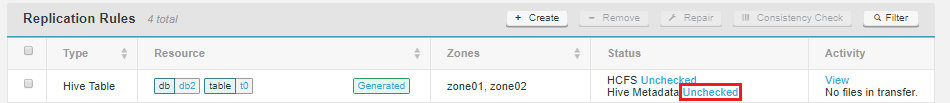

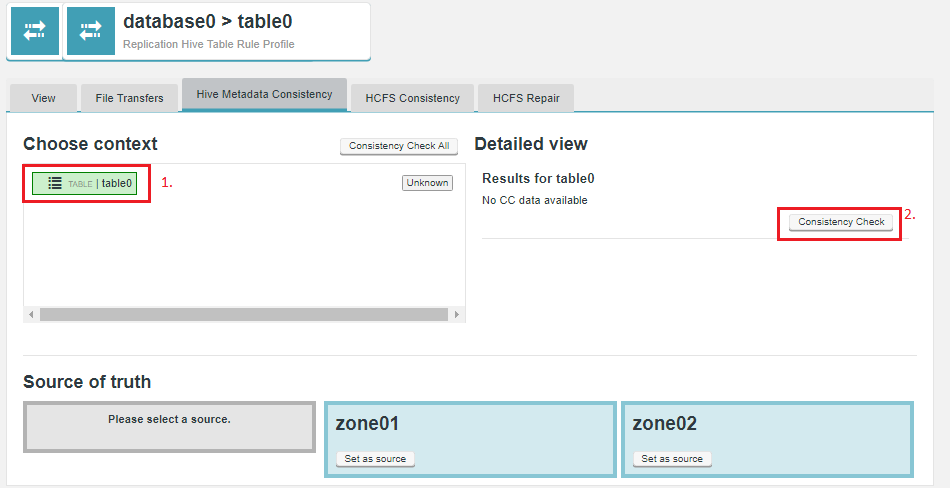

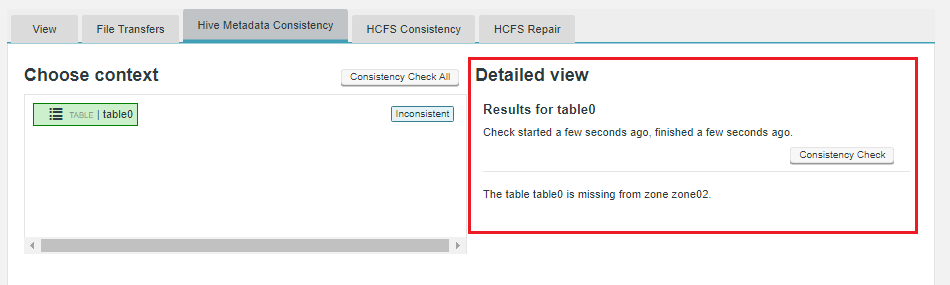

- Preserve Replication Factor