1. Introduction

1.2. What is WANdisco Fusion?

WANdisco Fusion is a software application that allows Hadoop deployments to replicate HDFS data between Hadoop clusters that are running different, even incompatible versions of Hadoop. It is even possible to replicate between different vendor distributions and versions of Hadoop.

1.2.1. Benefits

-

Virtual File System for Hadoop, compatible with all Hadoop applications.

-

Single, virtual Namespace that integrates storage from different types of Hadoop, including CDH, HDP, EMC Isilon, Amazon S3/EMRFS and MapR.

-

Storage can be globally distributed.

-

WAN replication using the WANdisco Fusion LIVE DATA platform, delivering single-copy consistent HDFS data, replicated between far-flung data centers.

1.3. Using this guide

This guide describes how to install and administer WANdisco Fusion as part of a multi data center Hadoop deployment, using either on premises or cloud-based clusters. We break down the guide into the following three sections:

- Deployment Guide

-

Covers the various requirements for running WANdisco Fusion, in terms of hardware, software and environment. Reading and understanding these requirements helps you to avoid deployment problems. Additionally, if you need to make changes on your platform, we strongly recommend that you re-check the Deployment Checklist.

Working in the Hadoop ecosystem covers any special requirements or limitations imposed when running WANdisco Fusion along with various Hadoop applications.

The Installation section covers on-premises deployments into data centers. See Cloud Installation for cloud or hybrid installations.

- Administration Guide

-

This section describes all the common actions and procedures that are required as part of managing WANdisco Fusion in a deployment. It covers how to work with the UI’s monitoring and management tools. Use the Administration Guide if you need to know how to do something.

- Reference Guide

-

This section describes the UI, systematically covering all screens and providing an explanation for what everything does. Use the Reference Guide if you need to check what something does on the UI, or gain a better understanding of WANdisco Fusion’s underlying architecture.

1.4. Admonitions

In the guide we highlight types of information using the following call outs:

| The alert symbol highlights important information. |

| The STOP symbol cautions you against doing something. |

| Tips are principles or practices that you’ll benefit from knowing or using. |

| The KB symbol shows where you can find more information, such as in our online Knowledgebase. |

1.5. Get support

See our online Knowledgebase which contains updates and more information.

If you need more help, raise a case on our support website.

We use terms that relate to the Hadoop ecosystem, WANdisco Fusion and WANdisco’s DconE replication technology. If you encounter any unfamiliar terms, check out the Glossary.

1.6. Give feedback

If you find an error or if you think some information needs improving, raise a case on our support website or email docs@wandisco.com.

2. Release Notes

View the Release Notes. These provide the latest information about the current release, including lists of new functionality, fixes, known issues and software requirements.

3. Deployment Guide

3.1. WANdisco server requirements

This section describes hardware requirements for deploying Hadoop using WANdisco Fusion. These are guidelines that provide a starting point for setting up data replication between your Hadoop clusters.

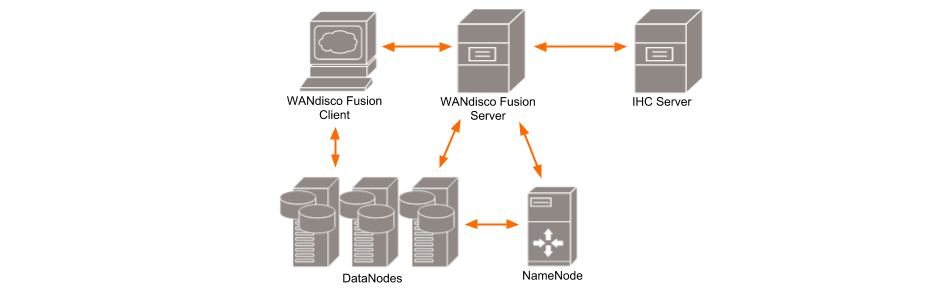

- WANdisco Fusion UI

-

A separate server that provides administrators with a browser-based management console for each WANdisco Fusion server. This can be installed on the same machine as WANdisco Fusion’s server or on a different machine within your data center.

- IHC Server

-

Inter Hadoop Communication servers handle the traffic that runs between zones or data centers that use different versions of Hadoop. IHC Servers are matched to the version of Hadoop running locally. It’s possible to deploy different numbers of IHC servers at each data center; additional IHC Servers can form part of a High Availability mechanism.

|

WANdisco Fusion servers don’t need to be collocated with IHC servers

If you deploy using the installer, both the WANdisco Fusion and IHC servers are installed into the same system by default. This configuration is made for convenience, but they can be installed on separate systems. This would be recommended if your servers don’t have the recommended amount of system memory.

|

- WANdisco Fusion Client

-

Client jar files to be installed on each Hadoop client, such as mappers and reducers that are connected to the cluster. The client is designed to have a minimal memory footprint and impact on CPU utilization.

|

WANdisco Fusion must not be collocated with HDFS servers (DataNodes, etc)

HDFS’s default block placement policy dictates that if a client is collocated on a DataNode, then that collocated DataNode will receive 1 block of whatever file is being put into HDFS from that client. This means that if the WANdisco Fusion Server (where all transfers go through) is collocated on a DataNode, then all incoming transfers will place 1 block onto that DataNode. In that case, the DataNode is likely to consume a lot of disk space in a transfer-heavy cluster, potentially forcing the WANdisco Fusion Server to shut down in order to keep the Prevaylers from getting corrupted.

|

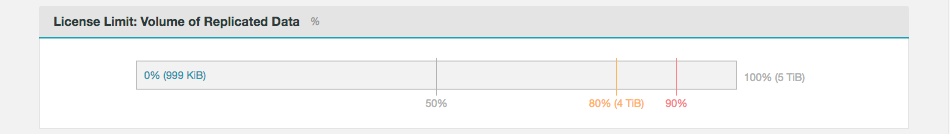

3.2. Licensing

WANdisco Fusion includes a licensing model that can limit operation based on time, the number of nodes and the volume of data under replication. WANdisco generates a license file matched to your agreed usage model. You need to renew your license if you exceed these limits or if your license period ends. See License renewals.

3.2.1. License Limits

When your license limits are exceeded, WANdisco Fusion will operate in a limited manner, but allows you to apply a new license to bring the system back to full operation. Once a license is no longer valid:

-

Write operations to replicated locations are blocked,

-

Warnings and notifications related to the license expiry are delivered to the administrator,

-

Replication of data will no longer occur,

-

Consistency checks and repair operations are not allowed, and

-

Operations for adding replication rules will be denied.

Each different type of license has different limits.

Evaluation license

To simplify the process of pre-deployment testing, WANdisco Fusion is supplied with an evaluation license (also known as a "trial license"). This type of license imposes limits:

| Source | Time limit | No. of Fusion servers | No. of Zones | Replicated Data | Plugins | Specified IPs |
| --- | --- | --- | --- | --- | --- | --- |
| Website | 14 days | 1-2 | 1-2 | 5TB | No | No |

Production license

Customers entering production need a production license file for each node.

These license files are tied to the node’s IP address.

In the event that a node needs to be moved to a new server with a different IP address customers should contact WANdisco’s support team and request that a new license be generated.

Production licenses can be set to expire or they can be perpetual.

| Source | Time limit | No. of Fusion servers | No. of Zones | Replicated Data | Plugins | Specified IPs |
| --- | --- | --- | --- | --- | --- | --- |
| WANdisco | variable (default: 1 year) | variable (default: 20) | variable (default: 10) | variable (default: 20TB) | Yes | Yes |

3.2.2. License renewals

-

The WANdisco Fusion UI provides a warning message whenever you log in.

Figure 2. License expiry warning

-

A warning also appears under the Settings tab on the license Settings panel. Contact WANdisco support or follow the link to the website.

Figure 3. License expiry warning

-

Complete the form to set out your requirements for license renewal.

Figure 4. License webform


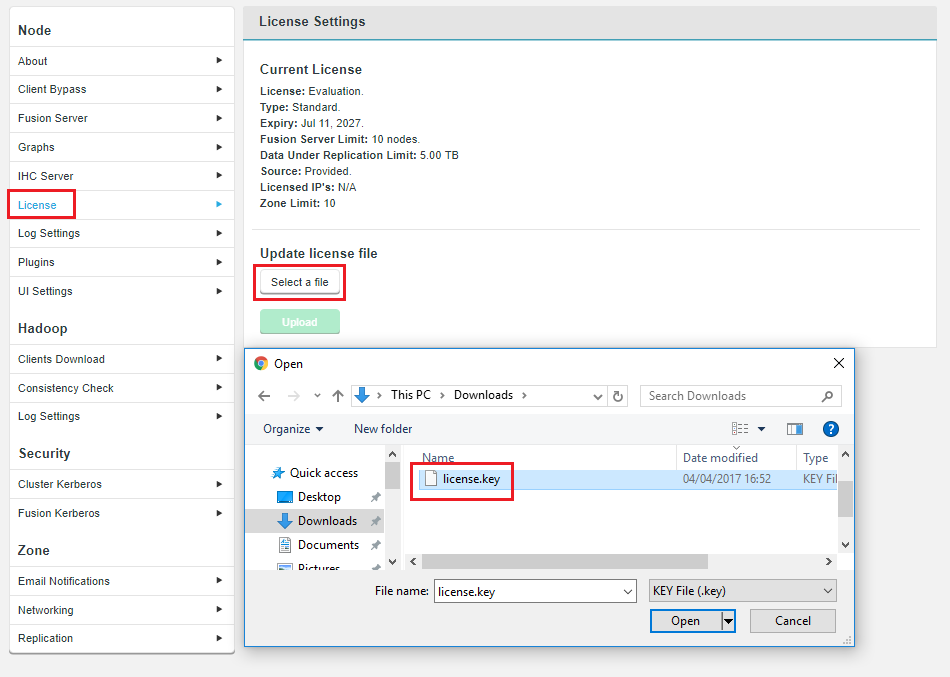

3.2.3. License updates

Unless there’s a problem that stops you from reaching the WANdisco Fusion UI, the correct way to upgrade a node license is through the License panel, under the Settings tab.

-

Click on License to bring up the License Settings panel.

-

Click Select a file. Navigate to and select your replacement License file.

-

Click Upload and review the details of your replacement license file.

License updates when a node is not accessible.

If one or more of your nodes are down or expired, you can still perform a license update by updating the license file on all nodes, via the UI. In this situation, the license upgrade cannot be done in a coordinated fashion, from a single node, but it can be completed locally if done on all nodes.

Manual license update

The following manual procedure should only be used if the above method is not available, such as when a node cannot be started - maybe caused by ownership or permissions errors on an existing license file. If you can, use the procedure outlined above.

-

Log in to your server’s command line, navigate to the properties directory:

/etc/wandisco/fusion/server

-

We recommend that you rename the license.key to something versioned, e.g. license.20170711.

-

Get your new license.key and drop it into the /etc/wandisco/fusion/server directory. You need to account for the following factors:

-

Ensure the filename is license.key

-

Ownership should be the same as the original file.

-

Permissions should be the same as the original file.

-

-

Restart the replicator by running the Fusion init.d script with the following argument:

[root@redhat6 init.d]# service fusion-ui-server restart

This will trigger the WANdisco Fusion replicator restart, which will force WANdisco Fusion to pick up the new license file and apply any changes to permitted usage.

If you don’t restart

If you follow the above instructions but don’t restart, WANdisco Fusion will continue to run with the old license until it performs its daily license validation (which runs at midnight). Provided that your new license key file is valid and has been put in the right place, WANdisco Fusion will then update its license properties without the need to restart.

-

If you run into problems, check the replicator logs (/var/log/fusion/server/) for more information.

PANIC: License is invalid com.wandisco.fsfs.licensing.LicenseException: Failed to load filepath>
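The manual procedure above can be sketched as a small shell helper. This is an illustration only: `install_license` is a hypothetical function, not something shipped with WANdisco Fusion, and it assumes that overwriting the existing file in place is acceptable as a way of keeping the original ownership and permissions.

```shell
#!/usr/bin/env bash
# Sketch of the manual license swap described above.
# install_license is a hypothetical helper, not part of WANdisco Fusion.
# Usage: install_license <new-license-file> [properties-dir]
install_license() {
    local new_license="$1"
    local dir="${2:-/etc/wandisco/fusion/server}"
    local current="$dir/license.key"
    local backup="$dir/license.$(date +%Y%m%d)"

    [ -f "$new_license" ] || { echo "No such file: $new_license" >&2; return 1; }
    [ -f "$current" ] || { echo "No existing $current to take ownership from" >&2; return 1; }

    # Keep the old license under a versioned name (cp -p preserves the mode,
    # and the ownership too when run as root).
    cp -p "$current" "$backup" || return 1

    # Overwrite the contents in place so the file keeps its owner and mode.
    cat "$new_license" > "$current" || return 1
    echo "Installed new license; previous copy saved as $backup"
}
```

Run it as a user that can write to the properties directory, for example `install_license /tmp/license.key`, then restart as described in the procedure above.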

3.3. Prerequisites Checklist

The following prerequisites checklist applies to both the WANdisco Fusion server and separate IHC servers. We recommend that you deploy on physical hardware rather than on a virtual platform; however, there is no reason why you can’t deploy in a virtual environment.

During the installation, your system’s environment is checked to ensure that it will support WANdisco Fusion. The environment checks are intended to catch basic compatibility issues, especially those that may appear during an early evaluation phase.

3.3.1. Memory and storage

You deploy WANdisco Fusion/IHC server nodes in proportion to the data traffic between clusters; the more data traffic you need to handle, the more resources you need to put into the WANdisco Fusion server software.

If you plan to locate both the WANdisco Fusion and IHC servers on the same machine then check the collocated Server requirements:

- CPUs

-

Small WANdisco Fusion server deployment: 8 cores

Large WANdisco Fusion server deployment: 16 cores

Architecture: 64-bit only.

- System memory

-

There are no special memory requirements, except for the need to support a high throughput of data:

Type: Use ECC RAM

Size:

Small WANdisco Fusion server deployment: 48 GB

Large WANdisco Fusion server deployment: 64 GB

System memory requirements are matched to the expected cluster size and should take into account the number of files and block size. The more RAM you have, the bigger the supported file system, or the smaller the block size.

|

Collocation of WANdisco Fusion/IHC servers

Both the WANdisco Fusion server and the IHC server are, by default, installed on the same machine, in which case you would need to double the minimum memory requirements stated above. E.g.

Size:

Small WANdisco Fusion server deployment: 96 GB

Large WANdisco Fusion server deployment: 128 GB or more

|

- Storage space

-

Type: Hadoop operations are storage-heavy and disk-intensive so we strongly recommend that you use enterprise-class Solid State Drives (SSDs).

Size: Recommended: 1 TiB

Minimum: You need at least 250 GiB of disk space for a production environment.

- Network Connectivity

-

Minimum 1Gb Ethernet between local nodes.

Small WANdisco Fusion server: 2Gbps

Large WANdisco Fusion server: 4x10 Gbps (cross-rack)

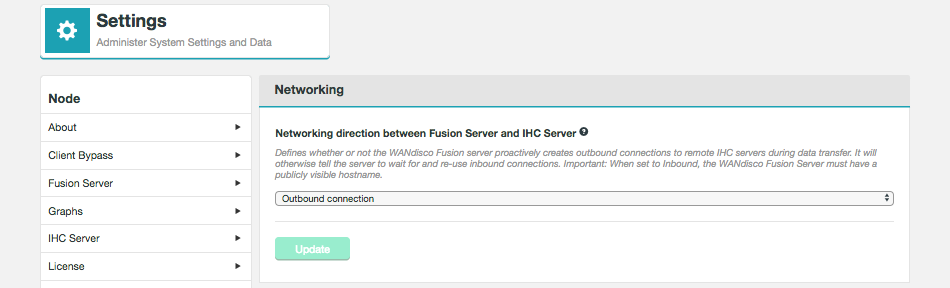

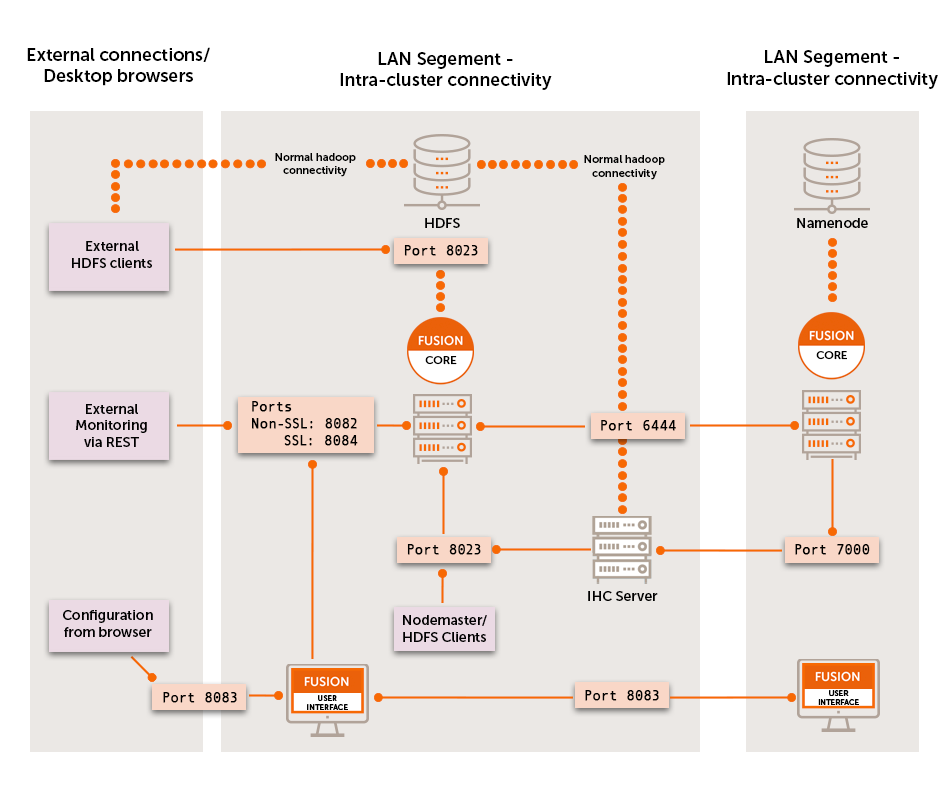

3.3.2. TCP Port Allocation

Before beginning installation you must have sufficient ports reserved. Below are the default, and recommended, ports.

- WANdisco Fusion Server

DConE replication port: 6444

DConE port handles all coordination traffic that manages replication.

It needs to be open between all WANdisco Fusion nodes.

Nodes that are situated in zones that are external to the data center’s network will require unidirectional access through the firewall.

Fusion HTTP Server Port: 8082

The HTTP Server Port or Application/REST API is used by the WANdisco Fusion application for configuration and reporting, both internally and via REST API.

The port needs to be open between all WANdisco Fusion nodes and any systems or scripts that interface with WANdisco Fusion through the REST API.

Fusion HTTPS Server Port: 8084

If SSL is enabled, this port is used by the application for configuration and reporting, both internally and via REST API.

The port needs to be open between all WANdisco Fusion nodes and any systems or scripts that interface with WANdisco Fusion through the REST API.

Fusion Request port: 8023

Port used by WANdisco Fusion server to communicate with HCFS/HDFS clients.

The port is generally only open to the local WANdisco Fusion server, however you must make sure that it is open to edge nodes.

Fusion Server listening port: 8024

Port used by WANdisco Fusion server to listen for connections from remote IHC servers. It is only used in unidirectional mode, but it’s always opened for listening.

Remote IHCs connect to this port if the connection can’t be made in the other direction because of a firewall.

The SSL configuration for this port is controlled by the same ihc.ssl.enabled property that is used for IHC connections performed from the other side.

See Enable SSL for WANdisco Fusion.

IHC ports: 7000-range or 9000-range

7000 range (the exact port is determined at installation time based on what ports are available): used for data transfer between Fusion Server and IHC servers.

It must be accessible from all WANdisco Fusion nodes in the replicated system.

9000 range (the exact port is determined at installation time based on available ports): used for an HTTP Server that exposes JMX metrics from the IHC server.

- WANdisco Fusion UI

-

Web UI interface: 8083

Used to access the WANdisco Fusion Administration UI by end users (requires authentication), also used for inter-UI communication. This port should be accessible from all Fusion servers in the replicated system as well as visible to any part of the network where administrators require UI access.
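Before installing, it can help to confirm that nothing on the host is already listening on the default ports listed above. The following is a minimal sketch, assuming a Linux host where `ss -ltn` is available. `ports_in_use` and `FUSION_PORTS` are illustrative names, not part of the product, and the IHC ports are left out because they are chosen at installation time.

```shell
#!/usr/bin/env bash
# Default ports taken from the list above (IHC ports are picked at install time).
FUSION_PORTS="6444 8082 8084 8023 8024 8083"

# ports_in_use: reads `ss -ltn` style output on stdin and prints each port
# from FUSION_PORTS that already has a local listener.
ports_in_use() {
    awk -v wanted="$FUSION_PORTS" '
        BEGIN { n = split(wanted, p, " "); for (i = 1; i <= n; i++) want[p[i]] = 1 }
        NR > 1 {
            # Local address is column 4, e.g. 0.0.0.0:8083 or [::]:8083
            k = split($4, parts, ":")
            port = parts[k]
            if (port in want && !(port in seen)) { seen[port] = 1; print port }
        }
    '
}
```

Typical use is `ss -ltn | ports_in_use`. Empty output suggests the default ports are free on that host. This only checks local listeners; it does not test whether firewalls allow traffic between nodes.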

3.3.3. Software requirements

Operating systems:

RHEL 6 x86_64

RHEL 7 x86_64

Oracle Linux 6 x86_64

Oracle Linux 7 x86_64

CentOS 6 x86_64

CentOS 7 x86_64

Ubuntu 14.04LTS

Ubuntu 16.04LTS

SLES 11 x86_64

SLES 12 x86_64

We only support AMD64/Intel64 64-Bit (x86_64) architecture.

Web browsers

We support the following browsers:

-

Chrome 55 and later

-

Edge 15 and later

-

Firefox 48 and later

Other browsers and older versions may be used but bugs may be encountered.

Java

Java JRE 1.7 / 1.8.

Testing and development are done using a minimum of Java JRE 1.7, or the minimum version for the target platform, whichever is the higher.

We have now added support for Open JDK 7, which is used in cloud deployments.

For other types of deployment we recommend running with Oracle’s Java as it has undergone more testing.

|

"JAVA_HOME could not be discovered" error

You need to ensure that the system user that is set to run Fusion has the JAVA_HOME variable set. Installation failures that result in a message "JAVA_HOME could not be discovered" are usually caused by the specific WAND_USER account not having JAVA_HOME set. From WANdisco Fusion 2.11 |

|

Heap leak when using OpenSSL or BoringSSL

There is a Netty bug (https://github.com/netty/netty/issues/5372, Leak in OpenSSL Context) where any use of tc-native with OpenSSL or BoringSSL results in on-heap and off-heap memory leaks from Netty. The issue is fixed in Fusion 2.11.2.4 and 2.12.0.4 by bundling an updated version of Netty that contains a fix. Manual Fix

|

- Architecture

-

64-bit only

- Heap size

-

Set the Java heap size to a minimum of 1 gigabyte, or the maximum available memory on your server.

Use a fixed heap size. Give -Xms and -Xmx the same value. Make this as large as your server can support.

Avoid Java defaults. Ensure that garbage collection will run in an orderly manner. Configure NewSize and MaxNewSize. Use 1/10 to 1/5 of Max Heap size for JVMs larger than 4GB. Stay deterministic!

When deploying to a cluster, make sure you have exactly the same version of the Java environment on all nodes.

- Where’s Java?

-

Although WANdisco Fusion only requires the Java Runtime Environment (JRE), Cloudera and Hortonworks may install the full Oracle JDK with the high strength encryption package included. This JCE package is a requirement for running Kerberized clusters.

For good measure, remove any JDK 6 that might be present in /usr/java. Make sure that /usr/java/default and /usr/java/latest point to an instance of Java 7; your Hadoop manager should install this.

Ensure that you set the JAVA_HOME environment variable for the root user on all nodes. Remember that, on some systems, invoking sudo strips environment variables, so you may need to add JAVA_HOME to sudo’s list of preserved variables.

|

Due to a bug in JRE 7, you should not run javax.security.sasl.level=INFO. The problem has been fixed for JDK 8 (FUS-1946).

Due to a bug in JDK 8 prior to 8u60, replication throughput with SSL enabled can be extremely slow (less than 4MB/sec). This is down to an inefficient GCM implementation. Workaround |

File descriptor/Maximum number of processes limit

Maximum User Processes and Open Files limits are low by default on some systems. It is possible to check their value with the ulimit or limit command:

ulimit -u && ulimit -n

-u The maximum number of processes available to a single user.

-n The maximum number of open file descriptors.

For optimal performance, we recommend both hard and soft limits values to be set to 64000 or more:

RHEL6 and later: A file /etc/security/limits.d/90-nproc.conf explicitly overrides the settings in limits.conf, i.e.:

# Default limit for number of user's processes to prevent

# accidental fork bombs.

# See rhbz #432903 for reasoning.

* soft nproc 1024 <- Increase this limit or ulimit -u will be reset to 1024

Ambari and Cloudera Manager will set various ulimit entries; you must ensure hard and soft limits are set to 64000 or higher.

Check with the ulimit or limit command. If the limit is exceeded the JVM will throw an error: java.lang.OutOfMemoryError: unable to create new native thread.
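The check described above can be scripted so that it covers both the soft and hard values in one pass. The following is a sketch only, assuming bash, where `ulimit -S` and `ulimit -H` report the soft and hard values. `limit_ok` and `report_limits` are hypothetical helper names.

```shell
#!/usr/bin/env bash
# limit_ok: succeed if a ulimit value meets the minimum (default 64000, the
# figure recommended above). "unlimited" always passes.
limit_ok() {
    local value="$1" min="${2:-64000}"
    [ "$value" = "unlimited" ] && return 0
    [ "$value" -ge "$min" ] 2>/dev/null
}

# report_limits: print the soft (S) and hard (H) limits for max user
# processes (-u) and open files (-n); return non-zero if any is too low.
report_limits() {
    local flag kind value status=0
    for flag in u n; do
        for kind in S H; do
            value=$(ulimit "-$kind" "-$flag")
            if limit_ok "$value"; then
                echo "ok:  ulimit -$kind -$flag = $value"
            else
                echo "LOW: ulimit -$kind -$flag = $value (want 64000 or more)"
                status=1
            fi
        done
    done
    return "$status"
}
```

Run `report_limits` as the user that WANdisco Fusion will run as, since limits are applied per user.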

Additional requirements

iptables

Use the following procedure to temporarily disable iptables, during installation:

RedHat 6

-

Turn off with

$ sudo chkconfig iptables off

-

Reboot the system.

-

On completing installation, re-enable with

$ sudo chkconfig iptables on

RedHat 7

-

Turn off with

$ sudo systemctl disable firewalld

-

Reboot the system.

-

On completing installation, re-enable with

$ sudo systemctl enable firewalld

Comment out requiretty in /etc/sudoers

The installer’s use of sudo won’t work with some Linux distributions (such as CentOS) where /etc/sudoers enables requiretty, which means sudo can only be invoked from a logged-in terminal session, not through cron or a bash script. When requiretty is enabled, the installer will fail with an error:

execution refused with "sorry, you must have a tty to run sudo" message

Ensure that requiretty is commented out:

# Defaults requiretty
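To see whether this applies to a host before running the installer, a quick check along these lines may help. It is a sketch: `requiretty_enabled` is a hypothetical helper, and it only looks for a plain `Defaults requiretty` line in the one file it is given, so per-user variants or files under /etc/sudoers.d are not covered.

```shell
#!/usr/bin/env bash
# requiretty_enabled: succeed if the given sudoers file contains an active
# (uncommented) "Defaults requiretty" line. Defaults to /etc/sudoers.
requiretty_enabled() {
    local file="${1:-/etc/sudoers}"
    grep -Eq '^[[:space:]]*Defaults[[:space:]]+requiretty' "$file"
}
```

For example, `sudo bash -c "$(declare -f requiretty_enabled); requiretty_enabled && echo 'requiretty is enabled'"` runs the check with the privileges needed to read /etc/sudoers. Make any change to that file with visudo.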

SSL encryption

- Basics

-

WANdisco Fusion supports SSL for any or all of the three channels of communication: Fusion Server - Fusion Server, Fusion Server - Fusion Client, and Fusion Server - IHC Server.

- keystore

-

A keystore (containing a private key / certificate chain) is used by an SSL server to encrypt the communication and create digital signatures.

- truststore

-

A truststore is used by an SSL client for validating certificates sent by other servers. It simply contains certificates that are considered "trusted". For convenience you can use the same file as both the keystore and the truststore, you can also use the same file for multiple processes.

- Enabling SSL

-

You can enable SSL during installation (Step 4 Server) or through the SSL Settings screen, selecting a suitable Fusion HTTP Policy Type. It is also possible to enable SSL through a manual edit of the application.properties file. We don’t recommend using the manual method, although it is available if needed: Enable HTTPS.

| Due to a bug in JDK 8 prior to 8u60, replication throughput with SSL enabled can be extremely slow (less than 4MB/sec). This is down to an inefficient GCM implementation. |

Workaround

Upgrade to Java 8u60 or greater, or ensure WANdisco Fusion is able to make use of OpenSSL libraries instead of JDK. Requirements for this can be found at http://netty.io/wiki/requirements-for-4.x.html

FUS-3041

Disabling low strength encryption ciphers

Transport Layer Security (TLS) and its predecessor, Secure Sockets Layer (SSL), are widely adopted protocols that secure the transfer of data between the client and the server through authentication, encryption and integrity checking.

Recent research has indicated that some of the cipher systems that are commonly used in these protocols do not offer the level of security that was previously thought.

In order to stop WANdisco Fusion from using the disavowed ciphers (DES, 3DES, and RC4), use the following procedure on each node where the Fusion service runs:

-

Confirm JRE_HOME/lib/security/java.security allows override of security properties, which requires

security.overridePropertiesFile=true

-

As root user:

mkdir /etc/wandisco/fusion/security

chown hdfs:hadoop /etc/wandisco/fusion/security

-

As hdfs user:

cd /etc/wandisco/fusion/security

echo "jdk.tls.disabledAlgorithms=SSLv3, DES, DESede, RC4" >> /etc/wandisco/fusion/security/fusion.security

-

As root user:

cd /etc/init.d

-

Edit the fusion-server file to add

-Djava.security.properties=/etc/wandisco/fusion/security/fusion.security

to the JVM_ARG property.

-

Edit the fusion-ihc-server-xxx file to add

-Djava.security.properties=/etc/wandisco/fusion/security/fusion.security

to the JVM_ARG property.

cd /opt/wandisco/fusion-ui-server/lib

-

Edit the init-functions.sh file to add

-Djava.security.properties=/etc/wandisco/fusion/security/fusion.security

to the JAVA_ARGS property.

-

-

Restart the fusion server, ui server and IHC server.
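The file-creation steps of this procedure can be sketched as a helper that is safe to run more than once. `write_fusion_security` is a hypothetical name. The optional directory argument exists only so the sketch can be tried in a scratch location. The ownership change and the edits to the init scripts are left as the manual steps described above.

```shell
#!/usr/bin/env bash
# write_fusion_security: create the override directory and append the
# disabledAlgorithms line to fusion.security if it is not already there.
# Ownership (chown hdfs:hadoop) is not handled here; do that step as root.
write_fusion_security() {
    local dir="${1:-/etc/wandisco/fusion/security}"
    local file="$dir/fusion.security"
    local line='jdk.tls.disabledAlgorithms=SSLv3, DES, DESede, RC4'
    mkdir -p "$dir" || return 1
    # -x matches the whole line and -F treats it as a fixed string, so a
    # rerun does not append a duplicate entry.
    grep -qxF "$line" "$file" 2>/dev/null || echo "$line" >> "$file"
}
```

Unlike the plain `echo ... >>` in the procedure, this does not add the line a second time if the procedure is repeated on a node.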

3.3.4. Supported versions

The Hadoop distributions and versions supported are:

-

CDH 5.5.0 - 5.14.x

-

CDH 5.13 support added in version 2.11.1

-

CDH 5.14 support added in version 2.11.2

-

-

HDP 2.3.0 - 2.6.4

-

HDP 2.6.3 and HDP 2.6.4 support added in version 2.11.1

-

-

HDI 3.5 - 3.6

-

EMR 5.3 - 5.4

-

GCS 1.0 - 1.1

-

ASF 2.5.0 - 2.7.0

-

IBM (IOP) 4.0 - 4.2.5 (only 4.2.5 supported from 2.11.2)

-

MapR 5.0 - 5.2.0

3.3.5. Supported applications

Supported Big Data applications may be noted here, as we complete testing:

| Application | Version Supported | Tested with |
| --- | --- | --- |
| Syncsort DMX-h | 8.2.4 | See Knowledgebase |

3.3.6. Final Preparations

We’ll now look at what you should know and do as you begin the installation.

Time requirements

The time required to complete a deployment of WANdisco Fusion will in part be based on its size; larger deployments with more nodes and more complex replication rules will take correspondingly more time to set up. Use the guide below to help you plan for deployments.

-

Run through this document and create a checklist of your requirements. (1-2 hours).

-

Complete the WANdisco Fusion installation (about 20 minutes per node, or 1 hour for a test deployment).

-

Complete client installations and complete basic tests (1-2 hours).

Of course, this is a guideline to help you plan your deployment. You should think ahead and determine if there are additional steps or requirements introduced by your organization’s specific needs.

Network requirements

-

See the deployment checklist for a list of the TCP ports that need to be open for WANdisco Fusion.

-

WANdisco Fusion does not require that reverse DNS is set up but it is vital that all nodes can be resolved from all zones.

3.3.7. Security

Requirements for Kerberos

If you are running Kerberos on your cluster you should consider the following requirements:

-

Kerberos is already installed and running on your cluster

-

Fusion-Server is configured for Kerberos as described in the Kerberos section.

-

Kerberos configuration is completed before starting the installation.

For information about running Fusion with Kerberos, read this guide’s chapter on Kerberos.

|

Warning about mixed Kerberized / Non-Kerberized zones

In deployments that mix kerberized and non-kerberized zones it’s possible that permission errors will occur because the different zones don’t share the same underlying system superusers. In this scenario you would need to ensure that the superuser for each zone is created on the other zones.

|

For example, if you connect a zone that runs CDH, which has superuser 'hdfs', with a zone running MapR, which has superuser 'mapr', you would need to create the user 'hdfs' on the MapR zone and 'mapr' on the CDH zone.

|

Kerberos Relogin Failure with Hadoop 2.6.0 and JDK7u80 or later

Hadoop Kerberos relogin fails silently due to HADOOP-10786. This impacts Hadoop 2.6.0 when JDK7u80 or later is used (including JDK8).

Users should downgrade to JDK7u79 or earlier, or upgrade to Hadoop 2.6.1 or later.

|

Manual Kerberos configuration

See the Knowledge Base for instructions on setting up manual Kerberos settings. You only need these in special cases as the steps have been handled by the installer. See Manual Updates for WANdisco Fusion UI Configuration.

Instructions on setting up auth-to-local permissions, mapping a Kerberos principal onto a local system user. See the KB article - Setting up Auth-to-local.

3.3.8. Clean Environment

Before you start the installation you must ensure that there are no existing WANdisco Fusion installations or WANdisco Fusion components installed on your elected machines. If you are about to upgrade to a new version of WANdisco Fusion you must first see the Uninstall chapter.

|

Ensure HADOOP_HOME is set in the environment

Where the hadoop command isn’t in the standard system path, administrators must ensure that the HADOOP_HOME environment variable is set for the root user and the user WANdisco Fusion will run as, typically hdfs.

When set, HADOOP_HOME must be the parent of the bin directory into which the Hadoop scripts are installed.

Example: if the hadoop command is:

|

/opt/hadoop-2.6.0-cdh5.4.0/bin/hadoop

then HADOOP_HOME must be set to

/opt/hadoop-2.6.0-cdh5.4.0/.
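The rule above (HADOOP_HOME is the parent of the bin directory) can be expressed as a one-line helper. This is a sketch; `hadoop_home_for` is a hypothetical name, and it works purely on the path string, so it does not resolve symlinks.

```shell
#!/usr/bin/env bash
# hadoop_home_for: print the HADOOP_HOME implied by the full path of a
# hadoop executable, i.e. the parent of the bin directory that contains it.
hadoop_home_for() {
    local hadoop_bin="$1"
    dirname "$(dirname "$hadoop_bin")"
}

hadoop_home_for /opt/hadoop-2.6.0-cdh5.4.0/bin/hadoop
# prints /opt/hadoop-2.6.0-cdh5.4.0
```

The result can then be exported for both the root user and the user WANdisco Fusion will run as, for example `export HADOOP_HOME="$(hadoop_home_for /opt/hadoop-2.6.0-cdh5.4.0/bin/hadoop)"`.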

3.3.9. Installer File

You need to match the WANdisco Fusion installer file to each data center’s version of Hadoop. Installing the wrong version of WANdisco Fusion will result in the IHC servers being misconfigured.

|

Why installation requires root user

Fusion core and Fusion UI packages are installed using root permissions, using the RPM tool (or equivalent for .deb packages). RPM requires root to run - hence the need for the permissions. The main requirement for running with root is the need for the installer to create the directory structure for WANdisco Fusion components, e.g.

Once all files are put into place, they are permissioned and owned by a specific fusion user. After the installation of the artifacts root is not used and the Fusion processes themselves are run as a specific Fusion user (usually "hdfs"). |

4. Installation

This section will run through the installation of WANdisco Fusion from the initial steps where we make sure that your existing environment is compatible, through the procedure for installing the necessary components and then finally configuration.

- Deployment Checklist

-

Important hardware and software requirements, along with considerations that need to be made before starting to install WANdisco Fusion.

- Final Preparations

-

Things that you need to do immediately before you start the installation.

- Starting the installer

-

Step by step guide to the installation process when using the unified installer. For instructions on completing a fully manual installation see On-premises Installation.

- Configuration

-

Runs through the changes you need to make to start WANdisco Fusion working on your platform.

- Working in the Hadoop ecosystem

-

Necessary steps for getting WANdisco Fusion to work with supported Hadoop applications.

- Deployment appendix

-

Extras that you may need that we didn’t want cluttering up the installation guide.

4.1. On premises installation

The following section covers the installation of WANdisco Fusion into a cluster that is based in your organization’s own premises.

|

Installation via sudo-restricted non-root user

In some deployments it may not be permitted to complete the installation using root user. It should be possible to complete an installation with a limited set of sudo commands.

|

|

Workaround if /tmp directory is "noexec"

Running the installer script will write files to the system’s /tmp directory. If the system’s /tmp directory is mounted with the "noexec" option then you will need to use the following argument when running the installer:

--target <someDirectoryWhichCanBeWrittenAndExecuted>

E.g.

sudo ./fusion-ui-server-<version>_rpm_installer.sh --target /opt/wandisco/installation/

The location must be somewhere

|

4.1.1. Starting the installation

Use the following steps to complete an installation using the installer file.

This requires an administrator to enter details throughout the procedure.

Once the initial settings are entered through the terminal session, the installation is then completed through a browser or alternatively, using a Silent Installation option to handle configuration programmatically.

Note: The screenshots shown in this section are from a Cloudera installation so there may be slight differences to your set up.

-

Open a terminal session on your first installation server. Download the appropriate installer from customer.wandisco.com. You need the appropriate one for your platform.

-

Ensure the downloaded files are executable e.g.

chmod +x fusion-ui-server-<version>_rpm_installer.sh

-

Execute the file with root permissions, e.g.

sudo ./fusion-ui-server-<version>_rpm_installer.sh

-

The installer will now start.

Verifying archive integrity... All good.

Uncompressing WANdisco Fusion..............................

[WANdisco ASCII-art banner]

Welcome to the WANdisco Fusion installation

You are about to install WANdisco Fusion version 2.11

Do you want to continue with the installation? (Y/n) y

The installer will perform an integrity check, confirm the product version that will be installed, then invite you to continue. Enter "Y" to continue the installation.

-

The installer checks that both Perl and Java are installed on the system.

Checking prerequisites:
Checking for perl: OK
Checking for java: OK

See the Installation Checklist Java Requirements for more information about these requirements.

-

Next, confirm the port that will be used to access WANdisco Fusion through a browser.

Which port should the UI Server listen on? [8083]:

-

Select the platform version and type from the list of supported platforms. The examples given below are from a Cloudera installation.

Please specify the appropriate backend from the list below:
[0] cdh-5.3.x
[1] cdh-5.4.x
[2] cdh-5.5.x
[3] cdh-5.6.x
[4] cdh-5.7.x
[5] cdh-5.8.x
[6] cdh-5.9.x
[7] cdh-5.10.x
[8] cdh-5.11.x
Which fusion backend do you wish to use? 5

Installing on Ambari

If you are using HDP-2.6.x, ensure you specify the correct platform version: versions 2.6.0 and 2.6.1 need a separate installer from 2.6.2 and above.

MapR availability

The MapR versions of Hadoop have been removed from the trial version of WANdisco Fusion in order to reduce the size of the installer for most prospective customers. These versions are run by a small minority of customers, while their presence nearly doubled the size of the installer package. Contact WANdisco Inc. if you need to evaluate WANdisco Fusion running with MapR.

Additional available packages

[1] mapr-4.0.1
[2] mapr-4.0.2
[3] mapr-4.1.0
[4] mapr-5.0.0

URI

MapR needs to use WANdisco Fusion’s native "fusion:///" URI, instead of the default hdfs:///.

Ensure that during installation you select the Use WANdisco Fusion URI with HCFS file system URI option.

Superuser

If you install into a MapR cluster, you need to assign the MapR superuser system account/group mapr if you need to run WANdisco Fusion using the fusion:/// URI. See the requirement for MapR Client Configuration. See the requirement for MapR impersonation.

When using MapR and doing a TeraSort run, if you run without the simple partitioner configuration, the YARN containers will fail with a Fusion Client ClassNotFoundException. The remedy is to set yarn.application.classpath on each node’s yarn-site.xml. (FUI-1853)

-

Next, set the system user and group for running the application.

We strongly advise against running Fusion as the root user.
For default CDH setups, the user should be set to 'hdfs'. However, you should choose a user appropriate for running HDFS commands on your system.
Which user should WANdisco Fusion run as? [hdfs]
Checking 'hdfs' ...
... 'hdfs' found.
Please choose an appropriate group for your system. By default CDH uses the 'hdfs' group.
Which group should WANdisco Fusion run as? [hdfs]
Checking 'hdfs' ...
... 'hdfs' found.

-

The installer searches for the commonly used account and group, assigning these by default. Check the summary to confirm that your chosen settings are appropriate:

Installing with the following settings:
Installation Prefix: /opt/wandisco
User and Group: hdfs:hdfs
Hostname: <your.fusion.hostname>
WANdisco Fusion Admin UI Listening on: 0.0.0.0:8083
WANdisco Fusion Admin UI Minimum Memory: 128
WANdisco Fusion Admin UI Maximum memory: 512
Platform: <your selected platform and version>
WANdisco Fusion Server Hostname and Port: <your.fusion.hostname>:8082
Do you want to continue with the installation? (Y/n)

If these settings are correct then enter "Y" to complete the installation of the WANdisco Fusion server.

-

The package will now install.

Installing <your selected packages> server packages:
<your selected server package> ... Done
<your selected ihc-server package> ... Done
Installing plugin packages:
<any selected plugin packages> ... Done
Installing fusion-ui-server package:
fusion-ui-server-<your version>.noarch.rpm ... Done
Starting fusion-ui-server: [ OK ]
Checking if the GUI is listening on port 8083: .......Done

-

The WANdisco Fusion server will now start up:

Please visit <your.fusion.hostname> to complete installation of WANdisco Fusion
If <your.fusion.hostname> is internal or not available from your browser, replace this with an externally available address to access it.

At this point the WANdisco Fusion server and corresponding IHC server will be installed. The next step is to configure the WANdisco Fusion UI through a browser or using the silent installation script.
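Before switching to the browser, you may want to confirm from a terminal that the UI server answers on the port you chose. The sketch below is illustrative only: the helper names fusion_ui_url and fusion_ui_status are hypothetical, it assumes curl is installed, and it assumes the UI is served over plain HTTP on the default port 8083 unless you pass another port.

```shell
#!/bin/sh
# Hypothetical helper: build the UI URL from a hostname and an optional port.
# The default of 8083 matches the installer prompt shown earlier.
fusion_ui_url() {
  printf 'http://%s:%s/\n' "$1" "${2:-8083}"
}

# Hypothetical helper: print the HTTP status code returned by the UI.
# curl prints 000 when no connection could be made.
fusion_ui_status() {
  curl -s -o /dev/null --max-time 5 -w '%{http_code}' "$(fusion_ui_url "$@")"
}

# Example (fusion01.example.com is a placeholder hostname):
#   fusion_ui_status fusion01.example.com 8083
```

Any HTTP status other than 000 shows that something is listening on that address. It does not by itself confirm that the installation completed correctly.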

4.1.2. Configure WANdisco Fusion through a browser

Follow this section to complete the installation by configuring WANdisco Fusion using a browser-based graphical user interface.

|

Silent Installation

For large deployments it may be worth using the Silent Installation option.

|

-

Open a web browser and point it at the provided URL. e.g.

http://<your.fusion.hostname>.com:8083/

-

In the first "Welcome" screen you’re asked to choose between Create a new Zone and Add to an existing Zone.

Figure 7. Welcome

Make your selection as follows:

- Adding a new WANdisco Fusion cluster

-

Select Add Zone.

- Adding additional WANdisco Fusion servers to an existing WANdisco Fusion cluster

-

Select Add to an existing Zone.

High Availability for WANdisco Fusion / IHC Servers

It’s possible to enable High Availability in your WANdisco Fusion cluster by adding additional WANdisco Fusion/IHC servers to a zone. These additional nodes ensure that, in the event of a system outage, there will remain sufficient WANdisco Fusion/IHC servers running to maintain replication.

Add HA nodes to the cluster using the installer and choosing to Add to an existing Zone. A new node name will be assigned, although you can choose your own label if you prefer.

-

Run through the installer’s detailed Environment checks. For more details about exactly what is required and checked for, see the pre-requisites checklist.

Figure 8. Installer screen

-

On clicking validate the installer will run through a series of checks of your system’s hardware and software setup and warn you if any of WANdisco Fusion’s prerequisites are missing.

Figure 9. Validation results

Any element that fails the check should be addressed before you continue the installation. Warnings may be ignored for the purposes of completing the installation, especially if only for evaluation purposes and not for production. However, when installing for production, you should address all warnings, or at least take note of them and exercise due care if you continue the installation without resolving and revalidating.

-

Upload the license file.

Figure 10. Installer screen

The conditions of your license agreement will be shown in the top panel.

-

In the lower panel is the EULA. Read through the EULA. When the scroll bar reaches the bottom you can select I agree to the EULA to continue, then click Next Step.

Figure 11. Verify license and agree to subscription agreement

-

Enter settings for the WANdisco Fusion server.

Figure 12. Fusion server settings

WANdisco Fusion Server

- Fully Qualified Domain Name / IP

-

The full hostname for the server.

- We have detected the following hostname/IP addresses for this machine.

-

The installer will try to detect the server’s hostname from its network settings. Additional hostnames will be listed on a dropdown selector.

- DConE Port

-

TCP port used by WANdisco Fusion for replicated traffic. Validation will check that the port is free and that it can be bound to.

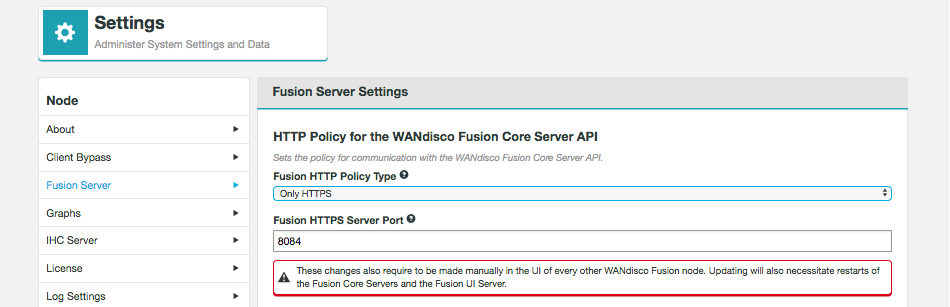

- Fusion HTTP Policy Type

-

Sets the policy for communication with the WANdisco Fusion Core Server API.

Select from one of the following policies:

Only HTTP - WANdisco Fusion will not use SSL encryption on its API traffic.

Only HTTPS - WANdisco Fusion will only use SSL encryption for API traffic.

Use HTTP and HTTPS - WANdisco Fusion will use both encrypted and un-encrypted traffic.

Known Issue

Currently, the HTTP policy and SSL settings each independently alter how WANdisco Fusion uses SSL, when they should be linked. You need to make sure that your HTTP policy selection and the use of SSL (enabled in the next section of the Installer) are in sync. If you choose either of the policies that use HTTPS, then you must enable SSL. If you stick with "Only HTTP" then you must ensure that you do not enable SSL. In a future release these two settings will be linked so it won’t be possible to have contradictory settings.

- Fusion HTTP Server Port

-

The TCP port used for standard HTTP traffic. Validation checks whether the port is free and that it can be bound.

- Maximum Java heap size (GB)

-

Enter the maximum Java Heap value for the WANdisco Fusion server. The minimum for production is 16GB but 64GB is recommended.

- Umask (currently 0022)

-

Set the default permissions applied to newly created files. The value 022 results in default directory permissions 755 and default file permissions 644. This ensures that the installation will be able to start up/restart.
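The relationship between the umask and the resulting permissions can be worked through in the shell. This is a small illustrative sketch: the function name mode_after_umask is hypothetical, and it relies on the standard convention that new directories start from mode 777 and new files from mode 666 before the umask bits are cleared.

```shell
#!/bin/sh
# Effective mode = base mode with the umask bits cleared.
# Both arguments are read as octal; a leading 0 is added for the shell.
mode_after_umask() {
  printf '%03o\n' $(( 0$1 & ~0$2 ))
}

mode_after_umask 777 022   # directories: prints 755
mode_after_umask 666 022   # files: prints 644
```

A more restrictive umask such as 027 would give 750 for directories and 640 for files, so check that the Fusion user can still read what it needs before moving away from 022.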

Advanced options

|

Only apply these options if you fully understand what they do.

The following advanced options provide a number of low level configuration settings that may be required for installation into certain environments.

The incorrect application of some of these settings could cause serious problems, so for this reason we strongly recommend that you discuss their use with WANdisco’s support team before enabling them.

|

Custom Fusion Request Port

You can provide a custom TCP port for the Fusion Request Port (also known as WANdisco Fusion client port).

The default value is 8023.

Strict Recovery

Two advanced options are provided to change the way that WANdisco Fusion responds to a system shutdown where WANdisco Fusion was not shut down cleanly.

Currently the default setting is to not enforce a panic event in the logs if, during startup, we detect that WANdisco Fusion wasn’t shut down cleanly.

This is suitable for using the product as part of an evaluation effort.

However, when operating in a production environment, you may prefer to enforce the panic event which will stop any attempted restarts to prevent possible corruption to the database.

-

DConE panic if db is dirty

This option lets you enable the strict recovery option for WANdisco’s replication engine, to ensure that any corruption to its Prevayler database doesn’t lead to further problems. When the checkbox is ticked, WANdisco Fusion will log a panic message whenever it is not properly shut down, due to either a system or an application problem.

-

App panic if db is dirty

This option lets you enable the strict recovery option for WANdisco Fusion’s database, to ensure that any corruption to its internal database doesn’t lead to further problems. When the checkbox is ticked, WANdisco Fusion will log a panic message whenever it is not properly shut down, due to either a system or an application problem.

Push Threshold

-

Set threshold manually

Set to blocksize, by default. See Set Push Threshold Manually

Chunk Size

The size of the 'chunks' used in file transfer.

-

Enter the settings for the IHC Server.

Figure 13. IHC Server details

- Maximum Java heap size (GB)

-

Enter the maximum Java Heap value for the WD Inter-Hadoop Communication (IHC) server. The minimum for production is 16GB but 64GB is recommended.

- IHC network interface

-

The hostname for the IHC server. It can be typed or selected from the dropdown on the right.

|

Don’t use Default route (0.0.0.0) for this address

Use an actual IP address for an interface that is accessible from the other cluster. Default route is already used by the WANdisco Fusion server on the other side to pick up a proper address for the IHC server at the remote end.

|

Advanced Options (optional)

- IHC server binding address

-

In the advanced settings you can decide which address the IHC server will bind to. The address is optional; by default the IHC server binds to all interfaces (0.0.0.0), using the port specified in the ihc.server field.

Once all settings have been entered, click Next step.

-

Next, you will enter the settings for your new Zone.

Figure 14. Zone information

Entry fields for zone properties:

- Zone Name

-

The name used to identify the zone in which the server operates.

- Node Name

-

The node’s assigned name, which is used in the UI and referenced in the node server’s hostname.

Induction failure

If induction fails, attempting a fresh installation may be the most straightforward cure. However, it is possible to push through an induction manually, using the REST API. See Handling Induction Failure.

Known issue with Node IDs

You must use different Node IDs for each zone. If you use the same name for multiple zones, then you will not be able to complete the induction between those nodes.

- Management Endpoint

-

If relevant to your set up, select the manager that you are using, for example Cloudera or Ambari. The selection will display the entry fields for your selected manager.

URI Selection

The default behavior for WANdisco Fusion is to fix all replication to the Hadoop Distributed File System / hdfs:/// URI. Setting the hdfs-scheme provides the widest support for Hadoop client applications, since some applications can’t support the available "fusion:///" URI and can only use the HDFS protocol. Each option is explained below:

- Use HDFS URI with HDFS file system

-

The element appears in a radio button selector:

This option is available for deployments where the Hadoop applications support neither the WANdisco Fusion URI nor the HCFS standards. WANdisco Fusion operates entirely within HDFS.

This configuration will not allow paths with the fusion:/// uri to be used; only paths starting with hdfs:/// or no scheme that correspond to a mapped path will be replicated. The underlying file system will be an instance of the HDFS DistributedFileSystem, which will support applications that aren’t written to the HCFS specification.

- Use WANdisco Fusion URI with HCFS file system

-

Figure 16. URI option B

This is the default option that applies if you don’t enable Advanced Options, and was the only option in WANdisco Fusion prior to version 2.6. When selected, you need to use fusion:// for all data that must be replicated over an instance of the Hadoop Compatible File System. If your deployment includes Hadoop applications that are either unable to support the Fusion URI or are not written to the HCFS specification, this option will not work.

| Platforms that must be run with Fusion URI with HCFS: |

|---|

Azure |

LocalFS |

OnTapLocalFs |

UnmanagedBigInsights |

UnmanagedSwift |

UnmanagedGoogle |

UnmanagedS3 |

UnmanagedEMR |

MapR |

- Use Fusion URI with HDFS file system

-

Figure 17. URI option C


This differs from the default in that while the WANdisco Fusion URI is used to identify data to be replicated, the replication is performed using HDFS itself. This option should be used if you are deploying applications that can support the WANdisco Fusion URI but not the Hadoop Compatible File System.

Benefits of HDFS.


- Use Fusion URI and HDFS URI with HDFS file system

-

This "mixed mode" supports all the replication schemes (fusion://, hdfs:// and no scheme) and uses HDFS for the underlying file system, to support applications that aren’t written to the HCFS specification.

Figure 18. URI option D

Advanced Options

|

Only apply these options if you fully understand what they do.

The following Advanced Options provide a number of low level configuration settings that may be required for installation into certain environments.

The incorrect application of some of these settings could cause serious problems, so for this reason we strongly recommend that you discuss their use with WANdisco’s support team before enabling them.

|

- Custom UI Host

-

To change the host the UI binds to, enter your UI host or select it from the drop down below.

- Custom UI Port

-

To change the port the UI binds to, enter the port number for the Fusion UI. Make sure this port is available.

- External UI Address

-

The address external processes should use to connect to the UI. This is the address used by, for example, the Jump to node button on the UI Nodes tab. Depending on your system configuration this may be different to the internal address used when accessing the node via a browser.

-

In the lower panel you now need to configure the Cloudera or Ambari manager if relevant to your set up.

Figure 20. Manager Configuration

- Manager Host Name / IP

-

The FQDN for the server the manager is running on.

- Port

-

The TCP port the manager is served from. The default is 7180.

- Username

-

The username of the account that runs the manager. This account must have admin privileges on the Management endpoint.

- Password

-

The password that corresponds with the above username.

- SSL

-

Tick the SSL checkbox to use https in your Manager Host Name and Port. You may be prompted to update the port if you enable SSL but don’t update from the default http port.

Once you have entered the information click Validate.

- Cluster manager type

-

Validates connectivity with the cluster manager.

- HDFS service state

-

WANdisco Fusion validates that the HDFS service is running. If it is unable to confirm the HDFS state a warning is given that will tell you to check the UI logs for possible errors. See the Logs section for more information.

- HDFS service health

-

WANdisco Fusion validates the overall health of the HDFS service. If the installer is unable to communicate with the HDFS service then you’re told to check the WANdisco Fusion UI logs for any clues.

See the Logs section for more information.

- HDFS service maintenance mode

-

WANdisco Fusion looks to see if HDFS is currently in maintenance mode. Both Cloudera and Ambari support this mode for when you need to make changes to your Hadoop configuration or hardware. It suppresses alerts for a host, service, role or, if required, the entire cluster.

WANdisco Fusion does not require maintenance mode to be off; this validation is simply to bring the state to your attention.

- Fusion node as HDFS client

-

Validates that this Fusion node is an HDFS client.

-

Enter the security details applicable to your deployment.

Figure 21. Security

- Username

-

The username for the controlling account that will be used to access the WANdisco Fusion UI.

- Password

-

The password used to access the WANdisco Fusion UI.

- Confirm Password

-

A verification that you have correctly entered the above password.

-

At this stage of the installation you are provided with a complete summary of all of the entries that you have so far made. Go through the options and check each entry.

Figure 22. Summary

Once you are happy with the settings and all your WANdisco Fusion clients are installed, click Deploy Fusion Server.

-

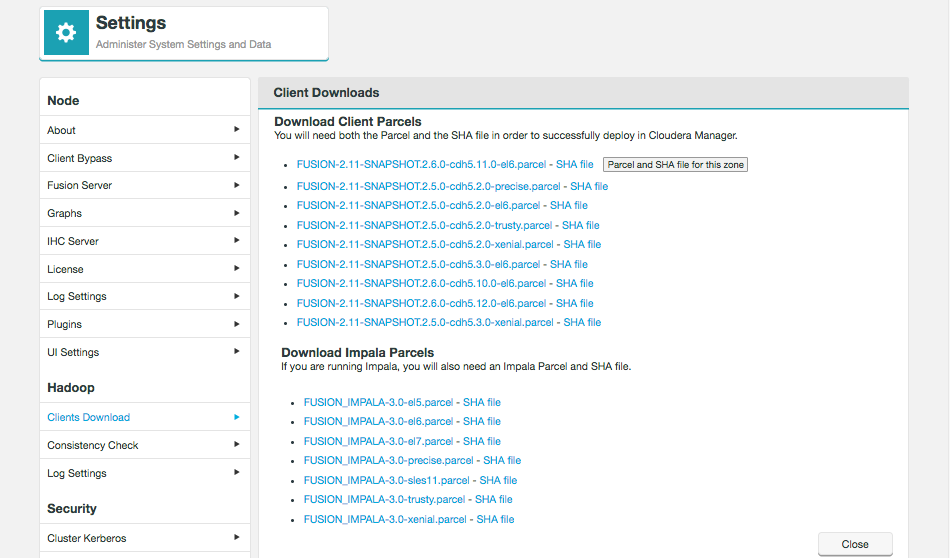

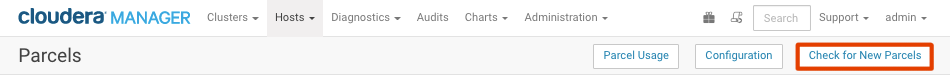

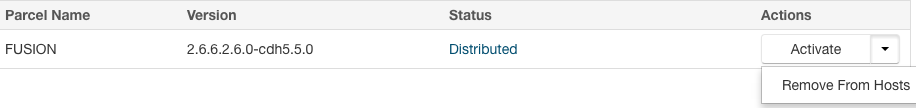

In the next step you need to place the WANdisco Fusion client parcel on the manager node and distribute it to all nodes in the cluster. The WANdisco Fusion client is required to support WANdisco Fusion’s data replication across the Hadoop ecosystem.

Follow the on-screen instructions relevant to your installation. This may involve going to the UI of your manager.

Figure 23. Clients

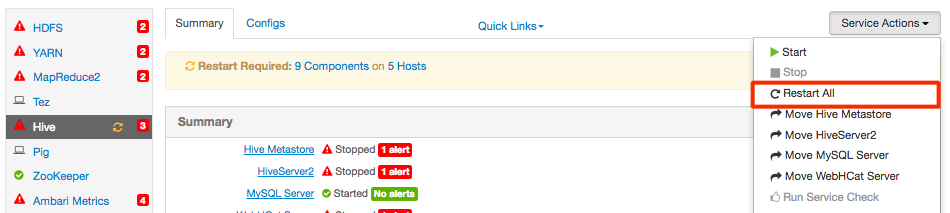

Ambari Installation

If you are installing onto a platform that is running Ambari, once the clients are installed you should log in to Ambari and restart services that are flagged as waiting for a restart. This will apply to MapReduce and YARN, in particular.

|

Potential failures on restart

In some deployments, particularly running HBase, you may find that you experience failures after restarting. In these situations if possible, leave the failed service down until you have completed the next step where you will restart WANdisco Fusion.

|

If you are running Ambari 1.7, you’ll be prompted to confirm this is done.

Confirm that you have completed the restarts.

|

Restarting Ambari

If using centos6/rhel6 we recommend using the following command to restart:

initctl restart ambari-server

instead of

service ambari-server restart

|

|

Important! If you are installing on Ambari 1.7 or CDH 5.3.x

Additionally, due to a bug in Ambari 1.7, and an issue with the classpath in CDH 5.3.x, before you can continue you must log into Ambari/Cloudera Manager and complete a restart of HDFS, in order to re-apply WANdisco Fusion’s client configuration.

|

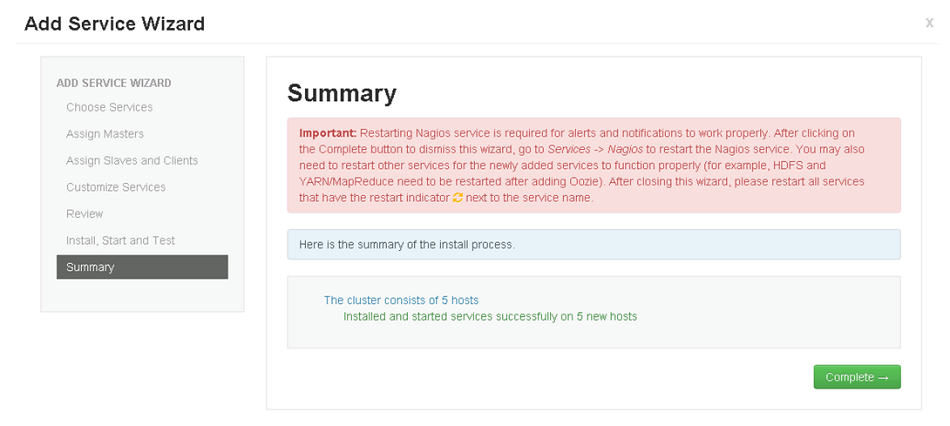

-

Configuration is now complete. You may receive notices or warning messages if, for example, your clients have not yet been installed. You can now address any client installations, then click Revalidate Client Install to make the warning go away. Once you have followed the on screen instructions click Start WANdisco Fusion to continue.

Figure 26. Startup

-

If you have existing nodes you can induct them now. If you would rather induct them later, click Skip Induction.

Figure 27. Induction

- Fully Qualified Domain Name

-

The fully qualified domain name of the node that you wish to connect to.

- Fusion Server Port

-

The TCP port used by the remote node that you are connecting to. The default port is 8082.

|

No induction for the first installed node

When you install the first node, you can’t complete an induction. Instead you will click "Skip Induction".

|

|

Installation on second node

If you have just installed on a second node, you now need to check in core-site.xml that hadoop.proxyuser.<fusionusername>.hosts has the expected value.

|
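The proxy user check mentioned above can be done from a terminal on the node. The sketch below is an illustration under stated assumptions: the helper names are hypothetical, it assumes the hdfs command is on the PATH and reads the same core-site.xml that the cluster uses, and it uses hdfs as an example Fusion user name.

```shell
#!/bin/sh
# Hypothetical helper: build the core-site.xml property name for a given
# Fusion system user.
proxyuser_hosts_key() {
  printf 'hadoop.proxyuser.%s.hosts\n' "$1"
}

# Hypothetical helper: print the value that the local Hadoop client
# configuration resolves for that property. Requires the hdfs CLI.
proxyuser_hosts_value() {
  hdfs getconf -confKey "$(proxyuser_hosts_key "$1")"
}

# Example, assuming Fusion runs as the hdfs user:
#   proxyuser_hosts_value hdfs
```

Compare the printed value with the hosts you expect to be allowed. What counts as the expected value depends on your deployment.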

Configuration

Once WANdisco Fusion has been installed on all data centers you can proceed with setting up replication on your HDFS file system. You should plan your requirements ahead of the installation, matching up your replication with your cluster to maximize performance and resilience. The next section takes a brief look at an example configuration and runs through the necessary steps for setting up data replication between two data centers.

Setting up Replication

The following steps are used to start replicating HDFS data. The detail of each step will depend on your cluster setup and your specific replication requirements, although the basic steps remain the same.

-

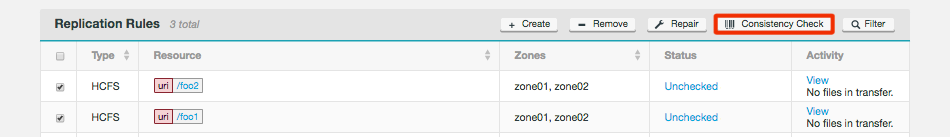

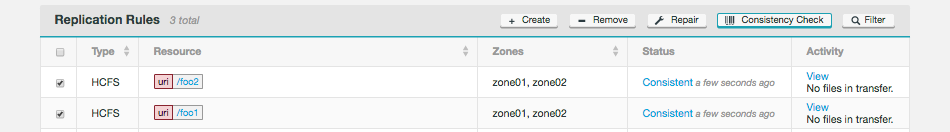

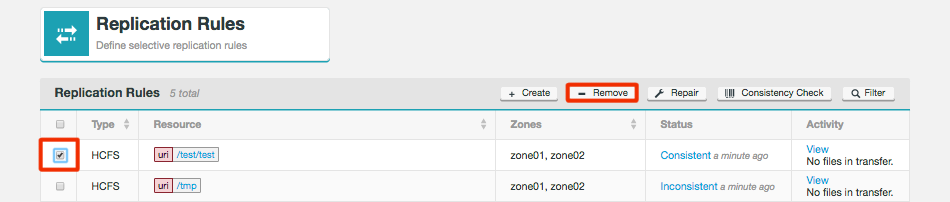

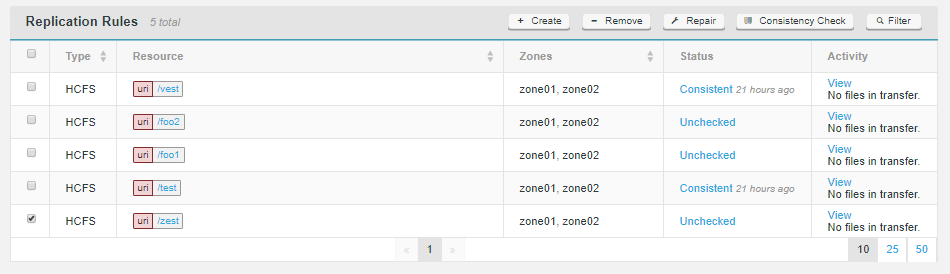

Create and configure a Replication Rule. See Replication Rules.

-

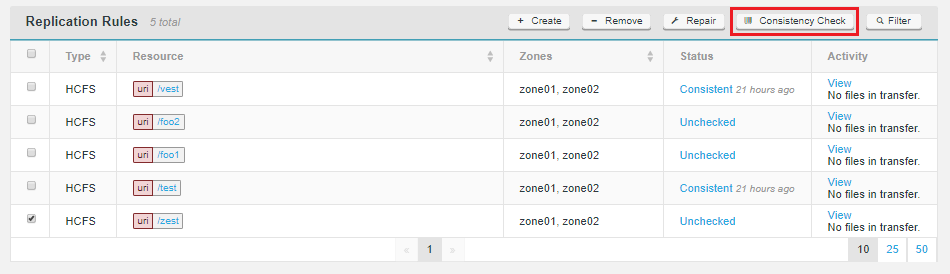

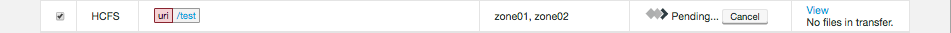

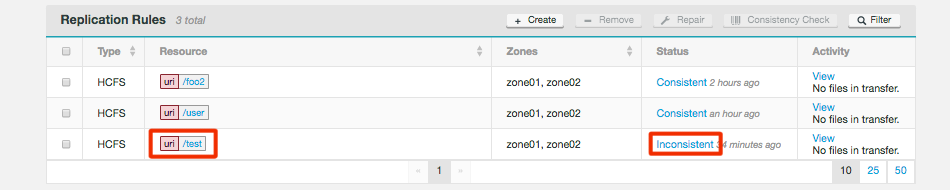

Perform a consistency check on your replication rule. See Consistency Check.

-

Configure your Hadoop applications to use WANdisco’s protocol.

-

Run Tests to validate that your replication rule remains consistent while data is being written to each data center.

4.2. Silent Installation

The "Silent" installation tools are still under development, although, with a bit of scripting, it should now be possible to automate WANdisco Fusion node installation. The following section looks at the provided tools, in the form of a number of scripts, which automate different parts of the installation process.

|

Client Installations

The silent installer does not handle the deployment of client stacks/parcels. You must be aware of the following:

Stacks/Parcels must be in place before the silent installer is run. This includes restarting/checking for parcels on their respective managers.

Failure to do so will leave the HDFS cluster without Fusion clients while running with a configuration that expects them to be there. This can be fixed by reverting service configs if necessary.

See Installing Parcels and Stacks.

|

4.2.1. Overview

The silent installation process supports two levels:

Unattended installation handles just the command line steps of the installation, leaving the web UI-based configuration steps in the hands of an administrator. See unattended installation.

Fully Automated also includes the steps to handle the configuration without the need for user interaction.

4.2.2. Unattended Installation

Use the following command for an unattended installation where an administrator will complete the configuration steps using the browser UI.

sudo FUSIONUI_USER=x FUSIONUI_GROUP=y FUSIONUI_FUSION_BACKEND_CHOICE=z ./fusion-ui-server_rpm_installer.sh

4.2.3. Set the environment

There are a number of properties that need to be set up before the installer can be run:

- FUSIONUI_USER

-

User which will run WANdisco Fusion services. This should match the user who runs the hdfs service.

- FUSIONUI_GROUP

-

Group of the user which will run Fusion services. The specified group must be one that FUSIONUI_USER is in.

|

Check FUSIONUI_USER is in FUSIONUI_GROUP

Verify that your chosen user is in your selected group.

> groups hdfs
hdfs : hdfs hadoop

|

- FUSIONUI_FUSION_BACKEND_CHOICE

-

Should be one of the supported package names from the following list. The list includes all options; not all will be available on a single installer:

|

Check your release notes

Check the release notes for your version of WANdisco Fusion to be sure the packages are supported on your version.

|

-

cdh-5.4.0:2.6.0-cdh5.4.0

-

cdh-5.5.0:2.6.0-cdh5.5.0

-

cdh-5.6.0:2.6.0-cdh5.6.0

-

cdh-5.8.0:2.6.0-cdh5.8.0

-

cdh-5.9.0:2.6.0-cdh5.9.0

-

cdh-5.10.0:2.6.0-cdh5.10.0

-

cdh-5.11.0:2.6.0-cdh5.11.0

-

cdh-5.12.0:2.6.0-cdh5.12.0

-

cdh-5.13.0:2.6.0-cdh5.13.0 (v2.11.1 onwards)

-

cdh-5.14.0:2.6.0-cdh5.14.0 (v2.11.2 onwards)

-

emr-5.3.0:2.7.3-amzn-1

-

emr-5.4.0:2.7.3-amzn-1

-

gcs-1.0:2.7.3

-

gcs-1.1:2.7.3

-

hdi-3.5:2.7.3.2.5.0.0-1245

-

hdi-3.6:2.7.3.2.6.2.0-147

-

hdp-2.3.0:2.7.1.2.3.0.0-2557

-

hdp-2.4.0:2.7.1.2.4.0.0-169

-

hdp-2.5.0:2.7.3.2.5.0.0-1245

-

hdp-2.6.0:2.7.3.2.6.0.3-8

-

hdp-2.6.2:2.7.3.2.6.2.0-205

-

hdp-2.6.3:2.7.3.2.6.3.0-235 (v2.11.1 onwards)

-

hdp-2.6.4:2.7.3.2.6.4.0-91 (v2.11.1 onwards)

-

ibm-3.0:2.2.0

-

ibm-4.0:2.6.0 (support dropped from v2.11.2)

-

ibm-4.1:2.7.1

-

ibm-4.2:2.7.2

-

ibm-4.2.5:2.7.3-IBM-29

-

localfs-2.7.0:2.7.0

-

mapr-5.2.0:2.7.0-mapr-1607

-

asf-2.5.0:2.5.0

-

asf-2.6.0:2.6.0

-

asf-2.7.0:2.7.0

|

(ontap)/(s3)/(swt)

Each of these versions uses the same package "asf-2.5.0:2.5.0".

|

This mode only automates the initial command line installation step. The configuration steps still need to be handled manually in the browser.
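The environment checks described in this section can be combined into a short pre-flight script that runs before the unattended command. The following is a sketch, not an official tool: the helper names are hypothetical, the example values (hdfs, hdfs and the cdh-5.11.0 package) are placeholders, and the format check only looks at the shape of the backend value, not at whether your installer ships that package.

```shell
#!/bin/sh
# Hypothetical pre-flight helpers for an unattended installation.

# Succeeds when the user is a member of the group (as reported by id -nG).
user_in_group() {
  for _g in $(id -nG "$1" 2>/dev/null); do
    [ "$_g" = "$2" ] && return 0
  done
  return 1
}

# Succeeds when the value has the "<package>:<hadoop-version>" shape used in
# the list above, for example cdh-5.11.0:2.6.0-cdh5.11.0.
backend_choice_well_formed() {
  case "$1" in
    *:*:*|:*|*:|'') return 1 ;;
    *:*) return 0 ;;
    *) return 1 ;;
  esac
}

# Example values; replace them with those appropriate for your cluster.
FUSIONUI_USER=hdfs
FUSIONUI_GROUP=hdfs
FUSIONUI_FUSION_BACKEND_CHOICE=cdh-5.11.0:2.6.0-cdh5.11.0

if user_in_group "$FUSIONUI_USER" "$FUSIONUI_GROUP" &&
   backend_choice_well_formed "$FUSIONUI_FUSION_BACKEND_CHOICE"; then
  echo "Pre-flight OK. Run:"
  echo "sudo FUSIONUI_USER=$FUSIONUI_USER FUSIONUI_GROUP=$FUSIONUI_GROUP FUSIONUI_FUSION_BACKEND_CHOICE=$FUSIONUI_FUSION_BACKEND_CHOICE ./fusion-ui-server_rpm_installer.sh"
else
  echo "Pre-flight failed: check the user, group and backend choice." >&2
fi
```

The script only prints the installer command rather than running it, so the final step stays under the administrator's control.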

4.2.4. Fully Automated Installation

This mode is closer to a full "Silent" installation as it handles the configuration steps as well as the installation.

Properties that need to be set:

- SILENT_CONFIG_PATH

-

Path for the environmental variables used in the command-line driven part of the installation. The paths are added to a file called silent_installer_env.sh.

- SILENT_PROPERTIES_PATH

-

Path to 'silent_installer.properties' file. This is a file that will be parsed during the installation, providing all the remaining parameters that are required for getting set up. The template is annotated with information to guide you through making the changes that you’ll need.

Take note that parameters stored in this file will automatically override any default settings in the installer.

- FUSIONUI_USER

-

User which will run Fusion services. This should match the user who runs the hdfs service.

- FUSIONUI_GROUP

-

Group of the user which will run Fusion services. The specified group must be one that FUSIONUI_USER is in.

- FUSIONUI_FUSION_BACKEND_CHOICE

-

Should be one of the supported package names.

- FUSIONUI_UI_HOSTNAME

-

The hostname for the WANdisco Fusion server.

- FUSIONUI_UI_PORT

-

Specify a fusion-ui-server port (default is 8083)

- FUSIONUI_TARGET_HOSTNAME

-

The hostname or IP of the machine hosting the WANdisco Fusion server.

- FUSIONUI_TARGET_PORT

-

The fusion-server port (default is 8082)

- FUSIONUI_MEM_LOW

-

Starting Java Heap value for the WANdisco Fusion server.

- FUSIONUI_MEM_HIGH

-

Maximum Java Heap.

- FUSIONUI_UMASK

-

Sets the default permissions applied to newly created files. The value 022 results in default directory permissions 755 and default file permissions 644. This ensures that the installation will be able to start up/restart.

- FUSIONUI_INIT

-

Sets whether the server will start automatically when the system boots. Set as "1" for yes or "0" for no

Cluster Manager Variables are deprecated

The cluster manager variables are mostly redundant as they generally get set in different processes though they currently remain in the installer code.

FUSIONUI_MANAGER_TYPE FUSIONUI_MANAGER_HOSTNAME FUSIONUI_MANAGER_PORT

- FUSIONUI_MANAGER_TYPE

-

"AMBARI", "CLOUDERA", "MAPR" or "UNMANAGED_EMR" and "UNMANAGED_BIGINSIGHTS" for IBM deployments. This setting can still be used but it is generally set at a different point in the installation now.

- validation.environment.checks.enabled

-

Permits the validation checks for environmental

- validation.manager.checks.enabled

-

Note manager validation is currently not available for S3 installs

- validation.kerberos.checks.enabled

-

Note kerberos validation is currently not available for S3 installs

If this part of the installation fails it is possible to re-run the silent_installer part of the installation by running:

/opt/wandisco/fusion-ui-server/scripts/silent_installer_full_install.sh /path/to/silent_installer.properties
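A fully automated run depends on a number of variables being set, so it can be useful to check for gaps before starting. The sketch below is illustrative: the function name missing_silent_vars is hypothetical, and the choice of which variables to treat as mandatory is an assumption made for the example, so adjust the list to match your installer and release notes.

```shell
#!/bin/sh
# Hypothetical helper: print the names of any listed variables that are
# unset or empty. The names come from the property list above; treating
# exactly these five as mandatory is an assumption for illustration.
missing_silent_vars() {
  _missing=""
  for _name in SILENT_CONFIG_PATH SILENT_PROPERTIES_PATH \
               FUSIONUI_USER FUSIONUI_GROUP FUSIONUI_FUSION_BACKEND_CHOICE; do
    # Look up the value of the variable whose name is stored in $_name.
    eval "_value=\${$_name:-}"
    if [ -z "$_value" ]; then
      _missing="${_missing:+$_missing }$_name"
    fi
  done
  printf '%s\n' "$_missing"
}

# Usage sketch: warn about anything that still needs a value.
_todo="$(missing_silent_vars)"
[ -z "$_todo" ] || echo "Set before running the installer: $_todo" >&2
```

An empty result only means the variables have values. It does not validate the paths or the contents of the properties file.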

4.2.5. Uninstall WANdisco Fusion UI only

This procedure is useful for UI-only installations:

sudo yum erase -y fusion-ui-server
sudo rm -rf /opt/wandisco/fusion-ui-server /etc/wandisco/fusion/ui

4.2.6. To UNINSTALL Fusion UI, Fusion Server and Fusion IHC Server (leaving any fusion clients installed):

See the Uninstall Script Usage Section for information on removing Fusion.

4.2.7. Silent Installation files

For every package of WANdisco Fusion there’s both an env.sh and a .properties file. The env.sh sets environment variables that complete the initial command step of an installation. The env.sh also points to a properties file that is used to automate the browser-based portion of the installer. The properties files for the different installation types are provided below:

- silent_installer.properties

-

standard HDFS installation.

- s3_silent_installer.properties

-

properties file for Amazon S3-based installation.

- swift_silent_installer.properties

-

file for Swift-based installation.
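When scripting several installation types, the mapping from type to properties file can be kept in one place. This is a minimal sketch: the function name and the short type labels hdfs, s3 and swift are hypothetical conventions for this example, while the file names are the ones listed above.

```shell
#!/bin/sh
# Hypothetical helper: map an installation type label to the name of the
# matching properties file. The labels are illustrative, not installer options.
properties_file_for() {
  case "$1" in
    hdfs)  echo "silent_installer.properties" ;;
    s3)    echo "s3_silent_installer.properties" ;;
    swift) echo "swift_silent_installer.properties" ;;
    *)     echo "unknown installation type: $1" >&2; return 1 ;;
  esac
}

# Example: /path/to is a placeholder for wherever you keep the file.
#   SILENT_PROPERTIES_PATH="/path/to/$(properties_file_for s3)"
```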

4.3. Manual installation

The following procedure covers the hands-on approach to installation and basic setup of a deployment that deploys over the LocalFileSystem. For the vast majority of cases you should use the previous Installer-based LocalFileSystem Deployment procedure.

|

Don’t do it this way unless you have to.

We provide this example to illustrate how a completely hands-on installation can be performed. We don’t recommend that you use it for a deployment unless you absolutely can’t use the installers. Instead, use it as a reference so that you can see what changes are made by our installer.

|

4.3.1. Non-HA Local filesystem setup

-

Start with the regular WANdisco Fusion setup. You can go through the installation either manually or using the installer.

-

When you select the $user:$group you should pick a master user account that will have complete access to the local directory that you plan to replicate. You can set this manually by modifying /etc/wandisco/fusion-env.sh, setting FUSION_SERVER_GROUP to $group and FUSION_SERVER_USER to $user.

-

Next, you’ll need to configure the core-site.xml, typically in /etc/hadoop/conf/, and override “fs.file.impl” to “com.wandisco.fs.client.FusionLocalFs”, “fs.defaultFS” to "file:///", and "fs.fusion.underlyingFs" to "file:///". (Make sure to add the usual Fusion properties as well, such as "fs.fusion.server".)

-

If you are running with the fusion URI (via “fs.fusion.impl”), you should still set its value to “com.wandisco.fs.client.FusionLocalFs”.

-

If you are running with Kerberos then you should also override “fusion.handshakeToken.dir” to point to some directory that will exist within the local directory you plan to replicate to/from. You should also make sure to have “fusion.keytab” and “fusion.principal” defined as usual.

-

Ensure that the local directory you plan to replicate to/from already exists. If not, create it and give it 777 permissions, or create a symlink (locally) that points to the local path you plan to replicate to/from.

-

For example, if you want to replicate /repl1/ but don’t want to create a directory at your root level, you can create a symlink named repl1 at your root level and point it to wherever you want your replicated directory to actually be. If you are using NFS, the symlink should point to /mnt/nfs/.

-

Set up an NFS mount.

Be sure to point your replicated directory to your NFS mount, either directly or using a symlink.
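The directory and symlink steps above can be sketched in shell as follows. This is a minimal illustration rather than anything the installer itself runs: the `SCRATCH` root is an assumption that stands in for `/` so that the sketch can run without root privileges, and the `repl1` and `mnt/nfs` names simply mirror the example paths in the text. On a real node you would drop the scratch prefix and point the link at your actual NFS mount.

```shell
# Sketch only: SCRATCH stands in for "/" so nothing outside a temp directory is touched.
SCRATCH="$(mktemp -d)"

# Stand-in for the NFS mount point (/mnt/nfs/ in the text). On a real node this
# would be an existing mount, not a directory created here.
NFS_TARGET="$SCRATCH/mnt/nfs"
mkdir -p "$NFS_TARGET"

# Open permissions, as the procedure calls for.
chmod 777 "$NFS_TARGET"

# Symlink at the (stand-in) root level so that /repl1 resolves to the NFS-backed path.
REPL_LINK="$SCRATCH/repl1"
ln -s "$NFS_TARGET" "$REPL_LINK"

# Show where the link points.
ls -ld "$REPL_LINK"
```

Using a symlink keeps the replicated path short (/repl1/) while the data itself lives wherever the mount is.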

4.3.2. HA local file system setup

-

Install Fusion UI, Server, IHC, and Client (for LocalFileSystem) on every node you plan to use for HA.

-

When you select the $user:$group, pick a master user account that has complete access to the local directory that you plan to replicate. You can set this manually by editing /etc/wandisco/fusion-env.sh, setting FUSION_SERVER_GROUP to $group and FUSION_SERVER_USER to $user.

-

Next, you’ll need to configure the core-site.xml, typically in /etc/hadoop/conf/, and override “fs.file.impl” to “com.wandisco.fs.client.FusionLocalFs”, “fs.defaultFS” to "file:///", and “fs.fusion.underlyingFs” to "file:///". (Make sure to add the usual Fusion properties as well, such as "fs.fusion.server").

-

If you are running with the fusion URI (via “fs.fusion.impl”), you should still set its value to “com.wandisco.fs.client.FusionLocalFs”.

-

If you are running with Kerberos then you should also override “fusion.handshakeToken.dir” to point to some directory that will exist within the local directory you plan to replicate to/from. You should also make sure to have “fusion.keytab” and “fusion.principal” defined as usual.

-

Ensure that the local directory you plan to replicate to/from already exists. If not, create it and give it 777 permissions, or create a symlink (locally) that points to the local path you plan to replicate to/from.

-

For example, if you want to replicate /repl1/ but don’t want to create a directory at your root level, you can create a symlink named repl1 at your root level and point it to wherever you want your replicated directory to actually be. If you are using NFS, the symlink should point to /mnt/nfs/.

-

Now follow a regular HA setup, making sure that you copy the core-site.xml and fusion-env.sh files to every HA node so that all nodes have the same configuration.

-

Create the replicated directory (or symlink to it) on every HA node and chmod it to 777.
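The core-site.xml overrides named in the steps above might look like the fragment that this sketch writes out. It is an illustration under stated assumptions, not a definitive configuration: it writes to a scratch file rather than to /etc/hadoop/conf/core-site.xml, the property names and class name are taken from the text, and the `FUSION_HOST:PORT` value is a placeholder that you would replace with your own Fusion server details.

```shell
# Sketch only: writes an example fragment to a scratch file, not to
# /etc/hadoop/conf/core-site.xml. Merge it by hand into your real configuration.
FRAGMENT="$(mktemp)"

cat > "$FRAGMENT" <<'EOF'
<configuration>
  <property>
    <name>fs.file.impl</name>
    <value>com.wandisco.fs.client.FusionLocalFs</value>
  </property>
  <property>
    <name>fs.defaultFS</name>
    <value>file:///</value>
  </property>
  <property>
    <name>fs.fusion.underlyingFs</name>
    <value>file:///</value>
  </property>
  <!-- Only relevant when running with the fusion URI -->
  <property>
    <name>fs.fusion.impl</name>
    <value>com.wandisco.fs.client.FusionLocalFs</value>
  </property>
  <!-- Placeholder value: replace with your Fusion server's host and port -->
  <property>
    <name>fs.fusion.server</name>
    <value>FUSION_HOST:PORT</value>
  </property>
</configuration>
EOF

# Count the properties written, as a quick sanity check.
grep -c '<name>' "$FRAGMENT"
```

For an HA deployment, the same fragment would need to be present in core-site.xml on every HA node. Kerberos-related properties are left out of this sketch.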

4.4. LocalFileSystem Installation

For most cloud deployments, a WANdisco Fusion node must be installed within the customer’s local cluster for data replication into cloud-based storage.

4.4.1. Installer-based LocalFileSystem Deployment

The following procedure covers the installation and setup of WANdisco Fusion deployed over the LocalFileSystem. This requires an administrator to enter details throughout the procedure. Once the initial settings are entered through the terminal session, the deployment to the LocalFileSystem is then completed through a browser.

Follow the first few steps given in the On-premises installation guide.

Make sure that you use the LocalFileSystem installer, for example fusion-ui-server-localfs_rpm_installer.sh.

Once the fusion-ui-server has started, follow the steps below to configure WANdisco Fusion for the LocalFileSystem through the browser.

-

In the first "Welcome" screen you’re asked to choose between Create a new Zone and Add to an existing Zone.

Make your selection as follows:

- Adding a new WANdisco Fusion cluster

-

Select Add Zone.

- Adding additional WANdisco Fusion servers to an existing WANdisco Fusion cluster

-

Select Add to an existing Zone.

Figure 28. Welcome screen

-

Run through the installer’s detailed Environment checks. For more details about exactly what is checked in this stage, see Environmental Checks in the Appendix.

Figure 29. Environmental checks

-

When you click Validate, the installer runs a series of checks on your system’s hardware and software setup and warns you if any of WANdisco Fusion’s prerequisites are not met.

Figure 30. Example check results

Address any failures before you continue the installation. Warnings may be ignored for the purposes of completing the installation, especially if the installation is only for evaluation purposes and not for production. However, when installing for production, you should address all warnings, or at least take note of them and exercise due care if you continue the installation without resolving and revalidating.

-

Select your license file and upload it.

Figure 31. Upload your license file

The conditions of your license agreement will be shown in the top panel.

-

In the lower panel is the EULA.

Figure 32. Verify license and agree to subscription agreement

Tick the checkbox I agree to the EULA to continue, then click Next Step.

-

Enter settings for the WANdisco Fusion server. See WANdisco Fusion Server for more information about what is entered during this step.

Figure 33. Server information

-

Enter the settings for the IHC Server. See the on-premises installation section for more information about what is entered during this step.

Figure 34. IHC Server information

-

Next, you will enter the settings for your new Node.

Figure 35. Zone information

- Zone Name

-

Give your zone a name to allow unique identification of a group of nodes.

- Node Name

-

A unique identifier that will help you find the node on the UI.

There are also advanced options. Use these only if you fully understand what they do:

- Custom UI Host

-

Enter your UI host or select it from the drop down below.

- Custom UI Port

-

Enter the port number for the Fusion UI.

- External UI Address

-

The address that external processes should use to connect to the UI.

Once these details are added, click Validate.

-

Enter the security details applicable to your deployment.

Figure 36. LocalFS installer - Security

If you are using Kerberos, tick the Use Kerberos for file system access check-box to enable Kerberos authentication on the local filesystem.

- Kerberos Token Directory

-

This defines the root token directory for the Kerberos Token field. Set it only if you are using LocalFileSystem with Kerberos and want tokens to be created within the NFS directory rather than on the actual LocalFileSystem. If left unset, it defaults to the original behavior, which is to create tokens in the /user/<username>/ directory.

The installer validates that the directory you give, or the default directory if you leave the field blank, can be written to by WANdisco Fusion.

- Configuration file path

-

System path to the Kerberos configuration file, e.g. /etc/krb5.conf

- Keytab file path

-

System path to your generated keytab file, e.g. /etc/krb5.keytab

Name and place the keytab where you like

These paths and file names can be anything you like, provided they are consistent with your field entries.

- Username

-

The username for the controlling account that will be used to access the WANdisco Fusion UI.

- Password

-

The password used to access the WANdisco Fusion UI.

- Confirm Password

-

A verification that you have correctly entered the above password.

-

At this stage of the installation you are provided with a complete summary of all the entries that you have made so far. Go through the options and check each entry.

Figure 37. LocalFS installer - Summary

-

There are no clients to install for a Local File System installation, so click Next Step. This step is reserved for deployments where HDFS clients need to be installed.

Figure 38. LocalFS installer - Clients

-

It’s now time to start up the Fusion server. Click Start WANdisco Fusion.

Figure 39. Startup

The Fusion server will now start up.

-

If you have existing nodes you can induct them now. If you would rather induct them later, click Skip Induction.

Induction will connect this second node to your existing "on-premises" node. When adding a node to an existing zone, users will be prompted for zone details at the start of the installer and induction will be handled automatically. Nodes added to a new zone will have the option of being inducted at the end of the install process where the user can add details of the remote node.

Figure 40. Induction

If you are inducting now, enter the following details, then click Start Induction.

- Fully Qualified Domain Name

-

The full address of the existing on-premises node.

- Fusion Server Port

-

The TCP port on which the on-premises node is running. Default: 8082

For the first node, skip this step. For all subsequent node installations, provide the FQDN or IP address and port of this first node.
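Before entering a fully qualified domain name for induction, it can help to confirm that the name has an entry in the local hosts file, since this section notes that a missing /etc/hosts entry can lead to a Kerberos principal error. The sketch below shows one way to run that check. The `host_in_hosts_file` helper, the sample file and the host names are all hypothetical and exist only for illustration; on a real LocalFS server you would pass /etc/hosts and the actual host name of the remote machine.

```shell
# host_in_hosts_file FILE HOSTNAME
# Succeeds if HOSTNAME appears as a name or alias on a non-comment line of FILE.
host_in_hosts_file() {
    awk -v host="$2" '
        {
            sub(/#.*/, "")                  # drop comments, including whole-line ones
            for (i = 2; i <= NF; i++)       # field 1 is the IP address
                if ($i == host) found = 1
        }
        END { exit found ? 0 : 1 }
    ' "$1"
}

# Sample data standing in for /etc/hosts.
SAMPLE_HOSTS="$(mktemp)"
cat > "$SAMPLE_HOSTS" <<'EOF'
127.0.0.1   localhost
# 10.0.0.9  commented-out.example.com
10.0.0.5    ec2-node.example.com ec2-node
EOF

if host_in_hosts_file "$SAMPLE_HOSTS" ec2-node.example.com; then
    echo "host entry present"
else
    echo "host entry missing"
fi
```

The check only inspects the hosts file. It does not verify DNS resolution or that the Fusion server port is reachable.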

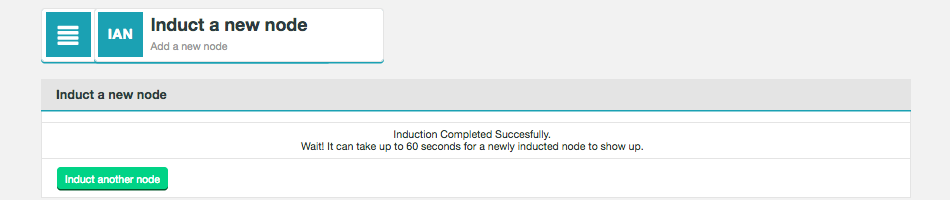

"Could not resolved Kerberos principal" errorYou need to ensure that the hostname of your EC2 machine has been added to the /etc/hosts file of your LocalFS server.What is induction?Multiple instances of WANdisco Fusion join together to form a replication network or ecosystem. Induction is the process used to connect each new node to an existing set of nodes.

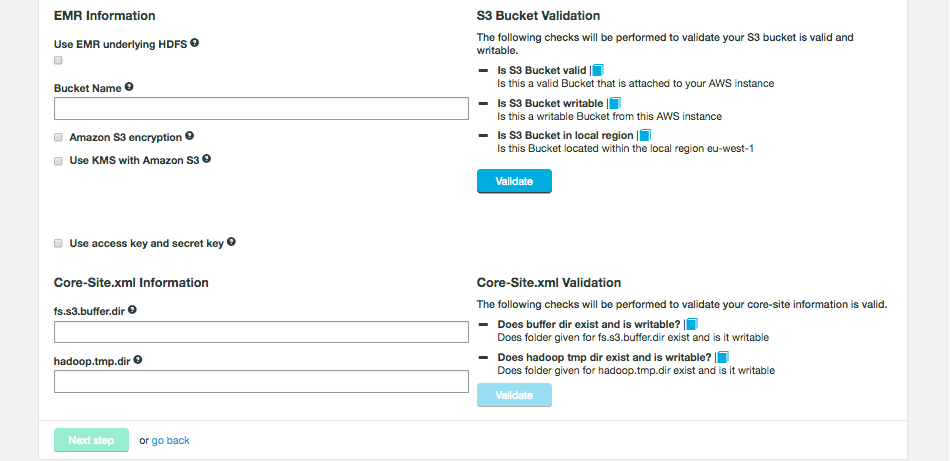

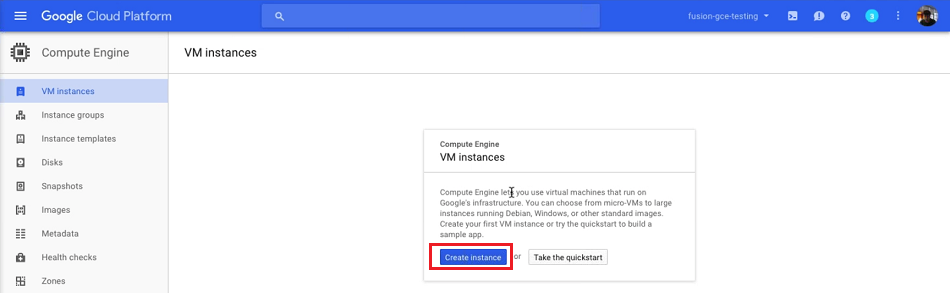

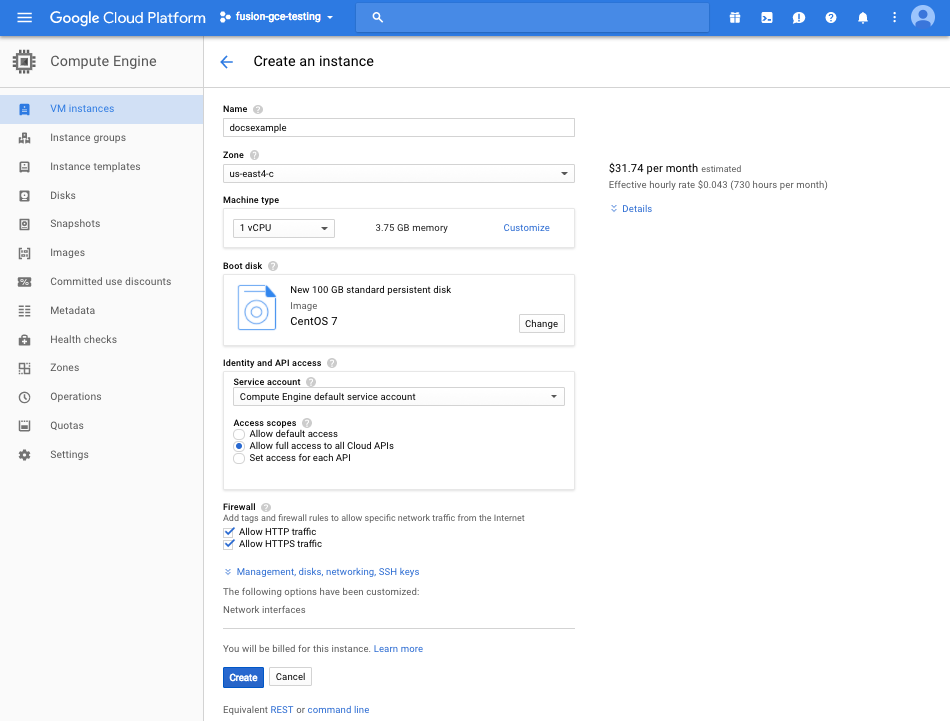

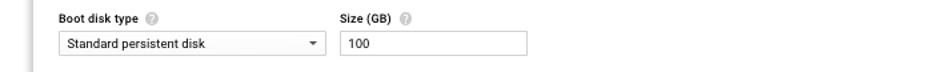

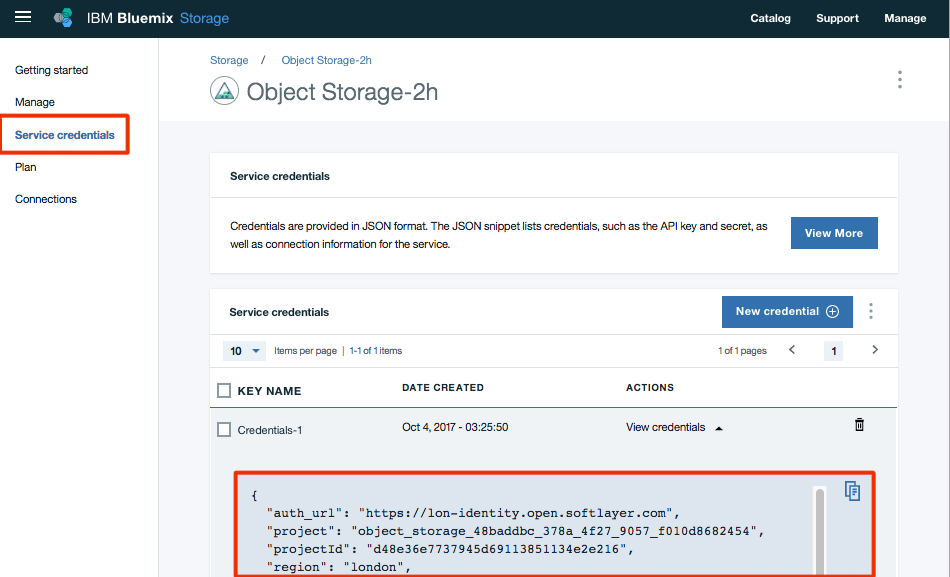

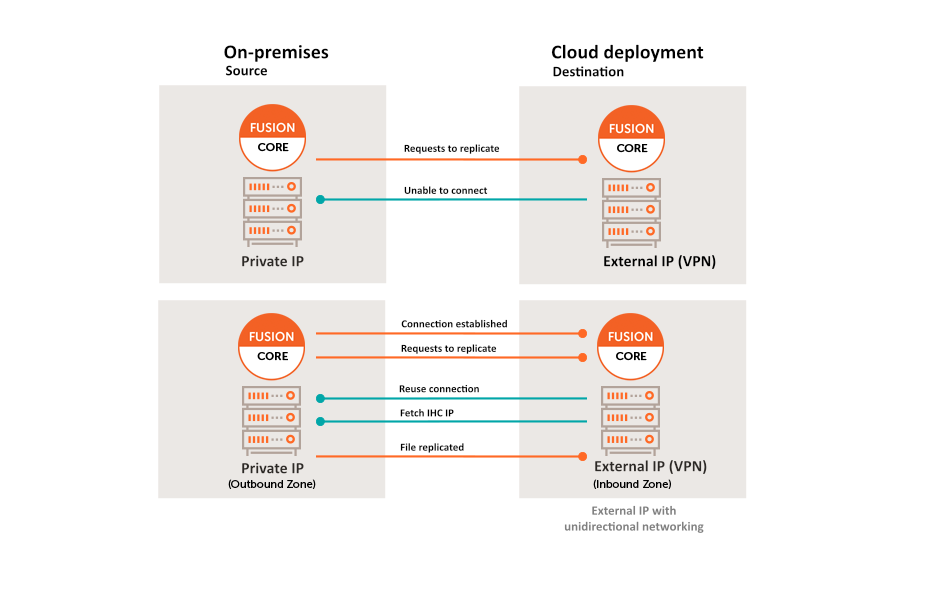

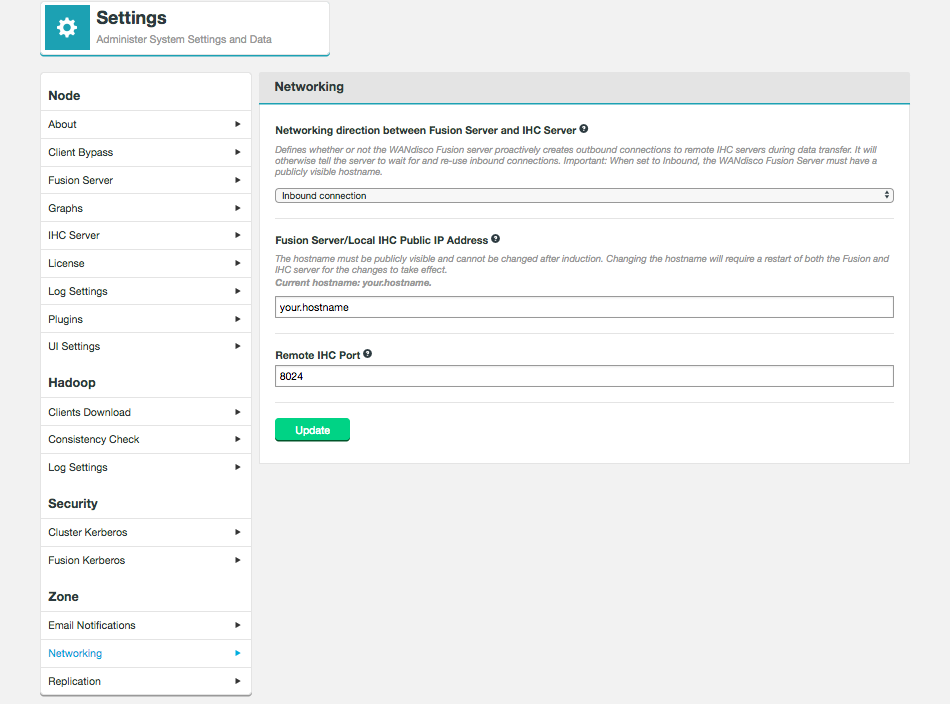

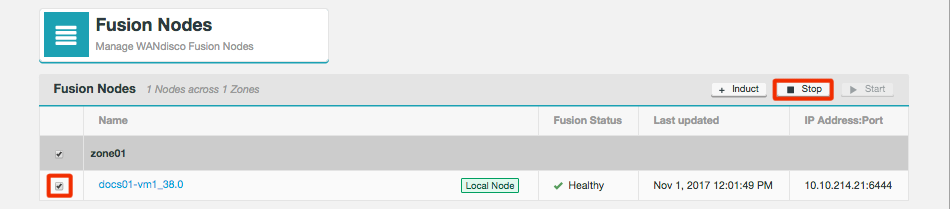

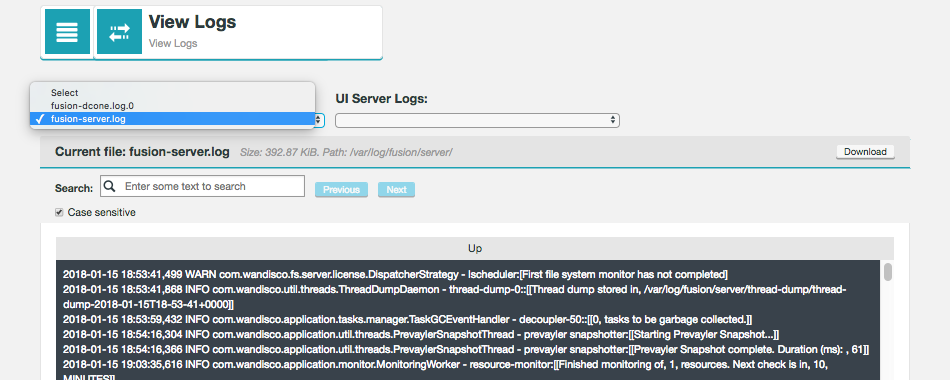

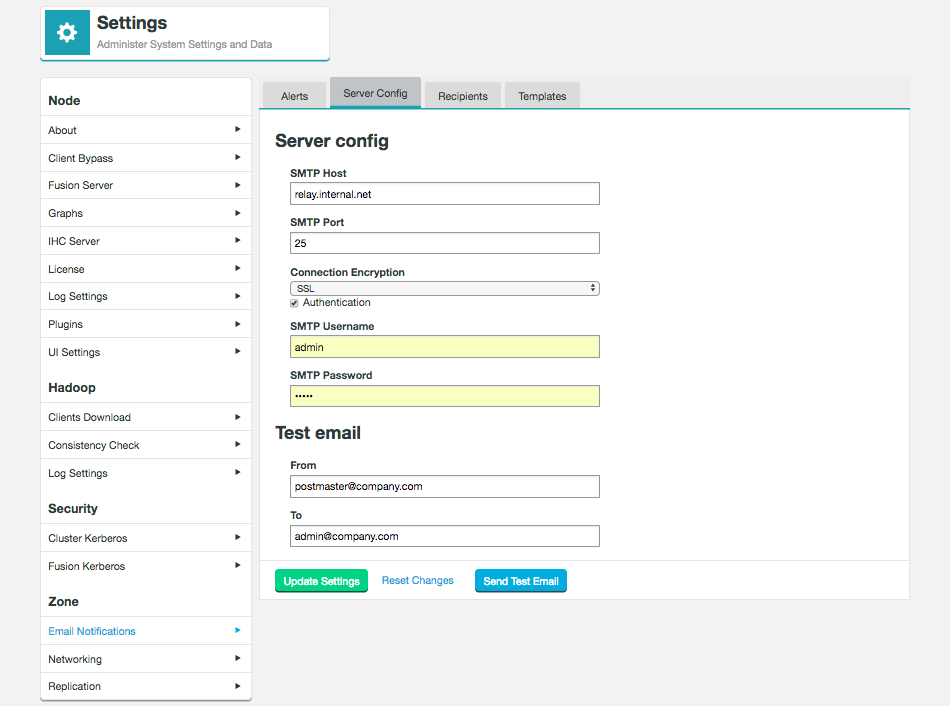

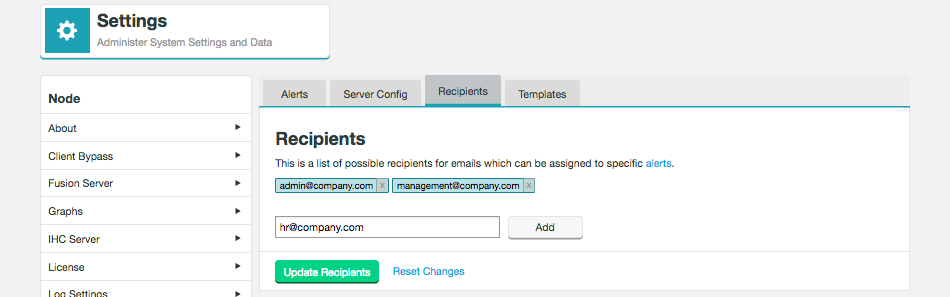

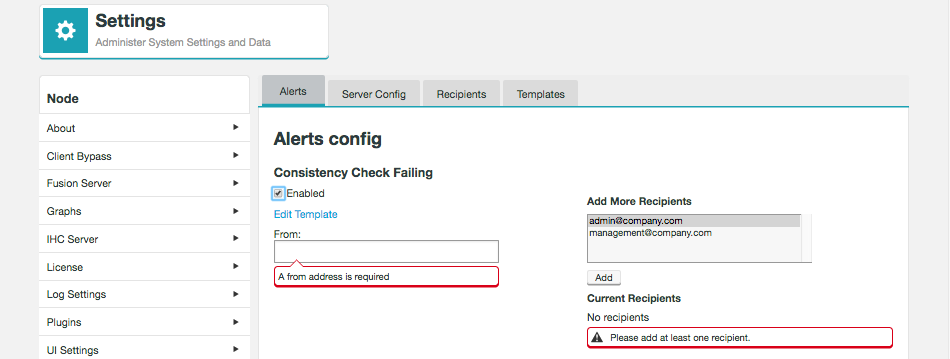

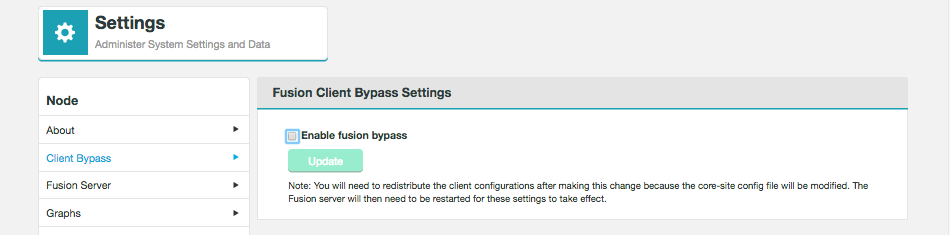

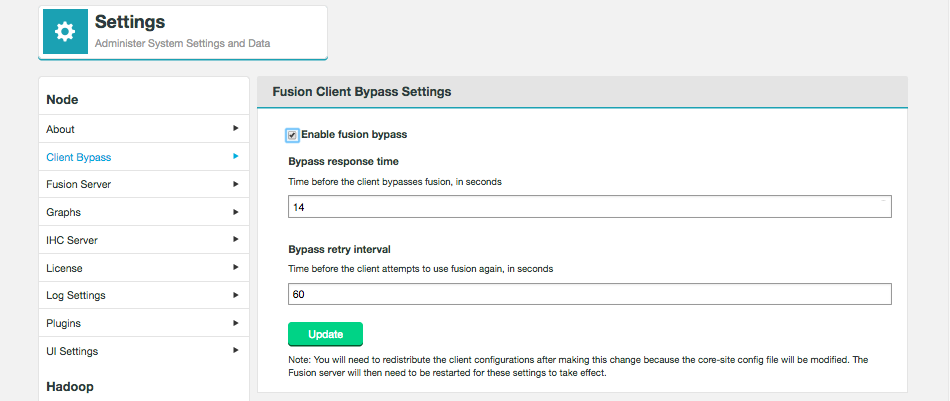

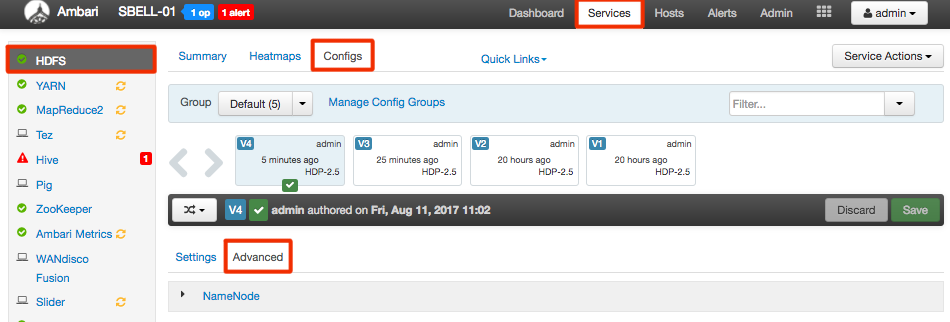

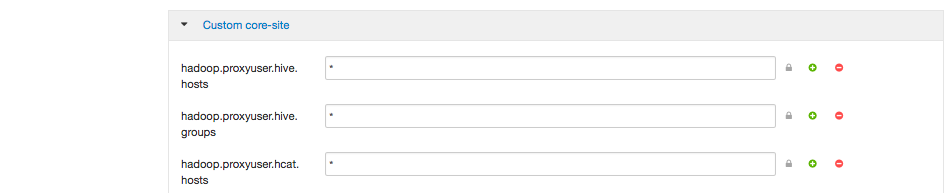

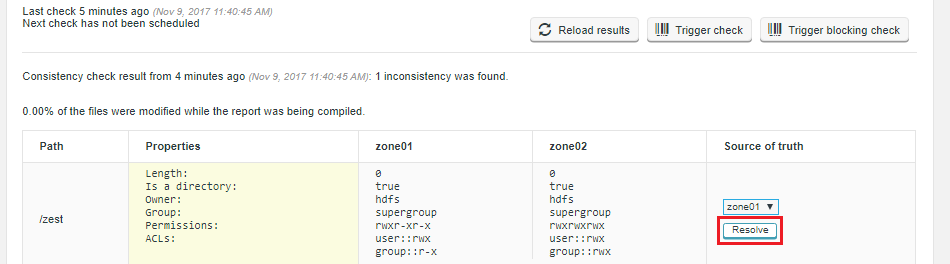

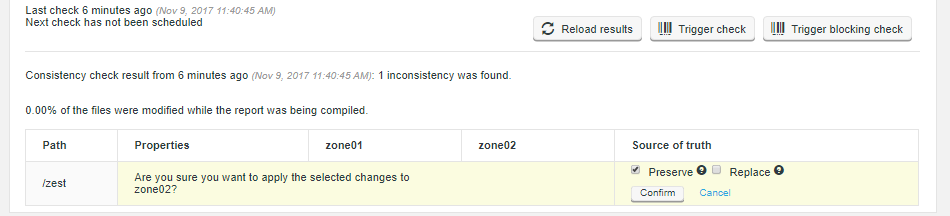

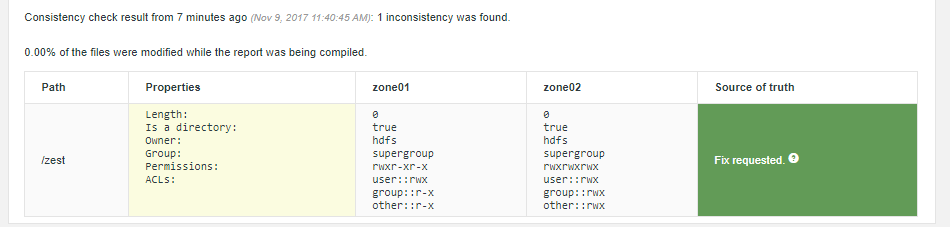

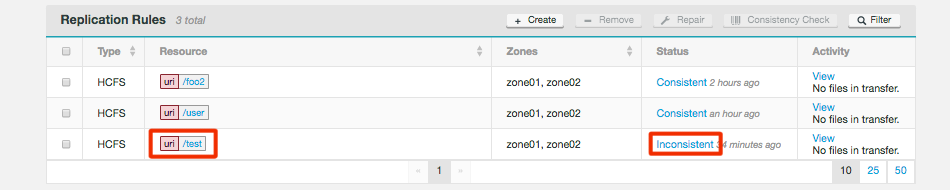

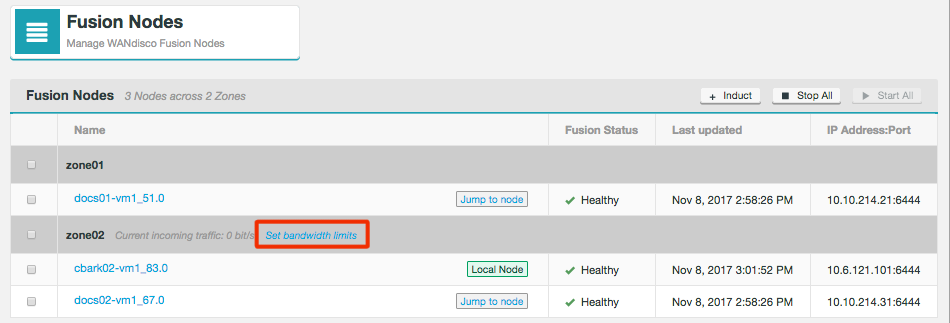

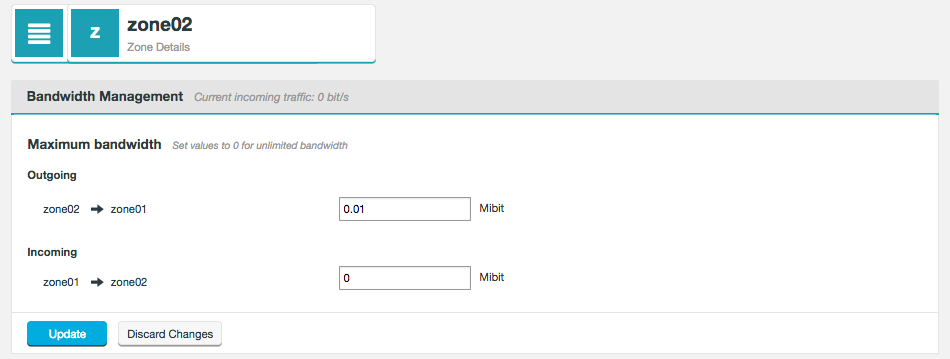

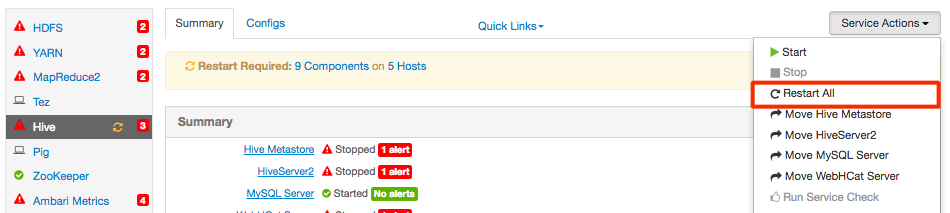

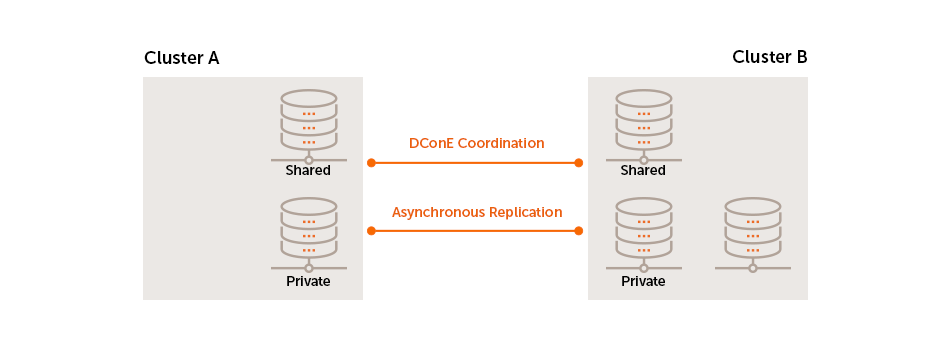

-